Customer support QA programs often feel punitive and disconnected, but they don’t have to be. By shifting the focus from catching mistakes to building skills, you can improve both performance and morale. Modern QA tools, especially AI-powered ones, let you evaluate 100% of interactions quickly and affordably, giving agents real-time feedback and actionable insights. Here’s how to make it work:

- Stop random sampling: Analyze every ticket with AI for as little as $0.01–$0.05 per interaction.

- Deliver timely feedback: Use real-time dashboards to address issues within hours, not months.

- Collaborate with agents: Involve them in QA planning, self-assessments, and calibration sessions.

- Set clear standards: Replace vague criteria with measurable, weighted metrics.

- Reward growth: Recognize achievements with specific praise, public acknowledgment, and career opportunities.

- Automate routine tasks: Let AI handle repetitive checks so human reviewers can focus on nuanced coaching.

The takeaway: QA should feel like a coaching tool, not a punishment. With the right approach, you’ll improve customer satisfaction, reduce agent burnout, and create a motivated team.



Shifting QA from Punishment to Growth

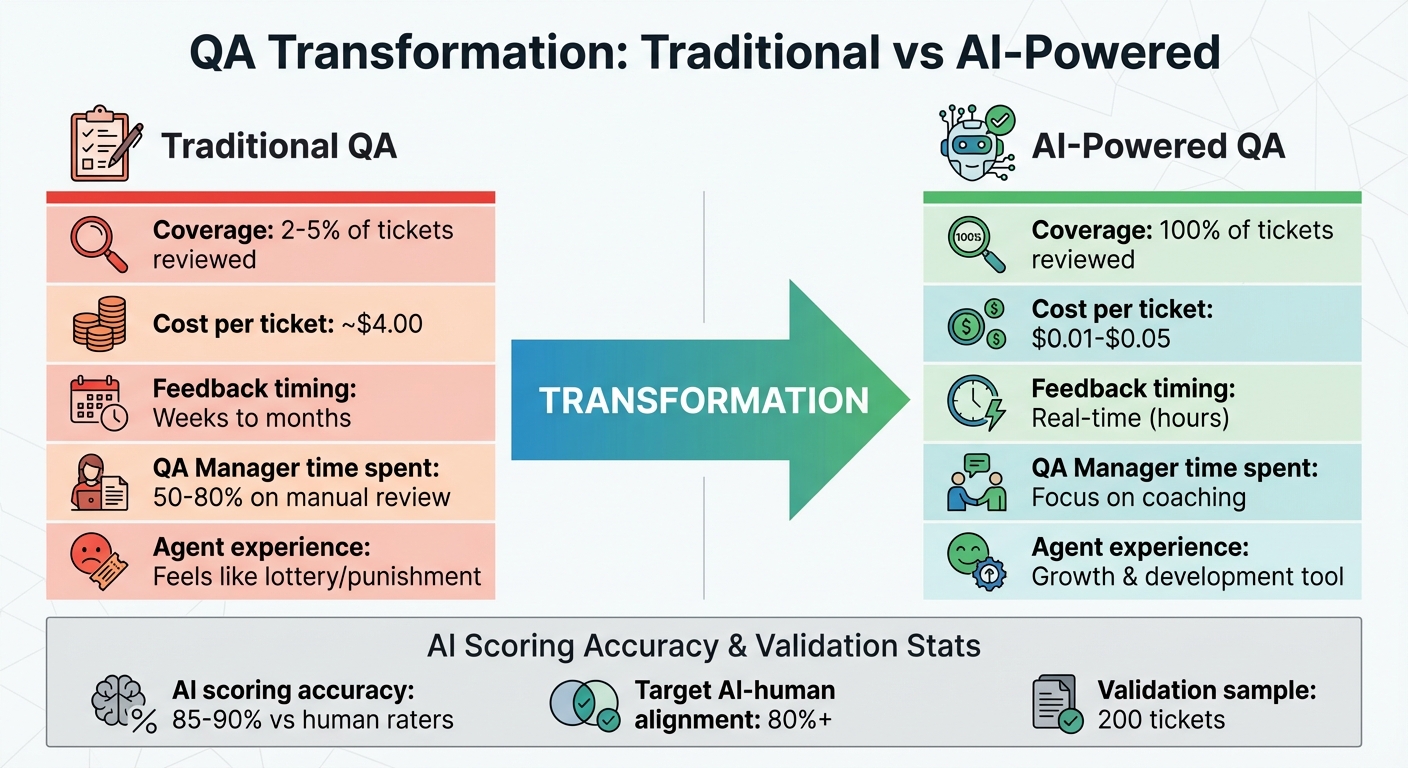

Traditional vs AI-Powered Customer Support QA: Cost and Coverage Comparison

Why Most QA Programs Hurt Morale

Let’s dive into why traditional QA programs often end up damaging team morale instead of boosting it.

Most QA systems only review a tiny percentage of customer interactions[3][1]. For agents, this feels like a lottery. Imagine handling hundreds of tickets in a week, only to have one random interaction picked apart. If that single interaction happens to be an outlier, agents can feel unfairly judged, which breeds frustration.

The problem doesn’t stop there. Feedback in manual QA systems is notoriously slow, sometimes taking weeks or even months to reach the agent[3]. By then, the interaction feels like ancient history, making it hard for agents to connect the feedback to their actions or use it to improve.

Even QA managers face challenges. They spend a staggering 50%–80% of their time manually reviewing tickets[3]. This leaves little room for meaningful coaching or team development. Instead, QA often devolves into a process that focuses on catching mistakes. For agents, it starts to feel like something to endure rather than a tool to help them grow.

Shifting QA from this punitive model to one centered on development can unlock real potential – both for individual agents and overall operations.

Using QA to Build Skills and Improve Operations

The key to transforming QA lies in moving from quality control (reactive error correction) to quality assurance (proactive skill-building). This mindset shift turns QA into a coaching tool rather than a policing mechanism.

Modern, AI-driven QA systems can analyze every single customer interaction for just $0.01 to $0.05 per ticket[1]. This comprehensive approach eliminates the randomness of traditional sampling. Managers can now identify patterns, like an agent whose performance dips on specific days or someone who struggles with certain tasks, such as refund requests. Instead of vague feedback like "be more empathetic", managers can provide actionable, data-driven advice tailored to each agent.

When QA becomes a tool for growth, agents start to see it differently. High scores can lead to tangible rewards, such as the ability to self-evaluate, promotions to senior roles, or opportunities to mentor newer team members. Agent performance metrics shift from being a source of anxiety to a ladder for career progression – a motivator rather than a threat.

This approach not only addresses the flaws in outdated QA systems but also sets the stage for broader strategies around agent recognition and career development, which we’ll explore further in the article.

Designing a QA Program Agents Will Trust

Building trust in a QA program starts with transparency, collaboration, and fairness. If agents feel QA is imposed on them rather than developed alongside them, it can harm morale. The key? Bring your team into the process from the start.

Include Agents in QA Planning

Involving agents early helps uncover unclear standards, tough scenarios, and effective ways to gather feedback. This shifts QA from feeling like a disciplinary tool to becoming a resource for professional growth. As Robert Half explains: "Transform customer service QA from something agents dread into something that helps them improve"[2].

One effective strategy is self-assessment – encouraging agents to review and score their own interactions before a manager steps in[2]. When agents identify their own areas for improvement, they tend to be less defensive and more receptive to coaching. Open calibration sessions can also be a game-changer, where both agents and reviewers discuss scoring discrepancies together. This collaborative effort aligns everyone on what quality service truly means[4].

Considering that only 12% of administrative and customer support leaders in 2026 report having the resources and skills for priority projects[2], empowering agents to take ownership of their development becomes even more critical.

Set Clear and Measurable Standards

After agents contribute to shaping the QA process, defining precise metrics ensures everyone knows what’s expected. Vague criteria like "be professional" or "show empathy" can leave agents guessing. Instead, use clear, measurable behaviors. For instance, instead of saying "resolve the issue", ask: Did the agent provide a complete solution in one interaction? Or replace "be empathetic" with: Did the agent acknowledge the customer’s frustration?

Assigning weights to different criteria can also clarify priorities. For example, you might allocate 50% of the score to issue resolution, 30% to tone and empathy, and 20% to process compliance[1]. A simple scoring system can further enhance clarity: a 5 for exceeding expectations (like resolving the issue and offering a helpful tip), a 3 for meeting standards, and a 1 for an inadequate or inaccurate response[1].
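To make the weighting concrete, here is a minimal Python sketch that combines per-criterion ratings into one score. The 50/30/20 split and the 1–5 scale come from the example above; the criterion keys and the function name `weighted_qa_score` are illustrative assumptions, not part of any particular QA tool.

```python
# Weights from the example split above; the key names are hypothetical.
WEIGHTS = {"resolution": 0.50, "tone_empathy": 0.30, "process": 0.20}


def weighted_qa_score(ratings: dict, weights: dict = WEIGHTS) -> float:
    """Combine per-criterion ratings (1-5 scale) into one weighted score."""
    if set(ratings) != set(weights):
        raise ValueError("ratings must cover exactly the weighted criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    for name, value in ratings.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} rating {value} is outside the 1-5 scale")
    # The result stays on the same 1-5 scale because the weights sum to 1.
    return sum(weights[name] * ratings[name] for name in weights)


# An agent who exceeded expectations on resolution and met the standard elsewhere.
example = {"resolution": 5, "tone_empathy": 3, "process": 3}
print(round(weighted_qa_score(example), 2))
```

Because the weights sum to 1, the combined score stays on the 1–5 scale, which keeps it easy to explain to agents.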

Train QA Reviewers for Consistent Evaluations

Consistency is crucial in QA. Reviewers using the same rubric should arrive at similar scores for the same interaction. Aim for at least 80% agreement among reviewers on each scoring dimension[1]. Calibration exercises – where multiple reviewers score the same 200 tickets and compare results – can highlight inconsistencies and help refine the rubric’s language[1].
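One simple way to check the 80% target is to compute exact-match agreement per scoring dimension after a calibration exercise. The sketch below assumes each reviewer's scores are stored as lists in the same ticket order. The data layout, reviewer names, and function name are hypothetical, and exact-match agreement is only one of several possible agreement measures.

```python
from itertools import combinations


def agreement_by_dimension(scores: dict) -> dict:
    """Share of identical scores per dimension, over every reviewer pair and ticket.

    `scores[reviewer][dimension]` is a list of scores in the same ticket order.
    """
    reviewers = list(scores)
    if len(reviewers) < 2:
        raise ValueError("need at least two reviewers to measure agreement")
    result = {}
    for dim in scores[reviewers[0]]:
        matches = total = 0
        for a, b in combinations(reviewers, 2):
            for score_a, score_b in zip(scores[a][dim], scores[b][dim]):
                total += 1
                matches += score_a == score_b
        result[dim] = matches / total
    return result


# Two hypothetical reviewers scoring the same five tickets.
calibration = {
    "ana": {"resolution": [5, 3, 3, 1, 5], "tone": [3, 3, 5, 3, 1]},
    "ben": {"resolution": [5, 3, 3, 1, 3], "tone": [3, 5, 5, 1, 1]},
}
rates = agreement_by_dimension(calibration)
needs_rubric_work = [dim for dim, rate in rates.items() if rate < 0.80]
print(rates, needs_rubric_work)
```

Dimensions that fall below the target are the ones whose rubric wording most likely needs tightening.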

AI can also help maintain objectivity. Modern AI-driven sentiment analysis tools can detect tone, empathy gaps, and even condescension with 85–90% accuracy compared to human raters[1]. Let AI handle straightforward, binary checks while reviewers focus on more nuanced assessments. For example, a binary check might simply score 1 if the agent used the customer’s name and 0 if not[1]. Clearly separating objective checks from subjective evaluations keeps scoring fair and is a cornerstone of using AI in customer support.
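A binary check like the name example does not even need a language model. The following sketch uses a plain regular expression. It assumes the first word of the customer's name is the first name, which will not hold for every naming convention, and the function name is illustrative.

```python
import re


def used_customer_name(reply: str, customer_name: str) -> int:
    """Return 1 if the customer's first name appears as a whole word in the reply, else 0."""
    parts = customer_name.split()
    if not parts:
        return 0
    # Assumption: the first word of the name is the one an agent would use.
    pattern = r"\b" + re.escape(parts[0]) + r"\b"
    return 1 if re.search(pattern, reply, flags=re.IGNORECASE) else 0


print(used_customer_name("Hi Maria, thanks for reaching out.", "Maria Lopez"))
print(used_customer_name("Hello, thanks for reaching out.", "Maria Lopez"))
```

The word boundaries stop a reply that mentions "Marianne" from counting as a match for "Maria".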

Running QA Without Overloading Your Team

A well-thought-out QA program should enhance quality without adding unnecessary pressure on your team. If QA feels like just another task piled onto an already full plate, it can have the opposite effect – leading to disengaged agents and a drop in morale.

Provide Real-Time Coaching and Recovery Time

Traditional QA systems often deliver feedback too late to make a meaningful difference in day-to-day operations. Modern AI-driven QA flips this approach. As the Supportbench blog puts it, "Quality stops being a quarterly report and becomes a daily dashboard. You spot problems in hours, not months" [1]. This allows managers to address issues as they come up, rather than waiting for negative feedback from surveys.

Real-time monitoring can uncover patterns – like a noticeable drop in tone scores on Tuesday afternoons – giving managers the opportunity to take immediate action [1]. With this kind of insight, they can adjust workloads, schedule breaks, or provide targeted coaching to prevent burnout. This ensures agents have the breathing room they need, even during demanding times.
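A dashboard pattern such as the Tuesday-afternoon dip can be approximated by averaging tone scores per weekday and part of day. This is a rough sketch: the (timestamp, score) input format, the morning/afternoon split at noon, and the 0.5-point margin are all assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]


def tone_by_slot(scored_tickets):
    """Average tone score per (weekday, part of day) slot."""
    buckets = defaultdict(list)
    for handled_at, tone_score in scored_tickets:
        part_of_day = "afternoon" if handled_at.hour >= 12 else "morning"
        buckets[(WEEKDAYS[handled_at.weekday()], part_of_day)].append(tone_score)
    return {slot: mean(scores) for slot, scores in buckets.items()}


def low_slots(scored_tickets, margin=0.5):
    """Slots whose average sits more than `margin` points below the overall average."""
    overall = mean(score for _, score in scored_tickets)
    averages = tone_by_slot(scored_tickets)
    return sorted(slot for slot, avg in averages.items() if avg < overall - margin)


# Hypothetical tone scores (1-5) for one agent across three days.
sample = [
    (datetime(2025, 3, 3, 10, 0), 4.5),
    (datetime(2025, 3, 3, 15, 0), 4.0),
    (datetime(2025, 3, 4, 9, 30), 4.5),
    (datetime(2025, 3, 4, 14, 0), 3.0),
    (datetime(2025, 3, 4, 16, 0), 2.5),
    (datetime(2025, 3, 5, 14, 0), 4.5),
]
print(low_slots(sample))
```

A flagged slot is a prompt for a conversation about workload or breaks, not a verdict on the agent.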

Real-time feedback also lays the groundwork for automation that lightens the workload further.

Automate QA Tasks with AI Tools

Real-time insights are just the beginning. Automation takes things a step further by handling repetitive QA tasks at scale. For instance, while a human QA review might cost around $4.00 per ticket, AI can score a ticket for roughly $0.03, making it possible to evaluate a much larger volume of interactions at a fraction of the cost [1].
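The arithmetic behind that comparison is easy to check. The sketch below uses the per-ticket costs quoted above. The monthly volume of 10,000 tickets and the 5% manual sampling rate are hypothetical inputs to replace with your own numbers.

```python
HUMAN_COST_PER_TICKET = 4.00  # per-ticket cost quoted above for human review
AI_COST_PER_TICKET = 0.03     # per-ticket cost quoted above for AI scoring


def monthly_qa_cost(ticket_volume: int, coverage: float, cost_per_ticket: float) -> float:
    """Cost of reviewing `coverage` (0-1) of the month's tickets."""
    if not 0 <= coverage <= 1:
        raise ValueError("coverage must be between 0 and 1")
    return ticket_volume * coverage * cost_per_ticket


volume = 10_000  # hypothetical monthly ticket volume
human_sampled = monthly_qa_cost(volume, 0.05, HUMAN_COST_PER_TICKET)  # assumed 5% sample
ai_full = monthly_qa_cost(volume, 1.0, AI_COST_PER_TICKET)            # every ticket
print(f"Human review of 5%: ${human_sampled:,.2f}")
print(f"AI review of 100%: ${ai_full:,.2f}")
```

Under these assumptions, scoring every ticket with AI costs less than manually reviewing a 5% sample.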

Platforms like Supportbench use AI to automatically assess tone, completeness, and adherence to processes across all tickets. Instead of relying on random sampling, human reviewers can focus on exceptions – like unusually short interactions, reopened cases, or tickets flagged for quality concerns. AI handles routine checks, such as verifying a customer’s identity, while human reviewers focus on more nuanced tasks, like evaluating whether an agent went above and beyond. As the Supportbench blog explains, "AI QA doesn’t replace human reviewers. It changes what they review and why" [1].
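Exception-based review can be expressed as a handful of rules applied to every ticket. The sketch below is not how Supportbench or any other platform implements it. The `Ticket` fields, the thresholds, and the reason labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    ticket_id: str
    agent_messages: int      # number of replies the agent sent
    reopened: bool
    ai_quality_score: float  # hypothetical 1-5 score from the automated pass


def review_reasons(ticket: Ticket, min_messages: int = 2, score_floor: float = 3.0) -> list:
    """List the reasons, if any, a ticket should go to a human reviewer."""
    reasons = []
    if ticket.agent_messages < min_messages:
        reasons.append("unusually short")
    if ticket.reopened:
        reasons.append("reopened")
    if ticket.ai_quality_score < score_floor:
        reasons.append("low AI score")
    return reasons


def human_review_queue(tickets: list) -> dict:
    """Map ticket IDs to review reasons, skipping tickets with no flags."""
    queue = {}
    for ticket in tickets:
        reasons = review_reasons(ticket)
        if reasons:
            queue[ticket.ticket_id] = reasons
    return queue


tickets = [
    Ticket("T-1", agent_messages=4, reopened=False, ai_quality_score=4.6),
    Ticket("T-2", agent_messages=1, reopened=False, ai_quality_score=4.1),
    Ticket("T-3", agent_messages=3, reopened=True, ai_quality_score=2.4),
]
print(human_review_queue(tickets))
```

Recording the reason alongside each flagged ticket tells the reviewer what to look for and gives the agent context for the feedback.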

With tools like GPT-4 or Claude, AI scoring costs range between $0.01 and $0.05 per ticket [1]. To ensure accuracy, start by testing AI on 200 previously human-scored tickets and refine the system until AI and human scores align more than 80% of the time [1]. Gradually roll out AI, allowing reviewers to compare AI-generated and human scores over 2–3 months to build confidence in the system.
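The validation step can be written as a simple gate: compare AI and human scores ticket by ticket, then check both the sample size and the alignment rate. The 200-ticket minimum and the 80% threshold come from the guidance above. Treating "aligned" as an exact match (with an optional tolerance) is an assumption, as are the function names.

```python
def alignment_rate(human_scores: list, ai_scores: list, tolerance: int = 0) -> float:
    """Share of tickets where the AI score is within `tolerance` of the human score."""
    if len(human_scores) != len(ai_scores) or not human_scores:
        raise ValueError("score lists must be non-empty and the same length")
    hits = sum(abs(h - a) <= tolerance for h, a in zip(human_scores, ai_scores))
    return hits / len(human_scores)


def ready_for_rollout(human_scores: list, ai_scores: list,
                      min_tickets: int = 200, threshold: float = 0.80) -> bool:
    """True only with enough validated tickets and alignment above the threshold."""
    if len(human_scores) < min_tickets:
        return False
    return alignment_rate(human_scores, ai_scores) > threshold


# Hypothetical validation set: the AI matches the human score on 170 of 200 tickets.
human = [3] * 200
ai = [3] * 170 + [4] * 30
print(alignment_rate(human, ai), ready_for_rollout(human, ai))
```

If the gate fails, the usual next step is to adjust the scoring prompt or rubric wording and rerun the same validation set.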

Maintain Standards Without Micromanaging

Effective QA doesn’t mean hovering over every interaction. A balanced approach ensures quality while respecting agents’ independence. When expectations are clear and agents have the tools they need, they can take ownership of their work without constant oversight. For example, if an agent consistently meets resolution goals and maintains strong tone scores, minor workflow variations become less of a concern.

AI-powered dashboards give managers a big-picture view of patterns, such as dips in quality at specific times or areas where additional training is needed, without requiring them to micromanage every ticket [1]. Structured QA programs that include weekly coaching can improve consistency within just 4–6 weeks [5].

Using QA Data to Motivate and Develop Agents

When QA data is used thoughtfully, it becomes more than a performance metric – it transforms into a powerful tool for growth. Instead of focusing solely on catching errors, QA programs can shift to celebrating progress, recognizing achievements, and fostering skill development. This approach not only motivates agents but also opens doors to long-term career opportunities.

Recognize and Reward Performance Gains

Generic praise doesn’t cut it. Agents thrive when recognition is specific and meaningful. Use QA data to highlight concrete achievements, like resolving a tricky technical issue, maintaining flawless policy compliance for an extended period, or consistently excelling in empathy scores [6][7]. This kind of targeted acknowledgment helps agents understand exactly what they’re doing right, encouraging them to replicate those behaviors.

Public recognition can amplify this impact. Whether it’s a shoutout in team meetings or a spot on gamified leaderboards, celebrating milestones – like an agent’s first perfect score on a challenging ticket – boosts morale and engagement [6][7]. Some teams even introduce point systems tied to QA metrics, adding an element of fun and competition.

For top performers, consider offering autonomy-based rewards. Options like choosing preferred shifts, taking on special projects, or selecting tasks empower agents and build trust. These rewards not only acknowledge excellence but also set the stage for collaborative learning through peer coaching.

Set Up Peer Coaching Programs

Your most skilled agents are already a treasure trove of knowledge. With insights from QA data, you can identify those who excel in specific areas – whether it’s technical precision, empathy, or managing escalations – and involve them in peer coaching.

Peer coaching works best when it’s structured around real learning opportunities. AI tools can flag standout interactions or tricky cases for group review, turning them into teachable moments. Instead of relying on theoretical scenarios, agents can analyze real-world examples, fostering a collaborative learning environment. Encourage agents to first review their own interactions using QA scorecards; this self-assessment makes coaching discussions more productive and less intimidating.

Building a library of exemplary interactions identified through QA can further enhance learning. These “gold-standard” examples serve as templates for what excellent service looks like across various situations. As Justin Robbins, Founder & Principal Analyst at Metric Sherpa, puts it:

"The best contact centers treat QA as a team effort. QA analysts, coaches, and supervisors collaborate to set shared performance standards, identify gaps, and run post-training reinforcement" [8].

This collective approach not only strengthens individual skills but also builds a sense of shared expertise, paving the way for career advancement.

Connect QA Performance to Career Advancement

QA excellence should lead to bigger opportunities. Clear career paths – like moving from customer service roles to positions such as Quality Analyst, Trainer, Team Lead, or Workforce Management – give agents a tangible goal to work toward [9]. Make these pathways visible so agents understand how their efforts today can shape their future.

Advancement isn’t just about hitting high QA scores; it’s about expanding influence. Junior agents focus on their own tasks, mid-level agents mentor peers, and senior agents drive larger initiatives or even company-wide strategies [10]. QA data can help identify those ready for the next step, offering them chances to broaden their impact.

Encourage agents to document their wins, peer support efforts, and standout moments. These records become invaluable during promotion discussions [9]. It’s worth noting that 63% of workers leave jobs due to a lack of growth opportunities [7]. Aligning QA outcomes with career development can improve both operational performance and employee retention.

For example, TTEC, a global leader in customer experience, boasts a 4.1 out of 5 employee satisfaction rating on JobStreet by prioritizing internal mobility and development [9]. They recognize that by 2026, career growth will increasingly depend on how well agents collaborate with AI tools. Skills like AI-assisted knowledge searches, sentiment analysis, and digital fluency will become essential, while empathy, judgment, and critical thinking will make agents indispensable as AI handles routine tasks [9].

Updating Your QA Program Over Time

QA isn’t something you can set up once and forget about. Customer expectations and priorities change quickly. In fact, 99% of customer service experts agree that customer expectations are higher now than ever [11]. To keep your QA program effective and fair, treat it as an evolving process that adapts to real feedback and performance data.

Collect Regular Feedback from Agents

Agents are on the front lines of QA, so their feedback is invaluable. Schedule regular sessions – weekly or bi-weekly – to gather actionable insights. Collaborative review sessions allow teams to discuss edge cases and clarify vague criteria in a relaxed setting. These discussions often reveal areas where scoring standards might be unclear or where evaluators interpret guidelines inconsistently.

During training, hold open Q&A sessions to address any confusion about new QA standards so agents can resolve ambiguities before those standards are finalized. For AI-driven QA, validate accuracy before rollout by testing the AI on at least 200 previously human-scored tickets [1]. Then establish a feedback loop in which human reviewers compare their scores with AI outputs for 2–3 months, aiming for at least 80% alignment [1]. These steps help catch potential biases and ensure the AI doesn’t miss important nuances.

These feedback mechanisms are essential for refining your QA processes over time.

Refine QA Processes Based on Data and Feedback

Use the feedback and data you’ve gathered to adjust your QA measures so they stay aligned with your business goals and customer needs. Review your QA processes every 3 to 6 months to reflect shifting priorities. For example, a program initially focused on speed might later prioritize first-contact resolution or empathy. Adjust the weight given to different scoring categories accordingly.

Involve a variety of stakeholders in this process. Team leads can share performance insights, QA analysts ensure consistency, and agents offer practical perspectives. Host collaborative workshops – short, 60-minute sessions – to align everyone on what "quality" means before updating scorecard criteria. Before implementing major changes, run pilot programs with a small group (10–20% of agents) for 3–4 weeks. This helps identify unclear criteria or potential friction points.

Replace vague terms like "good communication" with specific, measurable behaviors such as "used jargon-free language" or "confirmed customer understanding before closing." Clearer criteria make scoring more consistent and give agents concrete goals to aim for. As HelpDesk puts it, "Expectations are an ever-moving target, so your team and approach must continuously adapt to meet them" [11]. By refining your QA program based on real-time data and feedback, you can maintain both quality and team morale.

Conclusion

A poorly designed QA program can do more harm than good, undermining both morale and performance. The real value of QA lies in shifting the focus from punishment to growth – using evaluations to build skills, address process gaps, and support your team. When agents feel QA is there to help them improve rather than just catch their mistakes, it leads to better performance and higher engagement.

Traditional QA programs often rely on random sampling, reviewing only 2–5% of tickets. But AI-powered QA changes the game by offering full visibility into performance trends. This approach frees up human reviewers to focus on providing targeted coaching and making nuanced decisions, such as identifying early signs of burnout or spotting training needs more quickly.

For a successful QA program, start with clear, collaboratively developed rubrics and use data to deliver specific, actionable feedback. For instance, instead of saying, "Be more empathetic", you could highlight, "Your tone scores on refund tickets are 20% below the team average." This kind of precise feedback helps agents understand exactly where to improve. Celebrate performance improvements publicly, link QA outcomes to career growth, and treat quality monitoring as an ongoing process rather than a quarterly task. With AI tools, this becomes even easier, as they seamlessly integrate into your workflows.
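A statement like "20% below the team average" is a relative gap between two means. The sketch below shows one way to compute it. The sample scores and the function name are hypothetical, and the message template simply mirrors the example wording above.

```python
from statistics import mean


def gap_vs_team(agent_scores: list, team_scores: list) -> float:
    """Agent mean relative to the team mean, as a signed percentage."""
    team_avg = mean(team_scores)
    return (mean(agent_scores) - team_avg) / team_avg * 100


# Hypothetical tone scores (1-5) on refund tickets.
agent_refund_tone = [3.0, 3.4]
team_refund_tone = [4.0, 4.2, 3.8]

gap = gap_vs_team(agent_refund_tone, team_refund_tone)
direction = "below" if gap < 0 else "above"
print(f"Your tone scores on refund tickets are {abs(gap):.0f}% {direction} the team average.")
```

Scoping the comparison to one ticket type, as here, keeps the feedback specific enough to act on.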

Modern platforms like Supportbench combine automated scoring with targeted coaching, offering features like sentiment analysis, predictive CSAT, and first-contact resolution detection – all without hidden costs or per-seat fees. These tools help keep your team motivated, supported, and focused on delivering top-notch customer experiences.

Of course, even the best QA programs need regular updates. Collect feedback from agents, refine processes every three to six months, and ensure automated scoring aligns with human judgment to maintain trust. A well-executed QA program doesn’t just improve numbers – it fosters a culture where quality and morale thrive together.

FAQs

How do I roll out AI QA without agents feeling surveilled?

To introduce AI-powered QA in a way that avoids agents feeling like they’re under constant surveillance, focus on open communication, teamwork, and showcasing its advantages. Make it clear that AI is there to enhance performance and pinpoint areas for growth – not to micromanage. Engage agents by breaking down how AI scoring works and explaining its role in personal development. Incorporate AI-driven insights into coaching sessions to encourage progress, highlighting both strengths and opportunities. When AI is presented as a tool for support, it helps build trust and keeps morale high.

What QA metrics should we prioritize for our support team?

When it comes to quality assurance, there are a few metrics that deserve special attention: resolution quality, tone, accuracy, and process adherence.

- Resolution quality ensures that customer issues are effectively addressed.

- Tone – which includes empathy and professionalism – helps maintain a positive customer experience.

- Accuracy is all about providing correct information and solutions.

- Process adherence ensures that team members follow established protocols.

These metrics not only reflect customer satisfaction but also ensure compliance with operational standards.

Leveraging AI tools to evaluate these areas can make assessments more consistent and objective. This approach can also create a fairer and more supportive environment for your team.

How do we validate AI QA scores against human reviews?

To ensure AI quality assurance (QA) scores align with human evaluations, calibration sessions play a key role. During these sessions, teams review and discuss sample interactions, ironing out inconsistencies and setting clear expectations.

AI systems can also identify unusual patterns or anomalies, flagging them for human review. This creates a feedback loop where AI and human assessments are regularly compared.

By routinely revisiting scoring criteria and involving key stakeholders in the calibration process, organizations can maintain consistency between AI evaluations and human standards. This approach supports fairness and accuracy in assessments over time.
