AI can be fast, but it’s not always accurate. In high-stakes B2B workflows, one error can cost thousands or damage relationships. That’s where Human-in-the-Loop (HITL) comes in – a system where humans oversee and refine AI outputs, ensuring better accuracy, consistency, and personalization.

Key Benefits of HITL:

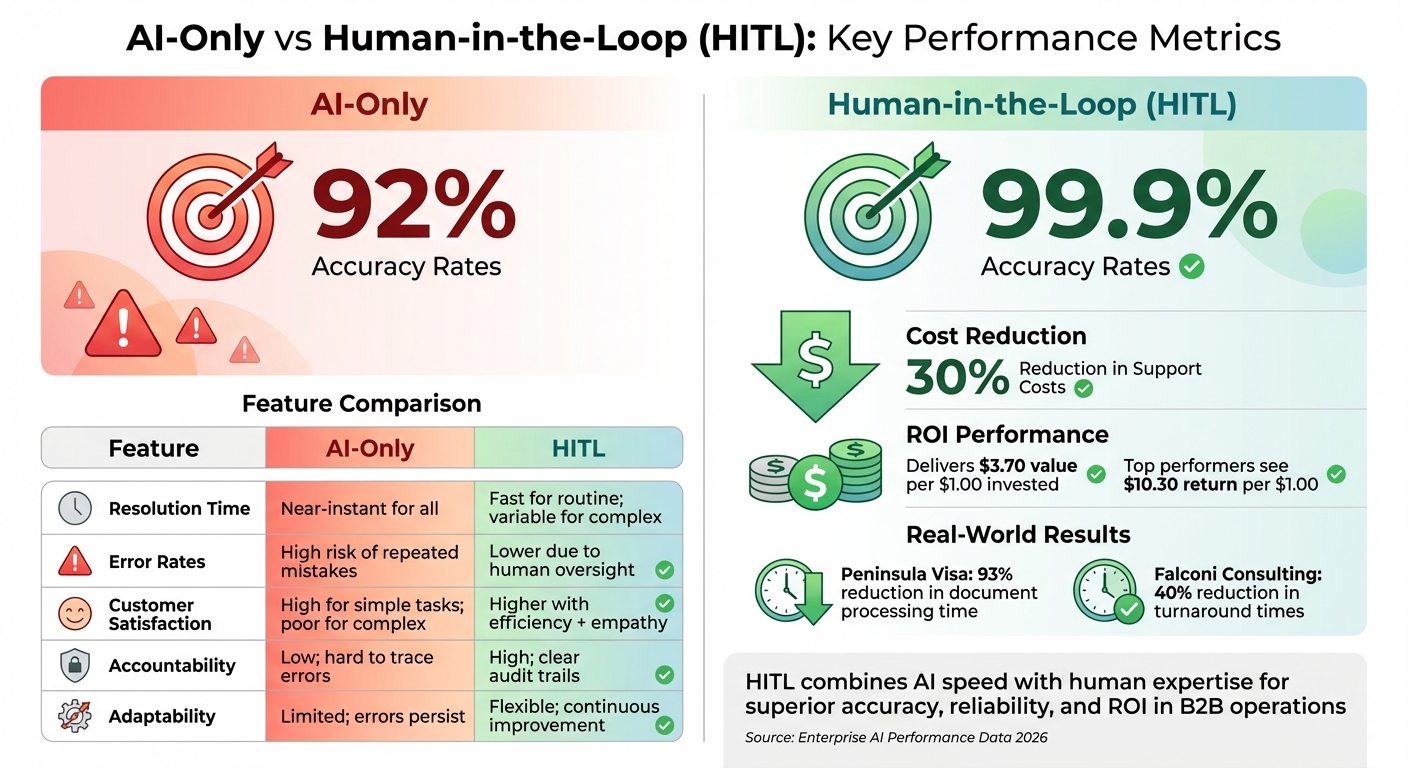

- Accuracy Boost: HITL workflows achieve 99.9% accuracy in document extraction, compared to 92% for AI-only systems.

- Error Prevention: Human oversight intercepts mistakes before they escalate.

- Cost Efficiency: Reduces long-term costs by avoiding expensive errors.

- Improved Customer Experience: Combines AI speed with human empathy and context.

By flagging uncertain AI outputs for human review, HITL creates a feedback loop where human corrections improve AI over time. This balance allows AI to handle repetitive tasks while humans focus on complex cases. Companies like Peninsula Visa have cut processing times by 93% using HITL, showing how it enhances both efficiency and reliability in B2B support workflows.

AI-Only vs Human-in-the-Loop Systems: Performance Comparison



What Is Human-in-the-Loop AI?

Human-in-the-Loop (HITL) is a system where human oversight is integrated into AI workflows, especially for decisions with higher stakes or uncertainty [1]. Instead of allowing AI to operate entirely independently, HITL ensures that outputs flagged as uncertain or risky are reviewed and adjusted by human agents. This approach allows AI to handle repetitive, high-volume tasks like data processing and pattern recognition, while humans step in for decisions requiring judgment, context, or accountability. HITL is especially important in maintaining quality and reliability in AI-driven B2B support operations.

HITL does not have to slow automation down. Confidence-based routing concentrates human involvement where it is needed most: high-confidence AI predictions (typically 90% or above) are processed automatically, while outputs with confidence between 50% and 89% are flagged for human review [1]. Reviewer corrections are then fed back into training, so the AI learns from thousands of decisions and the need for manual intervention gradually falls [9].

"Human-in-the-loop is not a limitation or a compromise. It is a design pattern that makes AI systems more capable, more reliable, and more trustworthy." – HumanOps Team [1]

The true strength of HITL lies in its ability to minimize risk. Fully automated systems can repeat errors at scale, potentially causing widespread issues before anyone intervenes [4]. HITL acts as a safeguard, preventing these large-scale failures. For example, Peninsula Visa achieved a 93% reduction in document processing time, while Falconi Consulting cut turnaround times by 40%, both by using HITL workflows. These systems allowed AI to handle routine tasks while routing complex cases to human staff for resolution [9]. This balance between automation and human input is what makes HITL so effective.

Core Principles of HITL

HITL operates on three foundational principles: collaboration, feedback loops, and continuous improvement.

- Collaboration: Humans and AI work together as a team. AI takes care of repetitive, high-volume tasks, while humans handle edge cases, ambiguous scenarios, and decisions requiring empathy or compliance expertise.

- Feedback Loops: Every human intervention becomes a learning opportunity for the AI. When a human corrects an AI-generated response, that correction is logged and used to refine the system, whether by adjusting prompts, modifying confidence thresholds, or improving decision-making accuracy [9].

- Continuous Improvement: Over time, as the AI learns from human input, the need for manual reviews decreases. Organizations monitor override rates to determine when the AI needs recalibration. The aim isn’t to eliminate human involvement entirely but to ensure that human expertise is focused where it matters most.
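The override-rate monitoring mentioned in the principles above could look roughly like the following. This is a minimal sketch: the `OverrideMonitor` class, the rolling window size, and the 20% recalibration trigger are all illustrative assumptions, not values from any cited source.

```python
from collections import deque


class OverrideMonitor:
    """Tracks how often reviewers override the AI over a rolling window."""

    def __init__(self, window: int = 200, recalibrate_above: float = 0.20):
        # Both defaults are illustrative assumptions, not recommended values.
        self.outcomes = deque(maxlen=window)
        self.recalibrate_above = recalibrate_above

    def record(self, overridden: bool) -> None:
        self.outcomes.append(overridden)

    def override_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_recalibration(self) -> bool:
        return self.override_rate() > self.recalibrate_above


monitor = OverrideMonitor(window=10)
for overridden in [False, False, True, False, True, True, False, False, False, False]:
    monitor.record(overridden)

print(monitor.override_rate())        # 3 of the 10 reviews were overrides
print(monitor.needs_recalibration())
```

A rolling window keeps the signal recent, so an old batch of corrections does not mask a new drift in AI quality.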

AI-Only vs. HITL: Key Differences

The principles behind HITL highlight its advantages over fully automated systems. Here’s how the two approaches compare:

| Feature | AI-Only (Fully Automated) | Human-in-the-Loop (HITL) |
|---|---|---|
| Resolution Time | Near-instant for all tasks | Fast for routine tasks; variable for complex cases |
| Error Rates | High risk of repeated mistakes or failures [4] | Lower due to human oversight |
| Customer Satisfaction | High for simple tasks; poor for complex or emotional issues | Higher, blending efficiency with human empathy |
| Accountability | Low; errors can be hard to trace | High; human reviews create clear audit trails [9] |
| Adaptability | Limited; errors persist without retraining [9] | Flexible; improves continuously through feedback [9] |

Benefits of HITL for B2B Support Operations

HITL (Human-in-the-Loop) systems bring a unique advantage to B2B support by addressing two critical challenges: maintaining accuracy at scale and managing costs without compromising quality. Unlike fully automated systems, which can magnify errors across thousands of cases, HITL minimizes the impact of mistakes. This is particularly important in B2B settings, where errors – like sending incorrect contract terms or mishandling a technical escalation – can strain or even jeopardize valuable relationships.

By reducing the risks tied to automation, HITL also delivers long-term savings. As the HumanOps Team puts it:

"The marginal cost of human review is almost always less than the expected cost of the errors it prevents."[1]

In industries like finance or healthcare, where compliance is non-negotiable, the cost of a regulatory misstep far outweighs the expense of human validation. Additionally, HITL workflows generate audit trails, documenting every human intervention. These records can be pivotal during disputes or regulatory reviews, turning what might have been one-off fixes into long-term assets[9]. This combination of accuracy, tailored customer experiences, and cost management makes HITL a powerful tool for B2B support.

Better Accuracy and Consistency

Human oversight ensures that AI outputs meet the high standards required in B2B operations. Whether it’s reviewing contracts, troubleshooting technical issues, or interpreting policies, human involvement acts as a safeguard for critical decisions. This extra layer of review catches errors that AI might miss, like misjudging sentiment, misusing technical terms, or violating policy guidelines[1].

Confidence-based routing streamlines this process. AI assigns confidence scores to its outputs, and responses below a set threshold (e.g., 70%) are flagged for human review[7][10]. High-confidence outputs proceed automatically, while uncertain cases are escalated to humans. To maintain consistency, standardized rubrics score outputs on factors like accuracy, policy compliance, and clarity[7].

The impact of structured HITL workflows can be significant. For instance, when Peninsula Visa adopted this approach in February 2026, they cut document processing times by 93% by routing exceptions to humans while allowing AI to handle routine tasks[9]. Over time, continuous feedback from human reviewers improves AI performance. As Norbert Sowinski of All Days Tech explains:

"The goal is not ‘add humans everywhere.’ The goal is operational reliability: reduce defects and policy violations while using feedback to steadily shrink manual volume over time."[7]

In mature HITL setups, AI can autonomously handle up to 95% of cases, leaving only the most complex 5% for human intervention[1].

More Personalized Customer Interactions

HITL doesn’t just improve accuracy – it also enhances how businesses engage with their customers. AI often lacks the context needed for nuanced interactions, such as understanding company history, internal terminology, or the finer details of ongoing deals. Human reviewers fill this gap by adding "context injection", ensuring responses are relevant and aligned with the company’s voice[11]. They also refine AI-generated drafts, adjusting tone and language to maintain a personable and professional touch[5][11].

The "bookend" approach is becoming increasingly popular. Here, human expertise is applied at the start to define strategies and at the end for quality assurance, while AI manages predictable tasks in the middle[11]. For example, Falconi Consulting implemented this workflow in early 2026, using role-based task routing to cut operational turnaround times by 40%[9].

Lower Costs Through Task Optimization

HITL optimizes costs by focusing human effort where it has the most impact. AI takes care of repetitive tasks like data entry, ticket categorization, and initial draft creation, while humans handle complex cases requiring judgment or empathy. This balance prevents costly mistakes, whether from over-relying on AI or paying humans to do tasks better suited for automation.

By combining AI-first labeling with human corrections, organizations can reduce manual effort by 70% to 80% compared to fully manual processes[10]. Confidence gating ensures that only uncertain or high-risk tasks are escalated for human review, making the process more efficient[7][10]. Batch reviewing similar flagged items further optimizes human involvement[7].

Over time, feedback loops refine AI performance, reducing the need for human intervention. Modern systems even allow agents to queue tasks and move on to other work while waiting for human input[1][7][9]. Chris Kontes, Co-Founder of Balto, highlights the value of this approach:

"Fully autonomous systems can scale quickly, but when they fail, they fail at scale. Human-in-the-loop automation reduces that exposure by applying human judgment precisely where risk, compliance, and customer experience demand it."[4]

How to Integrate HITL Workflows in B2B Support

To successfully integrate Human-in-the-Loop (HITL) workflows into B2B support, you need clear processes for routing, feedback, and role definition. The aim is to let AI handle repetitive, predictable tasks while reserving human effort for complex or sensitive situations. This requires systems that maintain context, track decisions, and improve through structured feedback. Without these elements, teams risk inefficiencies or losing the quality improvements HITL is designed to bring.

A good starting point is confidence-based routing. For example, flag AI outputs that fall below a 70% confidence threshold for human review, while allowing high-confidence results to proceed automatically [9][7]. This prevents reviewers from being bogged down with routine tasks, enabling them to focus on cases that need human insight. To ensure effective reviews, provide full context – this includes original inputs, AI outputs, customer history, and any relevant documents [9].
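One way to picture the "full context" requirement is as a single review packet that travels with each flagged output. The sketch below assumes a hypothetical `ReviewPacket` structure; the field names are illustrative, and only the 70% threshold comes from the example above.

```python
from dataclasses import dataclass, field

REVIEW_THRESHOLD = 0.70  # the 70% example threshold from the text


@dataclass
class ReviewPacket:
    """Everything a reviewer needs in one place (field names are hypothetical)."""
    original_input: str
    ai_output: str
    confidence: float
    customer_history: list[str] = field(default_factory=list)
    documents: list[str] = field(default_factory=list)


def needs_human_review(packet: ReviewPacket) -> bool:
    # Outputs below the threshold go to a reviewer; the rest proceed automatically.
    return packet.confidence < REVIEW_THRESHOLD


packet = ReviewPacket(
    original_input="Can we extend our contract by six months?",
    ai_output="Yes, extensions are available under section 4.2.",
    confidence=0.62,
    customer_history=["Renewed in 2024", "Open billing dispute"],
    documents=["msa.pdf"],
)
print(needs_human_review(packet))
```

Bundling the context with the output means the reviewer never has to reconstruct it from separate systems.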

Structured feedback is another critical piece. Instead of relying on unstructured notes, use standardized codes like INCORRECT, INCOMPLETE, or UNSAFE to categorize issues [7]. Pair these with timestamps and reviewer IDs to support compliance and refine the system over time [9][8]. These practices not only streamline operations but also help maintain the high-quality standards expected in B2B support.
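A structured feedback record along these lines might be modeled as follows. This is a sketch only: the decision codes are the three named above, while `ReviewFeedback`, `record_feedback`, and the ID formats are hypothetical.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from enum import Enum


class DecisionCode(str, Enum):
    INCORRECT = "INCORRECT"
    INCOMPLETE = "INCOMPLETE"
    UNSAFE = "UNSAFE"


@dataclass(frozen=True)
class ReviewFeedback:
    case_id: str
    reviewer_id: str
    code: DecisionCode
    reviewed_at: str  # ISO 8601 timestamp in UTC


def record_feedback(case_id: str, reviewer_id: str, code: str) -> ReviewFeedback:
    # DecisionCode(code) raises ValueError for anything outside the agreed codes,
    # which keeps free-form notes out of the structured log.
    return ReviewFeedback(
        case_id=case_id,
        reviewer_id=reviewer_id,
        code=DecisionCode(code),
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )


entry = record_feedback("case-1042", "rev-07", "INCOMPLETE")
print(asdict(entry))
```

Rejecting unknown codes at write time is a deliberate choice here: it keeps the log easy to aggregate later.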

QA Scoring with Human Review

AI can generate initial quality scores, but human oversight ensures fairness and accuracy. While AI evaluates tone, completeness, and adherence to guidelines, it often misses subtleties like how well responses align with customer history. Human reviewers act as a safeguard, adjusting scores and giving feedback to improve AI’s future performance.

To keep reviews consistent, use standardized rubrics that define what constitutes an acceptable response in areas like correctness, completeness, and brand tone [7][8]. For high-volume workflows, a two-stage review system works well: a quick triage phase for basic approve/reject decisions, followed by a deeper review for complex or borderline cases [7]. Regular calibration sessions help reviewers stay aligned on rubric interpretations, reducing inconsistencies across the team [7][8].
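The rubric and two-stage review described above can be sketched as a weighted score followed by a triage decision. The weights, the 1-5 rating scale, and the cutoffs below are assumptions chosen for illustration; a real team would set them during calibration.

```python
# Assumed weights for the three rubric areas named in the text.
RUBRIC_WEIGHTS = {"correctness": 0.5, "completeness": 0.3, "brand_tone": 0.2}


def rubric_score(ratings: dict[str, int]) -> float:
    """Weighted score on a 1-5 scale from per-criterion reviewer ratings."""
    missing = RUBRIC_WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing rubric criteria: {sorted(missing)}")
    return sum(RUBRIC_WEIGHTS[name] * ratings[name] for name in RUBRIC_WEIGHTS)


def triage(score: float, approve_at: float = 4.0, reject_below: float = 2.5) -> str:
    """Stage one: quick approve/reject; borderline cases go to a deeper review."""
    if score >= approve_at:
        return "approve"
    if score < reject_below:
        return "reject"
    return "deep_review"


strong = rubric_score({"correctness": 5, "completeness": 4, "brand_tone": 4})
borderline = rubric_score({"correctness": 3, "completeness": 3, "brand_tone": 4})
print(triage(strong), triage(borderline))
```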

Escalation Management: AI Flags, Humans Resolve

Building on quality assurance, effective escalation management uses AI to flag high-priority cases while humans handle resolution. AI can identify issues needing escalation through sentiment analysis, risk signals, and policy flags. For example, it can detect safety concerns, legal risks, or major customer satisfaction issues. Escalations should be categorized by priority: P0 for critical issues like safety or legal risks, P1 for customer-facing quality problems, and P2 for lower-risk internal matters [7][8]. This approach ensures human resources are directed toward the most impactful cases.
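As a rough illustration of the P0/P1/P2 split, the function below maps AI-raised flags to a priority. The flag names (`"safety"`, `"legal"`) and the `customer_facing` input are hypothetical stand-ins for whatever a real policy engine emits.

```python
from enum import IntEnum


class Priority(IntEnum):
    P0 = 0  # safety or legal risk
    P1 = 1  # customer-facing quality problem
    P2 = 2  # lower-risk internal matter


def classify_escalation(flags: set[str], customer_facing: bool) -> Priority:
    # Safety and legal flags always win, regardless of who sees the output.
    if flags & {"safety", "legal"}:
        return Priority.P0
    if customer_facing:
        return Priority.P1
    return Priority.P2


print(classify_escalation({"legal"}, customer_facing=False).name)
print(classify_escalation({"tone"}, customer_facing=True).name)
print(classify_escalation(set(), customer_facing=False).name)
```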

Dynamic service-level agreements (SLAs) further enhance this process. For instance, if a customer is nearing a renewal, the system can tighten SLAs to prioritize quicker responses and improve their experience. When escalations occur, agents need access to the full interaction history, including AI interpretations and prior decisions, to avoid uninformed actions [9]. By using dynamic SLAs and asynchronous task queues, you can prevent bottlenecks and ensure timely human intervention [1][7].
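A dynamic SLA rule of this kind can be sketched in a few lines. The 60-day renewal window and the halving of the response target are assumptions made for the example, not figures from the cited sources.

```python
from datetime import date, timedelta


def sla_hours(base_hours: float, renewal_date: date, today: date,
              window_days: int = 60, tighten_factor: float = 0.5) -> float:
    """Return the response-time target in hours, tightened near renewal.

    window_days and tighten_factor are illustrative assumptions.
    """
    days_to_renewal = (renewal_date - today).days
    if 0 <= days_to_renewal <= window_days:
        return base_hours * tighten_factor
    return base_hours


today = date(2026, 3, 1)
print(sla_hours(8, renewal_date=today + timedelta(days=30), today=today))   # inside the window
print(sla_hours(8, renewal_date=today + timedelta(days=200), today=today))  # outside the window
```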

Sentiment Analysis and Response Editing

AI can analyze customer sentiment and draft responses, but human editors are essential for fine-tuning. AI may identify whether a customer feels frustrated, satisfied, or neutral and generate a response based on historical data and knowledge base content. However, it often lacks the context needed for a personalized reply. Human reviewers step in to add this context, adjust tone, and refine language to address the customer’s concerns directly.

This step is particularly important in B2B settings, where even small missteps – like overly casual language with a formal client – can harm trust. Balancing AI’s efficiency with human personalization is key to meeting the high expectations of B2B customers.

How to Implement HITL in AI-Native Platforms

To implement Human-in-the-Loop (HITL) workflows in AI-native platforms, you need a structured process that blends automation with human expertise. Platforms like Supportbench simplify this by offering built-in tools for confidence gating, routing, and feedback capture, removing the need for custom IT development.

Start by defining confidence thresholds to determine when AI can act independently and when human review is necessary. Set an upper threshold (U) for auto-approvals and a lower threshold (L) for auto-rejections, leaving a "gray band" in between that requires human oversight. For example, responses with 90% confidence or more could be auto-approved, while those below 50% are rejected outright. Anything in between would be flagged for human review. Running the AI in shadow mode can help fine-tune these thresholds safely [7][12].
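The upper/lower threshold scheme above can be sketched as a three-way gate, with a small shadow-mode helper that only counts where outputs would have gone. The function names are hypothetical; the 90% and 50% defaults are the example values from the paragraph above.

```python
def gate(confidence: float, upper: float = 0.90, lower: float = 0.50) -> str:
    """Three-way gate: auto-approve at or above U, auto-reject below L, else human review."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if lower >= upper:
        raise ValueError("lower threshold must be below upper threshold")
    if confidence >= upper:
        return "auto_approve"
    if confidence < lower:
        return "auto_reject"
    return "human_review"  # the gray band


def shadow_mode_report(confidences: list[float], **thresholds: float) -> dict[str, int]:
    """Count where each output would have gone, without acting on any of them."""
    counts = {"auto_approve": 0, "human_review": 0, "auto_reject": 0}
    for confidence in confidences:
        counts[gate(confidence, **thresholds)] += 1
    return counts


print(shadow_mode_report([0.97, 0.91, 0.74, 0.55, 0.42]))
print(shadow_mode_report([0.97, 0.91, 0.74, 0.55, 0.42], upper=0.95, lower=0.60))
```

Comparing reports for different threshold pairs shows how much review volume each choice would create before anything is switched on.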

"Ship the loop, then let the loop ship better decisions."

– Arafat Tehsin [12]

Next, configure routing rules based on specific triggers like validator failures, policy flags, customer tiers, or new customer intents. Pair these rules with structured feedback mechanisms, requiring reviewers to use standardized decision codes, timestamps, and reviewer IDs [7][9][12].

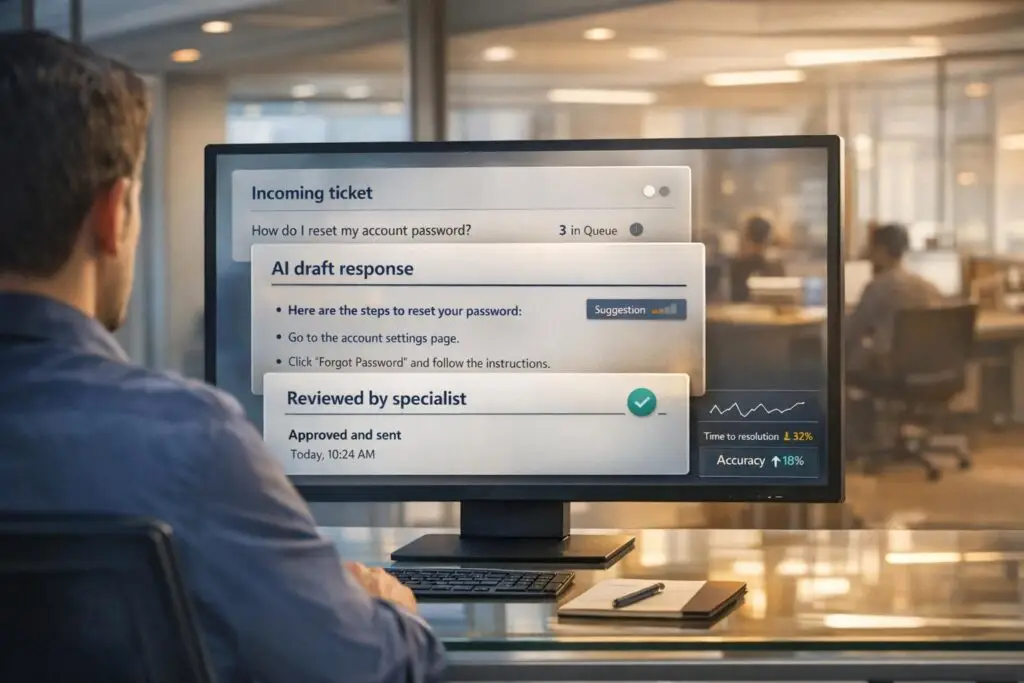

Configuring AI and Human Collaboration

AI-native platforms come equipped with tools like case summaries, predictive CSAT, sentiment analysis, and escalation workflows. The trick is to position human oversight gates at key decision points. For instance, AI might draft a response using knowledge base content and customer history, but a human reviewer ensures accuracy and appropriateness before it’s sent to the customer [2]. This "pre-decision approval" system minimizes errors while maintaining efficiency.

Supportbench includes AI features like ticket summaries, resolution suggestions, knowledge base searches, and QA insights. The platform can flag cases with low predicted CSAT scores for immediate human intervention, helping to prevent escalations. Dynamic SLAs further optimize operations by tightening response times for high-priority cases, such as those involving customers nearing renewal. This ensures human resources are focused where they’re needed most, maintaining quality through strategic involvement.

To maintain accuracy, audit 5–10% of high-confidence outputs to monitor errors (escape rate) and make adjustments to thresholds [2][7]. These measures create a balance between automation and cost-effective case management.
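The audit step can be sketched as random sampling plus a simple ratio. Here "escape rate" is taken to mean the share of audited, auto-approved cases that turn out to be wrong; the function names and the seeded sampling are assumptions for the example.

```python
import random
from typing import Optional


def sample_for_audit(case_ids: list[str], rate: float = 0.05,
                     seed: Optional[int] = None) -> list[str]:
    """Pick a random share of auto-approved cases for human audit."""
    if not 0.0 < rate <= 1.0:
        raise ValueError("rate must be in (0, 1]")
    size = max(1, round(len(case_ids) * rate)) if case_ids else 0
    return random.Random(seed).sample(case_ids, size)


def escape_rate(audit_results: dict[str, bool]) -> float:
    """Share of audited auto-approved cases that turned out to be wrong."""
    if not audit_results:
        return 0.0
    return sum(audit_results.values()) / len(audit_results)


cases = [f"case-{i}" for i in range(200)]
audited = sample_for_audit(cases, rate=0.05, seed=1)
# Pretend the audit found one wrong answer among the sampled cases.
audit_results = {case_id: False for case_id in audited}
audit_results[audited[0]] = True
print(len(audited), escape_rate(audit_results))
```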

Automating Case Prioritization and FCR Detection

Automation also plays a key role in prioritizing cases and identifying First Contact Resolution (FCR) opportunities. AI can automatically tag cases by intent, assign issue types, and detect whether a response fully addresses all customer concerns, streamlining FCR detection [7][9].

Priority-based routing ensures critical cases are quickly assigned to the right people. For instance, risk-based prioritization can categorize cases into tiers: P0 for safety or legal issues, P1 for customer-facing concerns, and P2 for lower-risk internal matters [7]. To avoid bottlenecks, asynchronous task queues allow AI to continue processing other cases while waiting for human input [1][7].

HITL Workflow Stages and Roles

Clearly defined roles at each stage of the workflow prevent confusion and ensure smooth operations. Below is an outline of how AI and humans collaborate across the support process, along with the tools involved:

| Workflow Stage | AI Role | Human Role | Tools/Features Involved |
|---|---|---|---|
| Ingestion & Triage | Categorizes intent, tags priority, and detects FCR opportunities. | Reviews novel intents or out-of-distribution signals. | Predictive CSAT, Sentiment Analysis, Intent Tagging. |
| Response Generation | Drafts case summaries and suggested replies based on the KB. | Edits for tone, accuracy, and policy compliance. | Case Summaries, Response Editor, Confidence Gating. |
| Escalation Management | Flags high-risk cases or validator failures. | SMEs resolve complex technical issues. | Escalation Workflows, Policy Engine, SME Queues. |
| Feedback & Learning | Aggregates override data to refine model prompts. | Provides rationale and decision codes for overrides. | Audit Logs, Decision Codes, Retraining Pipeline. |

Triage Reviewers handle quick classifications like approving, rejecting, or escalating cases [7]. Quality Reviewers focus on detailed edits to ensure factual accuracy, completeness, and proper tone [7]. Specialists (SMEs) address high-risk, sensitive, or regulated cases where AI lacks the necessary context or policy understanding [1][7]. A Queue Lead oversees operational health, ensuring SLA compliance, managing staff, handling backlogs, and conducting reviewer calibration sessions [7].

"HITL is an improvement loop: decisions become signals to improve prompts, validators, routing, evaluation, and training."

– Norbert Sowinski [7]

For high-stakes decisions, adopt a proposer and confirmer model. Here, one person proposes an action, and another confirms it before execution. This two-step process adds a safety layer for decisions with significant financial or customer impact [12]. To minimize delays, design reviewer interfaces to be keyboard-friendly and display compact diffs of changes [12].
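The proposer and confirmer model can be sketched as an action object that refuses to execute until a second person has confirmed it. The class and method names are hypothetical, and the rule that the confirmer must differ from the proposer is an assumption that follows from "another confirms it".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProposedAction:
    description: str
    proposed_by: str
    confirmed_by: Optional[str] = None

    def confirm(self, reviewer: str) -> None:
        # The confirmer must be a different person from the proposer.
        if reviewer == self.proposed_by:
            raise PermissionError("proposer cannot confirm their own action")
        self.confirmed_by = reviewer

    def execute(self) -> str:
        if self.confirmed_by is None:
            raise RuntimeError("action has not been confirmed")
        return f"executed: {self.description}"


action = ProposedAction("issue $12,000 credit to account 881", proposed_by="alice")
action.confirm("bob")
print(action.execute())
```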

Common Challenges and How to Avoid Them

HITL models aim to merge AI’s efficiency with human judgment, but several challenges can disrupt the process. These include automation bias, the "human cleaner" problem, lack of structured feedback, fragmented context, and unclear accountability.

Automation bias is when human reviewers overly trust AI outputs, failing to challenge errors [8][6]. The "human cleaner" problem arises when AI frequently produces messy outputs, forcing human teams to spend their time fixing errors rather than focusing on oversight, ultimately negating the return on investment [6].

One major issue is failing to capture human corrections as structured data. Without proper feedback mechanisms, the AI keeps making the same mistakes because it doesn’t learn from human interventions [7][9]. Another challenge is context fragmentation, where reviewers lack access to vital information like client history, past decisions, or relevant policies. This often leads to inconsistent or improvised choices [9]. Finally, opaque accountability poses risks. In workflows without a clear decision owner, errors can slip through because no one is held responsible for the final output [8][6]. These issues highlight the importance of well-defined roles and robust feedback systems in HITL workflows.

Fixing AI Misinterpretations

AI hallucinations are a growing concern. For instance, GPT-4 has a hallucination rate of 28.6%, which, while better than GPT-3.5’s 39.6%, still poses risks [2]. In 2024, 47% of enterprise AI users reported making significant business decisions based on hallucinated content, and 39% of AI-powered customer service bots were either withdrawn or reworked due to errors [2].

To combat this, confidence-based routing and automated validators are key. Secondary AI checks can catch schema errors, missing citations, or restricted content before a human even reviews the flagged issue, reducing manual workloads [7].
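The sketch below shows what pre-review validators for those three failure types might look like. It uses simple rule-based checks rather than a secondary AI model, and the JSON shape (`reply` field), the citation pattern, and the restricted-term list are all assumptions for illustration.

```python
import json
import re

RESTRICTED_TERMS = {"guaranteed refund", "legal advice"}  # hypothetical policy list
CITATION_PATTERN = re.compile(r"\[\d+\]")                  # matches markers like "[3]"


def validate_draft(raw: str) -> list[str]:
    """Return a list of validator failures; an empty list means the draft passed."""
    try:
        draft = json.loads(raw)
    except json.JSONDecodeError:
        return ["schema: not valid JSON"]
    if not isinstance(draft, dict) or not isinstance(draft.get("reply"), str):
        return ["schema: missing string field 'reply'"]

    failures = []
    reply = draft["reply"]
    if not CITATION_PATTERN.search(reply):
        failures.append("citation: no source reference found")
    lowered = reply.lower()
    for term in sorted(RESTRICTED_TERMS):
        if term in lowered:
            failures.append(f"restricted: contains '{term}'")
    return failures


print(validate_draft('{"reply": "Per the SLA [2], response time is 4 hours."}'))
print(validate_draft('{"reply": "You get a guaranteed refund."}'))
```

Drafts that fail any check can be routed straight to a reviewer along with the failure list, so the reviewer knows where to look first.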

Using structured decision codes like "INCORRECT", "INCOMPLETE", or "UNSAFE" instead of free-form notes ensures feedback is actionable for prompt adjustments without requiring lengthy explanations [7]. Additionally, maintaining context is crucial – reviewers should have access to the original input, the AI’s interpretation, and the customer’s history within a single workspace [9]. Structured HITL workflows that automatically escalate exceptions to the right staff can further streamline the process and reduce manual reviews.

Balancing Automation and Human Input

Striking the right balance between automation and human oversight is essential. Risk-based allocation helps by assigning low-risk tasks to auto-approval workflows with periodic audits, while high-risk actions require explicit human sign-off [7][6]. For example, sampling just 5% of total outputs or 10% of edge cases for human audits can be more efficient than reviewing every interaction [6].

"The AI handles scale, the human handles irreversibility."

– Murtaza Chowdhury, AI Product Leader, Amazon [8]

Training reviewers to critically evaluate AI outputs is equally important to avoid complacency [8][6]. Tasks should be categorized by decision reversibility – AI can handle reversible tasks with minimal oversight, but irreversible actions, such as account suspensions or financial transactions, should always require human approval [8]. A case in point: Falconi Consulting reduced turnaround times by 40% in early 2026 by using automated workflows with role-based task routing, ensuring AI-flagged issues reached the right human expert immediately [9].
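Categorizing by reversibility can be reduced to a small policy function. In this sketch the two irreversible actions are the examples from the paragraph above; the action identifiers and the `high_risk` flag are hypothetical.

```python
# Examples of irreversible actions taken from the text; identifiers are hypothetical.
IRREVERSIBLE_ACTIONS = {"account_suspension", "financial_transaction"}


def approval_policy(action: str, high_risk: bool = False) -> str:
    """Map an action to the oversight it needs."""
    if action in IRREVERSIBLE_ACTIONS or high_risk:
        return "human_sign_off"
    # Reversible, low-risk work proceeds automatically and is audited by sampling.
    return "auto_approve_with_audit"


print(approval_policy("ticket_tagging"))
print(approval_policy("account_suspension"))
```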

Preventing Escalation Bottlenecks

To keep workflows efficient, it’s critical to prevent delays and ensure timely human intervention. An asynchronous architecture can help by allowing AI agents to post tasks and move on to other work, rather than idling while waiting for human input [1]. A prioritized queue system can further streamline reviews, with lanes such as P0 for safety or legal issues, P1 for customer-facing concerns, and P2 for internal or low-risk tasks [7]. This ensures that the most critical issues are addressed first.
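A prioritized review queue of this shape can be sketched with Python's standard `heapq` module. The `ReviewQueue` class is hypothetical; posting returns immediately, which stands in for the asynchronous hand-off, and a counter keeps arrival order within each lane.

```python
import heapq
import itertools


class ReviewQueue:
    """Priority queue for review tasks: P0 before P1 before P2, FIFO within a lane."""

    LANES = {"P0": 0, "P1": 1, "P2": 2}

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._counter = itertools.count()  # tie-breaker that preserves arrival order

    def post(self, task_id: str, lane: str) -> None:
        # The AI agent posts and returns immediately; it does not wait for review.
        heapq.heappush(self._heap, (self.LANES[lane], next(self._counter), task_id))

    def next_task(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


queue = ReviewQueue()
queue.post("t1", "P2")
queue.post("t2", "P0")
queue.post("t3", "P1")
queue.post("t4", "P0")
order = [queue.next_task() for _ in range(4)]
print(order)
```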

Setting clear SLAs for "time-to-first-review" and "time-to-resolution" can prevent backlogs from growing [7]. Additionally, a kill switch can halt auto-approvals if the rate of errors reaching customers suddenly spikes [7]. Escalations can also be routed based on factors like customer health scoring, deal value, or the potential for irreversible harm [8][13]. Regular calibration sessions among reviewers ensure consistency in applying rubrics, which helps maintain support quality across the board [7][8].
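A kill switch along these lines might watch the recent escape rate and stop auto-approvals once it crosses a limit. This is a sketch under stated assumptions: the window size, the rate limit, and the minimum sample count are illustrative, and the class name is hypothetical.

```python
from collections import deque


class KillSwitch:
    """Halts auto-approvals when the recent escape rate crosses a limit."""

    def __init__(self, window: int = 100, max_escape_rate: float = 0.02,
                 min_samples: int = 20):
        # All three defaults are illustrative assumptions.
        self.recent = deque(maxlen=window)
        self.max_escape_rate = max_escape_rate
        self.min_samples = min_samples
        self.tripped = False

    def record(self, escaped: bool) -> None:
        self.recent.append(escaped)
        if len(self.recent) >= self.min_samples:
            if sum(self.recent) / len(self.recent) > self.max_escape_rate:
                self.tripped = True  # stays tripped until a human resets it

    def auto_approval_allowed(self) -> bool:
        return not self.tripped

    def reset(self) -> None:
        self.recent.clear()
        self.tripped = False


switch = KillSwitch(window=50, max_escape_rate=0.05, min_samples=10)
for _ in range(20):
    switch.record(False)
switch.record(True)   # 1 of 21 is under the 5% limit
print(switch.auto_approval_allowed())
switch.record(True)   # 2 of 22 is over the 5% limit
print(switch.auto_approval_allowed())
```

Requiring a manual reset means a person has to look at the spike before automation resumes.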

Conclusion

HITL brings a powerful combination of reliability, precision, and scalability to B2B support. By blending AI’s speed with human expertise and empathy, it consistently outshines fully autonomous systems in performance and customer satisfaction [5][1].

The numbers support this: HITL workflows reach 99.9% accuracy in document extraction, compared to 92% for AI-only systems, and reduce support costs by 30% [2]. Companies adopting HITL early have reported $3.70 in value for every $1.00 invested, with top performers seeing returns as high as $10.30 [2]. Peninsula Visa, for example, cut document processing times by 93% by letting AI handle routine tasks while routing exceptions to human experts [9].

Confidence-based routing further enhances efficiency, enabling AI to manage routine inquiries while reserving human involvement for more complex issues [3]. Meanwhile, structured feedback ensures that human corrections continuously refine AI performance over time [7][9].

These results highlight HITL’s role as a game-changer in modern B2B support.

"The strongest AI systems are not the ones that replace humans. They are the ones that amplify humans while keeping accountability clear." – Shashikant Kalsha, CEO of Qodequay [6]

For B2B support teams, HITL offers a smart path forward – scaling AI capabilities without sacrificing the quality and personal touch that customers expect.

FAQs

How do I choose the right confidence thresholds for human review?

When setting confidence thresholds in a Human-in-the-Loop (HITL) AI system, it’s essential to weigh the risk of errors against the system’s calibration. Auto-approval thresholds generally fall between 80% and 95%, depending on how critical the decision is. Because anything below the threshold goes to a human, a higher threshold means more review.

- Higher thresholds (closer to 95%) are better suited for high-stakes decisions, where even small uncertainties should be routed to a human.

- Lower thresholds (around 80%) are more appropriate for low-risk tasks, where the system can operate with minimal intervention.

It’s crucial to revisit and fine-tune these thresholds regularly, using actual performance data. This helps maintain the right balance between automation and accuracy over time.

Which support tasks should always require human approval?

Support tasks that involve high stakes, irreversible actions, or sensitive data should always have human approval. For instance, approving large refunds, making high-risk decisions, or managing sensitive information are areas where human oversight is crucial. These tasks often come with regulatory requirements or carry significant consequences, so having a person review them helps ensure both accuracy and accountability.

How can we capture human edits so the AI improves over time?

To improve the AI over time, build feedback loops into the HITL workflow. Each time a reviewer corrects or approves an output, log the decision as structured data: the original output, the edited version, a standardized decision code, a timestamp, and the reviewer ID. Those records then drive prompt adjustments, threshold changes, and retraining. The result is better accuracy, fewer repeated mistakes, and an AI that keeps pace with evolving requirements under consistent human supervision.