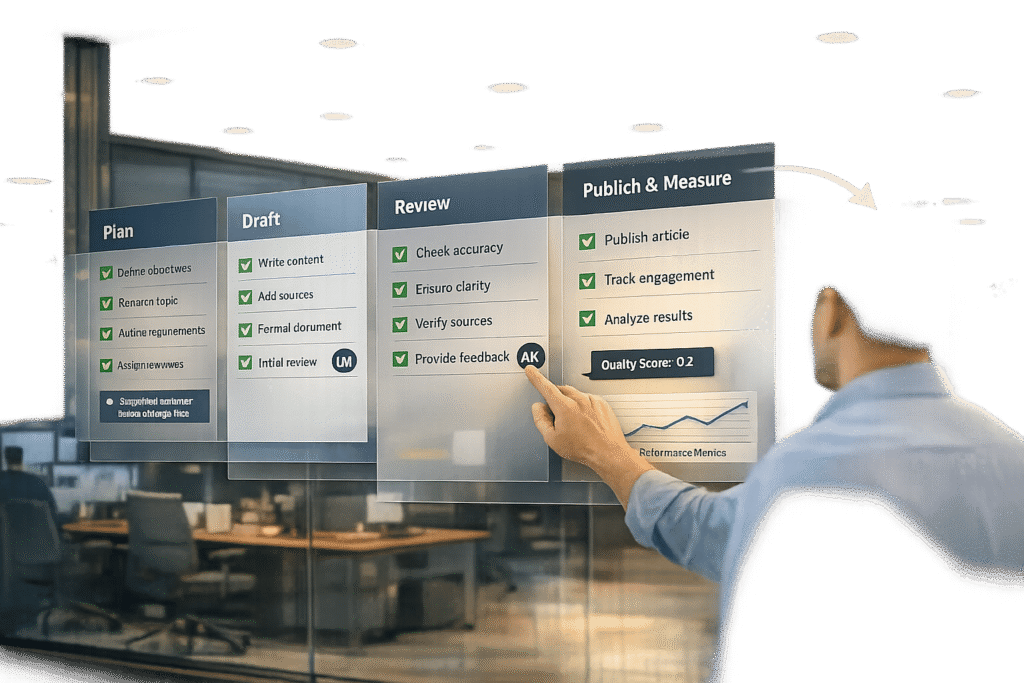

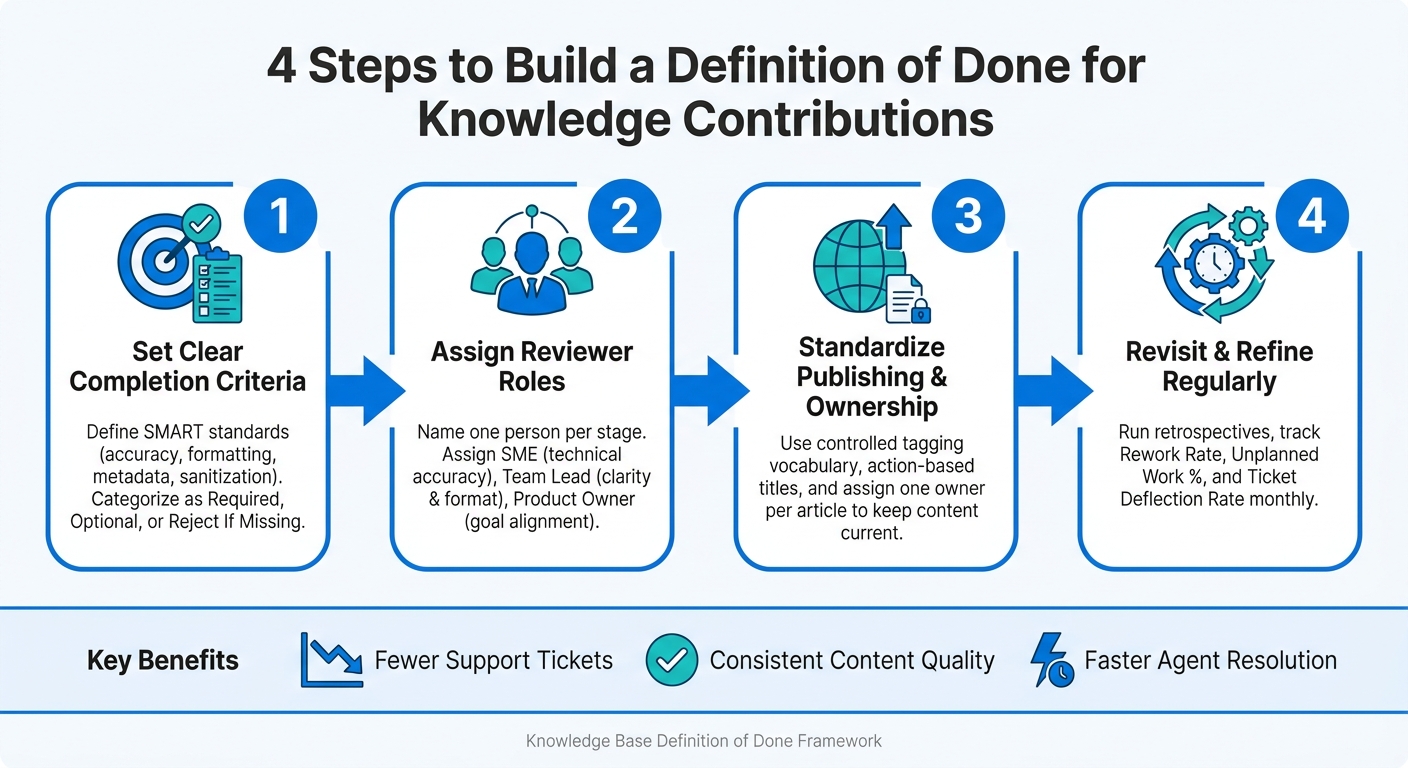

Struggling with inconsistent knowledge base articles? A "Definition of Done" (DoD) can fix that. It’s a checklist that ensures every article meets specific quality standards before being published. This guide explains how to create a DoD for your team to improve content accuracy, consistency, and efficiency. Here’s the process in four steps:

- Set completion criteria: Define clear, specific standards for when an article is considered "done" (e.g., accuracy, formatting, metadata, and privacy checks).

- Assign reviewer roles: Ensure technical reviews, formatting checks, and goal alignment by assigning specific roles.

- Standardize publishing processes: Use templates, improve searchability with consistent tags, and assign ownership for updates.

- Refine regularly: Use feedback, metrics, and retrospectives to adjust your DoD over time.

This framework reduces ticket volumes, keeps content reliable, and boosts team productivity by improving self-service efficiency. Let’s dive into the details.

4-Step Definition of Done for Knowledge Contributions

What is a ‘Definition of Done’ for Knowledge Contributions?

A definition of done (DoD) for knowledge contributions is a shared checklist that defines when a knowledge article is ready for publication. Think of it as a quality checkpoint that every article must pass before it goes live. It keeps articles consistent and sets clear expectations for your team, creating a foundation for the standards discussed later.

In engineering, "done" typically means the code has been tested, reviewed, and is ready for deployment. For knowledge work, however, the focus shifts to ensuring the content is accurate, easy to find, and genuinely helpful. Derek Huether of ALM Platforms explains:

"The definition of done (DoD) is when all conditions, or acceptance criteria, that a software product must satisfy are met and ready to be accepted by a user, customer, team, or consuming system." [2]

For knowledge contributions, the "consuming system" includes your agents and customers. Here, success is measured by how accurate, searchable, and useful the content is.

A knowledge DoD also includes unique elements, such as removing sensitive customer information like names or emails and crafting titles that reflect terms real customers would use. These steps directly impact whether an article effectively reduces support tickets or gets overlooked.

Key Components of a Knowledge Contribution DoD

A strong knowledge DoD focuses on four critical areas, each addressing potential weaknesses in an article.

| DoD Component | Purpose | Example Criteria |
|---|---|---|
| Content Accuracy | Ensures information is reliable and trusted | SME review completed; all technical steps verified |
| Formatting Consistency | Makes articles easy to follow and scan | Approved template used; headings and steps applied |
| Metadata Standards | Improves article discoverability | Relevant tags added; title framed as a question |
| Sanitization | Protects customer privacy | All PII (names, emails, company details) removed |

In addition to these pillars, accountability plays a key role. Each published article should have a designated owner responsible for keeping it up to date. Without this, even the best-written articles can quickly become outdated and unreliable as your product evolves.

That said, the goal isn’t to create an overly complex checklist that slows your team down. Yves Riel of Okapya warns:

"The DoD is a contract between the product owner and the team, so it’s tempting to want to fit as many items in the DoD as possible in order to ensure the quality of the product. But this can backfire." [2]

Start with the essentials that align with your support objectives – such as reducing ticket resolution time, ensuring accuracy, and improving searchability – and expand from there as needed.
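Of the essentials, sanitization is one of the easier criteria to back with an automated pre-check. The sketch below is a minimal illustration, assuming article drafts are available as plain text. The function names and the email pattern are hypothetical, and the pattern is deliberately simple rather than a complete validator. It only catches email addresses, so names and company details still need a human reviewer.

```python
import re

# Assumed pattern: a simple email matcher for illustration,
# not a full address validator.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def find_emails(text: str) -> list[str]:
    """Return every email-like string found in a draft."""
    return EMAIL_RE.findall(text)


def redact_emails(text: str, placeholder: str = "[email removed]") -> str:
    """Replace every email-like string with a neutral placeholder."""
    return EMAIL_RE.sub(placeholder, text)


if __name__ == "__main__":
    draft = "The customer (jane.doe@example.com) could not log in."
    print(find_emails(draft))
    print(redact_emails(draft))
```

A check like this could run when an article is submitted for review, flagging the draft rather than silently rewriting it, so the author confirms each removal.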


Why Support Teams Need a ‘Definition of Done’

When there’s no shared understanding of what “done” means, team members interpret it differently. Some stop after jotting down steps, others wait for peer review, and a few publish immediately to close a ticket. The result is a knowledge base filled with articles of inconsistent quality, which increases ticket volume and wastes agents’ time.

A Definition of Done (DoD) solves this by setting a universal standard – a clear quality checkpoint that distinguishes unfinished work from content that’s truly ready for customers. With a DoD, only content that meets all specified criteria gets published, so both customers and agents have reliable resources. Without this uniformity, several operational headaches emerge.

Common Challenges Without a DoD

Inconsistent standards create confusion for customers and inefficiencies for support teams. Articles vary in structure, terminology, and formatting, leaving customers with a disjointed experience. Meanwhile, agents spend unnecessary time searching for dependable information instead of focusing on resolving tickets.

This issue also leads to what’s known as knowledge debt – similar to technical debt in software development. Over time, incomplete tags, unverified steps, and unassigned articles pile up. By the time these issues become obvious, fixing them often requires significantly more effort than addressing them correctly from the start.

How a DoD Affects Support Metrics

A well-implemented DoD doesn’t just improve content quality – it also enhances overall support performance. It ensures customers can resolve issues on their own more often, reducing ticket submissions. Agents, on the other hand, benefit from easily accessible, well-organized, and up-to-date articles, enabling them to resolve tickets faster. This leads to better first-contact resolution rates and higher customer experience metrics. In short, a DoD makes your support operations more efficient, consistent, and measurable.

Step 1: Set Clear Completion Criteria for Knowledge Articles

The first step in creating an effective Definition of Done is deciding what makes a knowledge article "complete" before you even start writing. Without this clarity, contributors are left to make their own calls, leading to inconsistent quality. To avoid this, define criteria that are Specific, Measurable, Achievable, Relevant, and Time-bound (SMART), ensuring consistency during reviews [4][3].

Start by selecting a standardized template based on the type of article. For instance, troubleshooting articles often work best with the PERC format (Problem, Environment, Resolution, Cause). On the other hand, FAQs are better suited to a straightforward Question-Answer-Overview structure. Pairing a template with an action-based title that clearly states the article’s purpose can improve search relevance and create a consistent tone across your knowledge base.

Categorize Standards: Required, Optional, and Reject If Missing

To prioritize effectively, break down the criteria into three categories.

| Category | Criteria Examples | Impact on Support Metrics |
|---|---|---|
| Required (Must-Have) | Use of PERC or Q&A template; SME-approved content; action-based title; verified sources; relevant internal/external links | Reduces ticket resolution time by providing structured, accurate information |
| Optional (Nice-to-Have) | Multimedia elements like screenshots, GIFs, or videos; links to advanced or related resources | Enhances self-service success by catering to different learning preferences |
| Reject If Missing | Lack of clear resolution; unordered steps in sequential processes; missing metadata or tags; dense "wall of text" without headings or lists | Avoids high ticket volumes caused by confusing or incomplete documentation |

This system separates essential criteria from enhancements and ensures your standards remain practical and actionable.
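To make the three categories concrete, here is a minimal sketch of how a checklist could be evaluated in code. Everything in it is an assumption for illustration: the `Article` fields, the status names, and the crude "resolution" keyword test are hypothetical stand-ins for whatever your knowledge base tool actually exposes.

```python
from dataclasses import dataclass, field


@dataclass
class Article:
    # Hypothetical fields; map these to your own knowledge base schema.
    title: str
    body: str
    tags: list[str] = field(default_factory=list)
    sme_approved: bool = False
    has_media: bool = False


def evaluate(article: Article) -> dict:
    """Sort failed checks into the three DoD categories and derive a status."""
    reject, required, optional = [], [], []

    # Reject If Missing: problems that block publication outright.
    if not article.tags:
        reject.append("missing metadata or tags")
    if "resolution" not in article.body.lower():
        # Crude stand-in for "lack of clear resolution".
        reject.append("no resolution section found")

    # Required: must be satisfied before the article counts as done.
    if not article.sme_approved:
        required.append("SME approval")

    # Optional: suggestions only; they never change the status.
    if not article.has_media:
        optional.append("consider adding a screenshot, GIF, or video")

    if reject:
        status = "rejected"
    elif required:
        status = "incomplete"
    else:
        status = "done"
    return {"status": status, "reject": reject,
            "required": required, "optional": optional}
```

Keeping optional items out of the status calculation mirrors the table: nice-to-have enhancements are reported but never hold up publication.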

Examples of Completion Criteria in Action

A Required criterion could be: "Every troubleshooting article must be approved in writing by a Subject Matter Expert (SME) before publication." Meanwhile, a Reject If Missing rule might state: "Sequential steps must be presented as a numbered list. Articles with unordered steps in a process will be rejected." These guidelines help prevent the release of instructions that are unclear or difficult for customers to follow.

"The DoD ultimately represents a quality check for the team by the team." – Sohrab Salimi, CEO, Agile Academy [5]

To tackle jargon, define or link technical terms inline or direct readers to a central glossary. This approach makes articles accessible to users with varying technical knowledge and reduces follow-up tickets caused by confusion. Start small: a basic set of must-have criteria is easier to enforce consistently. Over time, you can expand the list as recurring quality issues are identified [5].

Once you’ve established clear completion criteria, the next step is to assign reviewer roles to ensure these standards are upheld.

Step 2: Define Reviewer Roles and Approval Steps

With criteria in place, decide who checks each article against them, and when.

Even the most well-thought-out criteria can fall apart without a clear review process. A vague directive like "engineering will review this" often leads to no one taking responsibility. As Bildad Oyugi, Head of Content at InstantDocs, explains:

"Saying ‘engineering will review this’ means nobody reviews it. Name one person per stage. That person owns the review." [7]

The fix? Assign a specific individual to each review stage. Don’t leave it to a team or department.

Assign Roles and Responsibilities

Define three key roles in the review process:

- A Subject Matter Expert (SME) to verify technical accuracy.

- A team lead or content specialist to ensure clarity and proper formatting.

- A product owner to confirm the content aligns with support goals [1][3].

For each standard, assign a single criteria owner who has the final say in disputes [2].

One practical tip: limit SME reviews to the technical sections. For smaller edits, like fixing typos or updating images, skip the full SME review and route those changes directly to the team lead [7].

Once roles are clearly outlined, simplify the process by leveraging workflow tools.

Use Tools to Manage the Review Workflow

Workflow tools can make the entire process smoother. Automated systems can move articles through stages – from Draft to Published – without the need for constant manual follow-ups [6].

To keep things organized, use clear status labels like "Approved," "Approved with Changes," or "Needs Revision" to signal an article’s readiness for publishing. Stick to a single feedback channel, such as inline comments within the tool, to avoid juggling edits from multiple sources. Finally, keep review checklists concise – about 8 to 12 items per stage – to ensure quality without turning the process into a mindless box-checking exercise [7].
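If your workflow tool is scriptable, the stages and status labels can be modeled as a small state machine so that articles cannot skip review. The sketch below is one possible arrangement, not a description of any particular tool. The "In Review" stage and the transition rules are assumptions added for illustration.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "Draft"
    IN_REVIEW = "In Review"  # assumed intermediate stage
    APPROVED = "Approved"
    APPROVED_WITH_CHANGES = "Approved with Changes"
    NEEDS_REVISION = "Needs Revision"
    PUBLISHED = "Published"


# Assumed transition rules; adjust them to match your own process.
ALLOWED = {
    Status.DRAFT: {Status.IN_REVIEW},
    Status.IN_REVIEW: {
        Status.APPROVED,
        Status.APPROVED_WITH_CHANGES,
        Status.NEEDS_REVISION,
    },
    Status.APPROVED: {Status.PUBLISHED},
    Status.APPROVED_WITH_CHANGES: {Status.PUBLISHED},
    Status.NEEDS_REVISION: {Status.IN_REVIEW},
    Status.PUBLISHED: set(),
}


def advance(current: Status, target: Status) -> Status:
    """Return the new status, or raise if the move skips a stage."""
    if target not in ALLOWED[current]:
        raise ValueError(
            f"Cannot move from {current.value} to {target.value}"
        )
    return target
```

With these rules, `advance(Status.DRAFT, Status.PUBLISHED)` raises a `ValueError`, which is the point: a draft cannot be published without passing through review.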

Step 3: Set Standards for Publishing, Tagging, and Ownership

After implementing a thorough review process, the next step is to create consistent publishing standards. This ensures your knowledge base is not only accurate but also easy to navigate and reliable. Without clear standards, even the most comprehensive knowledge base can become chaotic and difficult to use.

Improve Searchability with Consistent Tagging

Tagging often gets overlooked, but it’s a game-changer for searchability. When agents are assisting customers in real time, they’re not leisurely browsing – they’re typing quick queries and expecting precise results. Poor tagging can bury relevant articles, making them hard to find.

The solution? Treat tags like a controlled vocabulary, not a free-for-all. Develop a standard set of tags based on areas like product features, customer types, issue categories, and content formats. Stick to this list, and make it a rule that any new tags must be approved before being added. This avoids the mess of having multiple tags for the same concept.
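A controlled vocabulary is straightforward to enforce with a small script. The following sketch assumes a hypothetical approved list and alias map; the tag names are invented for illustration. It folds known synonyms into a single tag and sets anything unrecognized aside for approval instead of adding it silently.

```python
# Hypothetical controlled vocabulary and alias map.
APPROVED_TAGS = {"billing", "login", "troubleshooting", "faq"}
ALIASES = {"sign-in": "login", "signin": "login", "invoices": "billing"}


def normalize_tags(raw_tags: list[str]) -> tuple[list[str], list[str]]:
    """Split tags into (accepted, pending approval) after normalization."""
    accepted, pending = set(), set()
    for tag in raw_tags:
        cleaned = tag.strip().lower()
        # Map known synonyms onto the one approved spelling.
        cleaned = ALIASES.get(cleaned, cleaned)
        if cleaned in APPROVED_TAGS:
            accepted.add(cleaned)
        else:
            pending.add(cleaned)
    return sorted(accepted), sorted(pending)


if __name__ == "__main__":
    print(normalize_tags(["Login", " sign-in ", "Invoices", "dark mode"]))
```

In this example "Login" and "sign-in" collapse into the single tag `login`, while "dark mode" lands in the pending list until someone approves it.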

Titles also play a big role in searchability. Use titles that reflect how customers and agents phrase their questions. For instance, "How do I reset my password?" will outperform something like "Password Management Overview" because it mirrors actual search behavior. Pair this with clear, specific language and place direct answers at the top of your articles to boost both search results and AI retrieval accuracy.

But searchability isn’t the whole story – keeping content relevant requires clear ownership.

Assign Ownership to Keep Articles Current

Without someone responsible for maintaining them, articles quickly become outdated. When this happens, agents lose confidence in the knowledge base, customers hit dead ends, and the entire system becomes more of a problem than a solution.

As DocsBot AI explains:

"A support team scales better when answers live in systems, not in the heads of three reliable people." [8]

To avoid this, assign one owner to each article. This is typically the subject matter expert or team lead most familiar with the topic. Their job isn’t to rewrite the content constantly but to monitor and act on specific triggers. This way, maintenance stays manageable while ensuring nothing falls through the cracks.

Here’s a quick look at common triggers and the actions they require:

| Maintenance Trigger | Action Required |
|---|---|
| Product Release | Update screenshots and labels to reflect new features or interface changes. |
| Support Feedback | Add clarifications or prerequisites based on recurring questions or confusion. |
| Search Log Analysis | Adjust article titles to match the language customers use in their searches. |
| Metric Drop | Review articles with high bounce rates or low engagement to identify gaps or issues. |

Consistent ownership also strengthens your AI-powered tools. Chatbots and AI retrieval systems can only deliver accurate results if the source material is reliable. By keeping articles regularly reviewed and updated, you ensure that your automation tools remain effective and trustworthy.

Step 4: Revisit and Refine the DoD Over Time

A Definition of Done (DoD) isn’t something you set and forget. It’s a dynamic agreement that should adapt as your team evolves, your product develops, and your support needs shift. If it remains static, it will eventually lose touch with how your team operates, leading to gaps that will show up in your metrics.

To keep it relevant, make review cycles a regular part of your team’s routine. Use retrospectives to focus on one crucial question: What slipped through that shouldn’t have? For example, if agents frequently report recurring issues like missing screenshots, unclear instructions, or broken links, it’s a sign your criteria need adjustments.

"A well-defined definition of done also creates consistency across sprints. Each completed task meets the same standard, improving predictability and building confidence in delivery timelines." – Sneha Kanojia, Plane Blog [1]

Gather Feedback from Agents and Customers

Feedback is key to keeping your DoD aligned with reality. Regular evaluation ensures your knowledge base stays accurate and useful. Both agents and customers provide valuable insights, but you need to capture their input systematically.

Agents are your first line of feedback since they interact with the knowledge base daily. They’ll notice when something’s off – like an article that doesn’t address common questions, outdated processes, or tags that lead nowhere. However, this feedback often gets lost in informal channels like Slack or team meetings.

To address this, create a structured process for agents to flag issues. Use dedicated ticket tags or custom fields to mark articles needing review. Assign someone – like the knowledge base (KB) owner – to monitor these flags and prioritize updates weekly. A system like this prevents gaps from quietly accumulating.

Customer behavior also offers critical clues. Look at search queries with no results, high bounce rates on specific articles, and repeated tickets on the same topic. These patterns indicate areas where the knowledge base might not meet your DoD standards. Feed this data directly into your refinement process.
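Zero-result searches are usually the easiest of these signals to pull from a log. The sketch below assumes a hypothetical export format in which each entry is a dictionary with a `query` string and a `results` count. Your search tool’s export will likely look different.

```python
from collections import Counter


def top_zero_result_queries(search_log: list[dict], limit: int = 5) -> list[tuple[str, int]]:
    """Count searches that returned nothing, most frequent first."""
    counts = Counter(
        entry["query"].strip().lower()  # fold case and whitespace variants
        for entry in search_log
        if entry["results"] == 0
    )
    return counts.most_common(limit)


if __name__ == "__main__":
    # Hypothetical log entries for illustration.
    log = [
        {"query": "Reset password", "results": 0},
        {"query": "reset password ", "results": 0},
        {"query": "export csv", "results": 3},
        {"query": "sso setup", "results": 0},
    ]
    print(top_zero_result_queries(log))
```

Each query that shows up repeatedly is a candidate for either a new article or a retitled existing one.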

Track Metrics to Measure DoD Effectiveness

While feedback highlights problem areas, metrics show their scale. Together, they provide a clear picture of where to focus your efforts. Three key metrics to monitor are rework rate, unplanned work percentage, and ticket deflection rate.

- Rework Rate: Tracks how often "done" articles require additional work. A rising rate suggests gaps in your criteria.

- Unplanned Work Percentage: Measures the time spent fixing published content instead of creating new material. Avi Siegel, Co-Founder of Momentum, calls this a "creativity tax" [9], as it drains your team’s capacity.

- Ticket Deflection Rate: Indicates whether your knowledge base is effectively reducing support volume. If deflection rates stay flat despite adding new content, it’s a red flag.

| Metric | What It Tells You | Red Flag |
|---|---|---|
| Rework Rate | Frequency of revisiting "done" articles | Articles repeatedly return to "In Progress" |
| Unplanned Work % | Time spent fixing old content vs. creating new | More time patching than building |
| Ticket Deflection Rate | Effectiveness in reducing support tickets | No change in deflection despite updates |

"If your Definition of Done becomes optional under pressure, it was never a definition in the first place. It was just a wish list." – Avi Siegel, Co-Founder, Momentum [9]

Review these metrics monthly and bring the findings to your retrospectives. When both data and agent feedback point to the same issue, don’t wait – update your DoD right away.
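For the monthly review, the three metrics can be computed from a handful of counts. The formulas below are assumptions, since teams define these metrics differently: rework rate as reopened articles over published articles, unplanned work as fix hours over total content hours, and deflection as self-service resolutions over all resolution attempts. All function and parameter names are hypothetical.

```python
def _ratio(part: float, whole: float) -> float:
    """Divide safely; an empty denominator yields 0.0 instead of an error."""
    return part / whole if whole else 0.0


def rework_rate(reopened: int, published: int) -> float:
    """Assumed definition: share of published articles reopened for fixes."""
    return _ratio(reopened, published)


def unplanned_work_pct(fix_hours: float, new_content_hours: float) -> float:
    """Assumed definition: percent of content time spent fixing old articles."""
    return 100 * _ratio(fix_hours, fix_hours + new_content_hours)


def deflection_rate(self_service_resolutions: int, tickets_created: int) -> float:
    """Assumed definition: share of issues resolved without a ticket."""
    return _ratio(self_service_resolutions,
                  self_service_resolutions + tickets_created)
```

For instance, `rework_rate(5, 50)` gives `0.1` and `unplanned_work_pct(10, 30)` gives `25.0`. Whatever definitions you choose, keep them fixed from month to month so the trend stays comparable.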

Conclusion: Building a Knowledge Contribution Framework That Lasts

A well-defined Definition of Done (DoD) is the cornerstone of producing consistent, high-quality knowledge contributions. It not only cuts down on support tickets but also boosts operational efficiency by streamlining team workflows. With a strong DoD in place, teams can move past debates over whether tasks are "done" and focus on delivering results.

The framework is built on four key principles: clear completion criteria, defined reviewer roles, consistent publishing standards, and regular refinement cycles. These elements work together – completion criteria lose their impact without proper reviews, and ownership without regular updates can lead to outdated content.

A Knowledge Base (KB) owner plays a critical role in keeping content relevant by connecting agent feedback with technical reviews and performance data.

Simplicity is key. A concise DoD with a handful of enforceable criteria is far more useful than an overly detailed checklist that gets ignored when deadlines loom. Start with the basics, establish routines, and expand as your team grows more comfortable. Here’s a quick recap of the essential components of an effective DoD:

| DoD Component | Requirement | Goal |
|---|---|---|
| Title | Action-oriented and clear | Enhance searchability and support user task completion |
| Format | Use templates (e.g., PERC) and lists | Promote consistency and make content easy to scan |
| Review | Approval by a Subject Matter Expert (SME) | Ensure accuracy and build user trust |
| Links | Connect related articles | Offer comprehensive solutions and reduce support inquiries |
| Ownership | Assign a responsible owner/author | Maintain accountability for future updates |

FAQs

What should be included in a knowledge base definition of done?

A solid "definition of done" for a knowledge base ensures that every contribution meets high standards for completeness, accuracy, and usability. Here are the key elements to focus on:

- Thorough content: The entry should fully cover the topic, leaving no important details out.

- Formatting and clarity: Content must follow established guidelines to ensure readability and consistency.

- Reviewed and approved: A reviewer should verify the information for accuracy and reliability before publishing.

- Easy to find and use: Proper tags and keywords should be included to make the content searchable and user-friendly.

- Goal-oriented: Contributions should align with objectives like reducing resolution times and boosting self-service success rates.

By meeting these criteria, each piece of content becomes a valuable and effective part of the knowledge base.

Who must approve an article before it’s published?

An article usually needs a green light from the content team before it is published. This team often includes key roles like the knowledge base owner, product owner, or even members of the Scrum team. In the review process described in Step 2, sign-off comes from a named Subject Matter Expert, a team lead or content specialist, and a product owner. Together, these stakeholders establish the ‘Definition of Done’ – a benchmark that ensures the content is fully complete and meets all necessary criteria before it goes live.

How do we measure if our DoD is working?

To determine if your Definition of Done (DoD) for knowledge contributions is working as intended, check whether published articles consistently meet your standards for accuracy, completeness, and clarity. Then track the metrics from Step 4: rework rate, unplanned work percentage, and ticket deflection rate. Also evaluate how articles influence outcomes like ticket resolution times and self-service success rates. Regularly reviewing content and gathering feedback from both agents and customers shows whether your DoD is hitting the mark.