AI-driven QA tools can transform how you coach customer support agents – but only if used thoughtfully. Here’s what you need to know:

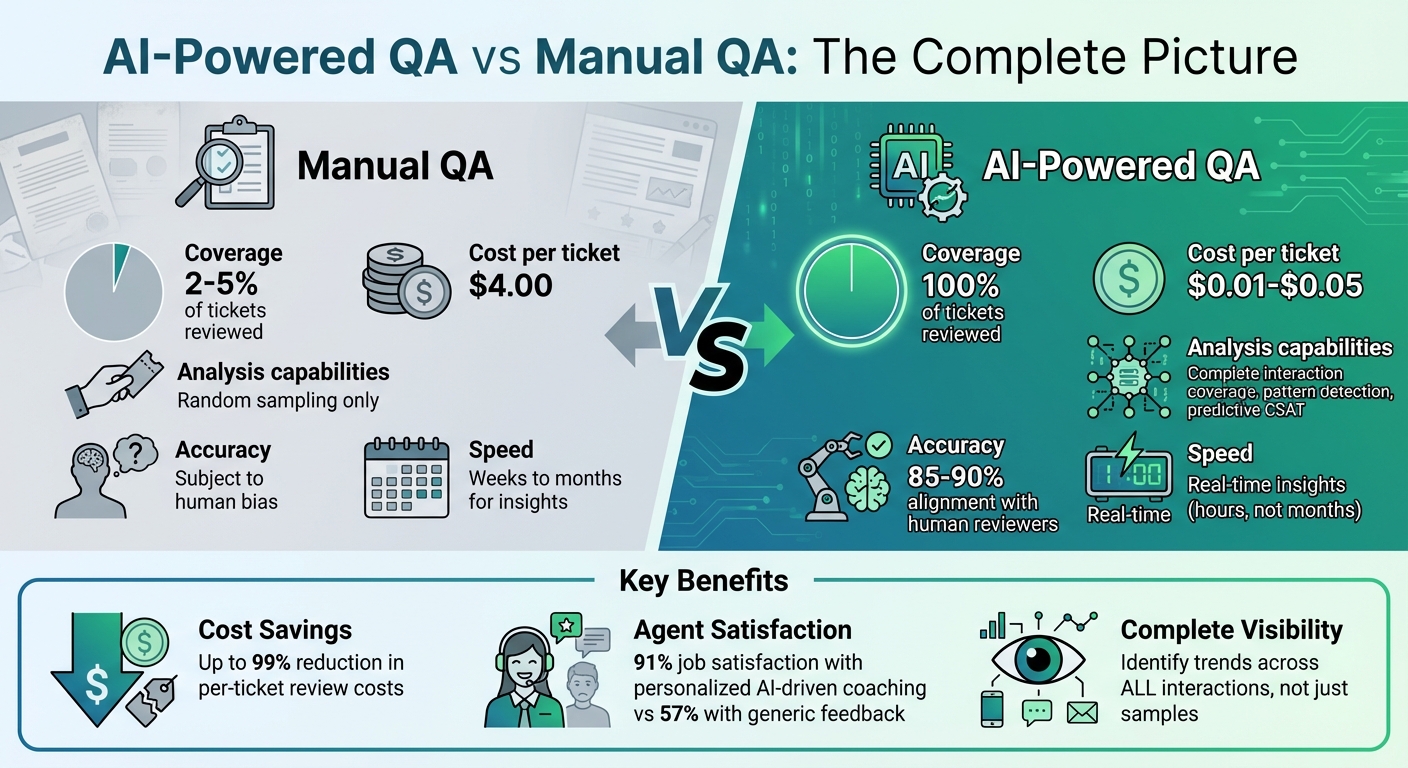

- AI provides 100% visibility into customer interactions, unlike manual QA, which reviews just 2–5%. This allows managers to spot patterns and trends instead of relying on random samples.

- AI tools score tone, accuracy, and sentiment in 85–90% agreement with human reviewers, and can surface actionable signals such as tickets likely to get a poor CSAT score before the survey is even completed.

- Coaching should focus on growth, not punishment. Use AI data to identify trends (e.g., empathy dips on refund tickets) and provide specific, constructive feedback.

- Cost-effective AI solutions make detailed QA affordable, with automated reviews costing $0.01–$0.05 per ticket compared to $4.00 for manual reviews.

To succeed, use AI to identify learning opportunities, prepare balanced feedback with real examples, and deliver supportive one-on-one coaching sessions. The goal is to create a collaborative environment where agents feel empowered to improve, not micromanaged.

AI-Powered QA vs Manual QA: Coverage, Accuracy, and Cost Comparison

Step 1: Collect and Analyze QA Data with AI Tools

To build a coaching program that feels supportive rather than punitive, the first step is to move beyond random sampling to complete interaction coverage. With AI, you can score 100% of your tickets – whether they’re from email, chat, or phone transcripts – and categorize the data into actionable insights [2]. Let’s break down how AI transforms raw data into meaningful coaching tools.

Using AI for Sentiment Analysis and QA Scoring

Modern AI tools evaluate every customer interaction based on specific criteria such as tone (professionalism and empathy), accuracy (factual correctness), completeness (addressing all customer questions), and process adherence (e.g., following identity verification steps or providing reference numbers) [2][4]. These aren’t just surface-level evaluations – AI can identify subtle issues like passive-aggressive language, condescension, or a lack of empathy with 85–90% alignment compared to human reviewers [2].

One standout feature is predictive CSAT. Instead of waiting for survey results, AI can analyze a ticket and predict whether the customer will likely be satisfied. This allows you to address low-scoring tickets immediately, potentially preventing negative feedback from ever occurring [2]. For example, tools like Supportbench offer real-time predictive CSAT and sentiment analysis, helping managers resolve issues while they’re still fresh.
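Vendors don't publish how their predictive CSAT models work, so the following is only a minimal sketch of the general idea. It assumes you have past tickets with per-criterion QA scores and a known survey outcome, and fits a plain logistic regression by gradient descent in pure Python. The feature set, the "CSAT >= 4 means satisfied" cutoff, the 0.5 threshold, and all function names are illustrative assumptions, not Supportbench's or any other tool's method.

```python
import math

def _sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def train_csat_model(features, labels, lr=1.0, epochs=5000):
    """Fit logistic-regression weights with batch gradient descent.

    features: per-ticket QA criteria scores in [0, 1], e.g. [tone, accuracy, completeness]
    labels:   1 if the customer later reported satisfaction (assumed: CSAT >= 4), else 0
    """
    n, k = len(features), len(features[0])
    weights, bias = [0.0] * k, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * k, 0.0
        for x, y in zip(features, labels):
            # Prediction error for this ticket drives the gradient of the log-loss.
            error = _sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j in range(k):
                grad_w[j] += error * x[j]
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

def predict_satisfaction(weights, bias, x) -> float:
    """Estimated probability that the customer on this ticket will be satisfied."""
    return _sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

# Hypothetical history: [tone, accuracy, completeness] and whether CSAT was >= 4.
history = [[0.9, 0.9, 1.0], [0.8, 0.9, 1.0], [0.9, 0.7, 1.0],
           [0.3, 0.8, 0.0], [0.2, 0.4, 0.0], [0.4, 0.5, 1.0]]
satisfied = [1, 1, 1, 0, 0, 0]
w, b = train_csat_model(history, satisfied)

# Score a freshly closed ticket before any survey arrives.
new_ticket = [0.25, 0.6, 0.0]
if predict_satisfaction(w, b, new_ticket) < 0.5:
    print("Low predicted CSAT: review this ticket now")
```

With real data you would train on far more tickets, hold some out to check the model, and likely use an established library rather than hand-rolled gradient descent.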

But scoring alone isn’t enough – organizing and centralizing this data is just as important.

Organizing and Centralizing QA Data

AI doesn’t just score tickets; it also organizes the data and flags anomalies. For instance, it can highlight tickets with unusually low scores, conversations where sentiment dropped halfway through, or cases where high CSAT scores conflict with poor process adherence. It also uncovers trends, like refund-related tickets consistently scoring lower on empathy [2].
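The three anomaly types described above can be expressed as simple rules over scored tickets. This is a hypothetical sketch: the `ScoredTicket` fields, the 0-100 and -1 to 1 scales, and every threshold are assumptions for illustration, and a real tool would likely rely on its own learned signals.

```python
from __future__ import annotations
from dataclasses import dataclass

@dataclass
class ScoredTicket:
    ticket_id: str
    qa_score: float            # overall AI QA score, 0-100
    sentiment: list[float]     # customer sentiment per message, -1.0 to 1.0
    csat: int | None           # survey score 1-5, or None if no survey came back
    process_adherence: float   # 0-100

def flag_anomalies(tickets, low_score=60.0, sentiment_drop=0.5,
                   high_csat=4, poor_process=50.0):
    """Return {ticket_id: [reasons]} for tickets a human reviewer should look at."""
    flagged = {}
    for t in tickets:
        reasons = []
        if t.qa_score < low_score:
            reasons.append("unusually low QA score")
        if len(t.sentiment) >= 2:
            # Compare average sentiment in the first and second halves of the thread.
            half = len(t.sentiment) // 2
            first = sum(t.sentiment[:half]) / half
            second = sum(t.sentiment[half:]) / (len(t.sentiment) - half)
            if first - second >= sentiment_drop:
                reasons.append("sentiment dropped mid-conversation")
        if t.csat is not None and t.csat >= high_csat and t.process_adherence < poor_process:
            reasons.append("high CSAT but poor process adherence")
        if reasons:
            flagged[t.ticket_id] = reasons
    return flagged

tickets = [
    ScoredTicket("T1", 55.0, [0.2, 0.1], None, 90.0),
    ScoredTicket("T2", 85.0, [0.6, 0.5, -0.2, -0.3], 4, 88.0),
    ScoredTicket("T3", 90.0, [0.4, 0.4], 5, 30.0),
    ScoredTicket("T4", 92.0, [0.3, 0.5], 5, 95.0),
]
print(flag_anomalies(tickets))
```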

To make this process seamless, your AI tools should integrate directly with your helpdesk. Supportbench, for example, connects to your support platform to process all tickets closed in the last 24 hours. It pulls in full message threads, metadata, tags, and CSAT scores, ensuring your QA data is always up-to-date and accessible [4]. This automation not only saves time but also reduces costs compared to manual reviews.

Another key step is defining your QA rubrics in plain language. Instead of complex coding, you can create straightforward instructions like "Score 1 if the agent used the customer’s name" or "Flag tickets where the customer’s sentiment worsened during the conversation." The AI then applies these rules consistently across all tickets [2][4]. To ensure accuracy, calibrate the AI by testing it against 200 tickets already scored by humans. Adjust the rubric until the AI achieves over 80% agreement with human scores [2].
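Calibration comes down to comparing the AI's rubric scores with human scores on the same tickets. A minimal sketch, assuming one score per ticket for a single rubric item and using simple percent agreement against the "over 80%" bar; the function names and sample data are hypothetical.

```python
def agreement_rate(ai_scores, human_scores):
    """Fraction of tickets where the AI gave the same rubric score as the human reviewer."""
    if not ai_scores or len(ai_scores) != len(human_scores):
        raise ValueError("expected two non-empty score lists of equal length")
    matches = sum(1 for ai, human in zip(ai_scores, human_scores) if ai == human)
    return matches / len(ai_scores)

def is_calibrated(ai_scores, human_scores, threshold=0.80):
    """True only when agreement is strictly over the threshold."""
    return agreement_rate(ai_scores, human_scores) > threshold

# Hypothetical calibration set: 200 tickets scored by humans on the rule
# "Score 1 if the agent used the customer's name".
human = [1] * 150 + [0] * 50
ai = [1] * 140 + [0] * 10 + [0] * 40 + [1] * 10   # AI disagrees on 20 tickets
print(agreement_rate(ai, human), is_calibrated(ai, human))
```

Percent agreement is easy to explain but does not correct for agreement by chance. If one score dominates (for example, agents almost always use the customer's name), a chance-corrected statistic such as Cohen's kappa gives a stricter view.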

Step 2: Identify Coaching Opportunities from Trends

AI-powered QA shines when it comes to identifying patterns across interactions. Unlike traditional QA, which only samples a small percentage of tickets, AI offers full coverage. This allows you to uncover trends that highlight where coaching can make the biggest difference.

Instead of focusing only on overall scores, dive into specific metrics. For instance, you might notice that refund-related tickets score 20% lower in accuracy – perhaps due to a misunderstood policy change. Or maybe tone scores drop by 15% on Tuesday afternoons, hinting at agent fatigue after a busy start to the week [2]. These patterns help you move away from generic feedback and deliver precise, actionable coaching.
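Spotting patterns like these is essentially a group-by over scored tickets. A minimal sketch, assuming each ticket record is a dict with a segment field (ticket type, weekday, shift) and a metric on a 0-100 scale; the field names and numbers are invented for illustration.

```python
from collections import defaultdict
from statistics import mean

def segment_gaps(records, segment_key, metric):
    """Percent difference between each segment's average metric and the overall average."""
    overall = mean(r[metric] for r in records)
    groups = defaultdict(list)
    for r in records:
        groups[r[segment_key]].append(r[metric])
    return {seg: round(100 * (mean(vals) - overall) / overall, 1)
            for seg, vals in groups.items()}

records = [
    {"ticket_type": "refund", "accuracy": 64},
    {"ticket_type": "refund", "accuracy": 64},
    {"ticket_type": "billing", "accuracy": 96},
    {"ticket_type": "billing", "accuracy": 96},
]
print(segment_gaps(records, "ticket_type", "accuracy"))
```

This compares each segment with the overall average; comparing a segment against all other tickets instead would report a larger gap for the same data, so pick one convention and use it consistently.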

It’s equally important to separate individual challenges from broader, systemic issues. For example, a 25% drop in an agent’s monthly scores might signal burnout or a need for additional training. On the other hand, if an entire team struggles with a specific ticket type, the problem could lie in the process or template, not the agents. AI helps you spot these distinctions, ensuring coaching focuses on personal growth while also addressing operational inefficiencies.
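One rough way to separate the two cases is to ask what share of the team falls below target on the same ticket type. The sketch assumes per-agent average scores for a single ticket type; the 70-point target and the 50% "systemic" cutoff are arbitrary assumptions to tune, and the drop helper simply expresses the 25% month-over-month example as a fraction.

```python
def diagnose(agent_scores, threshold=70.0, systemic_share=0.5):
    """Decide whether low scores on one ticket type look individual or systemic.

    agent_scores: {agent_name: average QA score on that ticket type}
    Returns ("ok" | "individual" | "systemic", sorted list of agents below threshold).
    """
    below = sorted(agent for agent, score in agent_scores.items() if score < threshold)
    if not below:
        return "ok", []
    if len(below) / len(agent_scores) >= systemic_share:
        return "systemic", below   # most of the team struggles: check the process or template
    return "individual", below     # isolated: candidate for one-on-one coaching

def month_over_month_drop(previous, current):
    """Fractional decline in an agent's score, e.g. 0.25 for a 25% drop."""
    return (previous - current) / previous

refund_scores = {"Ana": 62, "Ben": 65, "Chloe": 68, "Dev": 81}
print(diagnose(refund_scores))
print(month_over_month_drop(80, 60))
```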

Additionally, let AI guide your human reviews by flagging anomalies – such as tickets with unusually low scores or sharp declines in customer sentiment. This shifts coaching from a periodic activity to a real-time process, allowing you to address issues within hours rather than waiting months [2].

Step 3: Prepare Balanced Feedback Using Real Examples

Now that you’ve identified trends, it’s time to turn them into actionable feedback. This step involves providing specific, data-backed insights using real examples to guide improvement.

A helpful way to structure feedback is through the SBI Framework – Situation, Behavior, Impact. Start by setting the scene (e.g., "During the March 15th call with customer ID #4782, a billing dispute escalated"). Then, quote the agent’s exact words to highlight the behavior (e.g., "That’s just how our system works") and explain how it missed the mark in addressing the customer’s frustration. Finally, outline the impact, such as how the interaction led to the customer requesting a supervisor. This structured approach ensures clarity, showing both what happened and why it matters.
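If coaching notes are stored alongside QA data, keeping the three SBI parts as separate fields makes it harder to skip the impact or paraphrase the agent's words. A hypothetical sketch using the example above; the `SBIFeedback` class is illustrative, not part of any QA tool.

```python
from dataclasses import dataclass

@dataclass
class SBIFeedback:
    """One coaching note in Situation-Behavior-Impact form."""
    situation: str   # when and where, e.g. call date and customer ID
    behavior: str    # the agent's exact words, quoted from the transcript
    impact: str      # what happened as a result

    def render(self) -> str:
        return (f"Situation: {self.situation}\n"
                f'Behavior: The agent said, "{self.behavior}"\n'
                f"Impact: {self.impact}")

note = SBIFeedback(
    situation="During the March 15th call with customer ID #4782, a billing dispute escalated.",
    behavior="That's just how our system works",
    impact="The customer's frustration grew and they asked for a supervisor.",
)
print(note.render())
```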

Framing Feedback Positively

Begin by acknowledging the agent’s strengths. Use QA data to highlight specific successes and demonstrate how these can be applied in other areas. For instance, if an agent excels in handling billing calls but struggles with technical issues, point out effective behaviors – like addressing the customer by name or explaining next steps clearly – and suggest using those skills in broader contexts.

This balanced approach not only improves performance but also boosts morale. In fact, 2024 data shows that 91% of agents who receive personalized AI-driven coaching report job satisfaction, compared to just 57% for those receiving generic feedback [6]. Recognizing positive behaviors alongside areas for growth creates an environment where agents are more likely to embrace feedback.

Using Historical Cases to Provide Context

Concrete examples from past interactions make feedback more relatable. Instead of vague suggestions like "be more empathetic", review a specific case where the agent successfully reassured a frustrated customer (e.g., "I completely understand why this is frustrating. Let me see what I can do to fix this today"). Then show how that response moved the interaction's metrics, such as its sentiment trend or CSAT score. Reinforcing successful techniques with real-life examples makes the feedback actionable.

Timing is equally important. Deliver feedback within 24 to 48 hours of the interaction to ensure the context is still fresh. AI tools can identify coachable moments in real time, allowing you to reference specific timestamps and details that help the agent recall the situation. This timely delivery transforms feedback sessions into collaborative discussions rather than critiques. As Srikant Narasimhan, VP of Enterprise Customer Experience at CVS Health, said after adopting AI-powered QA in 2024:

"It gives us that credibility using operational data and scale. We don’t need to ask. We know what’s wrong." [6]

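The 24 to 48 hour feedback window described earlier in this step translates into a simple check against the interaction timestamp. A minimal sketch, assuming you store when each flagged interaction happened; the status labels and function name are hypothetical.

```python
from datetime import datetime, timedelta

def feedback_status(interaction_at, now,
                    target=timedelta(hours=24), limit=timedelta(hours=48)):
    """Classify how urgent feedback on an interaction is, using the 24-48 hour window."""
    elapsed = now - interaction_at
    if elapsed <= target:
        return "on track"
    if elapsed <= limit:
        return "due soon"
    return "overdue"

call = datetime(2025, 3, 15, 10, 0)
for hours in (20, 30, 50):
    print(hours, feedback_status(call, call + timedelta(hours=hours)))
```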
Now that you’ve prepared actionable feedback, the next step is delivering these insights effectively during one-on-one coaching sessions.

Step 4: Deliver One-on-One Coaching Sessions

How you deliver feedback matters just as much as the feedback itself. Start by setting clear guidelines about how QA data will be used. Be upfront: AI insights are NOT there to punish agents for small mistakes or directly affect their pay without human review [1]. This kind of transparency builds trust and reinforces the goal – using AI to help agents grow, not penalize them.

Plan coaching sessions to last between 30 and 60 minutes. Stick to a repeatable structure: review performance using QA data, analyze specific examples together, and agree on measurable action steps. Keep the focus narrow – address one or two behavioral themes at a time. This keeps the session focused and productive.

Creating a Collaborative Environment

A good coaching session isn’t about lecturing – it’s about working together. Involve agents in the process by asking for their input. Which examples do they find most relevant? What language feels natural to them when handling customer concerns? When agents help shape the session, it feels more like teamwork and less like criticism.

Make a clear distinction between "learning signals" and "management signals" to ease anxiety. Explain that QA data is there to help develop skills, not to hand out punishments. Rebekah Carter from CX Today emphasizes:

"Learning signals should never feel punitive" [1]

When agents understand that AI scores are there to identify patterns – not pass judgment – they’re more likely to engage. In fact, research shows that focusing on strengths reduces the chance of disengagement to just 1%, compared to a 22% disengagement rate when weaknesses are the main focus [8].

Avoid tactics like public leaderboards or rankings, which can harm motivation and psychological safety. Instead, focus on individual progress and give agents a clear way to challenge or appeal automated QA scores. This reassures them that human oversight is always part of the process. With trust established, you can adapt your coaching to fit each agent’s needs.

Tailoring Coaching to Individual Agents

Everyone learns differently, so adjust your coaching style to match each agent’s preferences and experience level. For hands-on learners, try side-by-side coaching during live calls to offer real-time feedback. Social learners might benefit more from peer mentoring, where top performers share their techniques in a collaborative setting.

The frequency of coaching should also vary based on experience. New or struggling agents may need quick, focused micro-coaching sessions of just 5 to 10 minutes to reinforce specific skills. Seasoned agents, on the other hand, might benefit from monthly deep dives that use conversational analytics to uncover trends in their performance. Whatever the mix, keep a consistent schedule: weekly check-ins to maintain momentum, plus longer reviews for in-depth growth.

Here’s a quick guide to matching coaching methods with learning styles:

| Coaching Technique | Best For | Key Benefit |
|---|---|---|
| Side-by-Side | New hires/onboarding | Builds confidence and develops skills quickly [3] |
| Micro-coaching | High-volume environments | Reinforces learning with frequent, focused sessions [3] |
| Peer Mentoring | Large teams | Fosters community and leadership opportunities [3] |
| AI-Powered | Identifying trends | Reduces bias and automates performance insights [7][3] |

As Cresta explains:

"When agents see coaching as support rather than surveillance, they engage more and improve faster."

Step 5: Track Progress and Build Continuous Improvement

Coaching doesn’t stop when the session ends. The real challenge is tracking whether agents are actually improving. Without measurable data, it’s impossible to confirm progress or make informed adjustments. This step ensures the coaching process remains active and effective.

Setting Measurable Goals After Coaching

Every coaching session should conclude with clear, specific goals that can be measured. Vague objectives like "improve communication" don’t provide enough direction. Instead, focus on actionable targets backed by data. For example, if an agent struggles with tone on refund tickets, set a goal like: "Increase tone scores on refund interactions from 65% to 80% within two weeks."
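A goal like this has four parts worth recording: the metric, a baseline, a target, and a deadline. A minimal sketch, assuming scores on a 0-100 scale and a two-week window starting on an arbitrary date; the `CoachingGoal` class is hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class CoachingGoal:
    metric: str
    baseline: float   # score when the goal was set, 0-100
    target: float     # score the agent is aiming for, 0-100
    deadline: date

    def progress(self, current: float) -> float:
        """Share of the baseline-to-target gap closed so far, clamped to 0-1."""
        gap = self.target - self.baseline
        return max(0.0, min(1.0, (current - self.baseline) / gap))

    def is_met(self, current: float, as_of: date) -> bool:
        return current >= self.target and as_of <= self.deadline

start = date(2025, 3, 31)
goal = CoachingGoal("tone on refund interactions", 65, 80, start + timedelta(days=14))
print(goal.deadline, goal.progress(72.5))   # 2025-04-14 0.5
```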

Concrete goals work best, especially when they can be tracked with AI-powered QA tools. For instance, you can set binary goals – such as verifying customer identity or providing reference numbers – and measure progress through weekly QA check-ins. These yes-or-no metrics are easy for AI to monitor across all interactions, not just a small sample of 2–5% [2][3].
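Because binary checks are simply true or false per interaction, the weekly check-in number is a pass rate over every scored ticket. A minimal sketch, assuming each interaction carries a dict of check results grouped by ISO week; the `verified_identity` key and the dates are made up.

```python
from collections import defaultdict
from datetime import date

def weekly_pass_rates(interactions, check):
    """Pass rate of one yes/no check per ISO week, across every scored interaction.

    interactions: iterable of (interaction_date, {check_name: bool}) pairs
    """
    counts = defaultdict(lambda: [0, 0])   # (iso_year, iso_week) -> [passes, total]
    for day, results in interactions:
        iso_year, iso_week, _ = day.isocalendar()
        counts[(iso_year, iso_week)][0] += int(results[check])
        counts[(iso_year, iso_week)][1] += 1
    return {week: passes / total for week, (passes, total) in sorted(counts.items())}

interactions = [
    (date(2025, 3, 3), {"verified_identity": True}),
    (date(2025, 3, 4), {"verified_identity": False}),
    (date(2025, 3, 10), {"verified_identity": True}),
    (date(2025, 3, 11), {"verified_identity": True}),
]
print(weekly_pass_rates(interactions, "verified_identity"))
```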

Using AI to Monitor Improvements

AI tools provide real-time insights into agent performance. Unlike traditional QA methods that evaluate only a small portion of interactions and often weeks after the fact, AI scores every interaction and updates dashboards within hours [2]. This allows you to quickly identify whether coaching strategies are working – or if additional intervention is needed.

Modern AI systems track key metrics like tone, accuracy, query completeness, and adherence to processes [2]. They even serve as predictive tools. For example, if an agent’s QA scores drop on a Monday, the system can flag a potential decline in customer satisfaction (CSAT) scores by midweek. This early warning gives you time to address issues before they escalate [2]. Automated alerts for specific score drops can also highlight areas where agents are struggling to implement what they’ve learned [2].
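A score-drop alert can be as simple as comparing an agent's recent average with the preceding baseline. This sketch assumes one average QA score per day; the 7-day baseline, 2-day recent window, and 10% threshold are illustrative assumptions. It only watches QA scores; linking a drop to a later CSAT decline is the job of the predictive models described earlier.

```python
from statistics import mean

def score_drop_alert(daily_scores, baseline_days=7, recent_days=2, max_drop=0.10):
    """Return the fractional drop if the recent average falls more than max_drop
    below the preceding baseline average, else None.

    daily_scores: an agent's average QA score per day, oldest first.
    """
    window = baseline_days + recent_days
    if len(daily_scores) < window:
        return None  # not enough history yet
    baseline = mean(daily_scores[-window:-recent_days])
    recent = mean(daily_scores[-recent_days:])
    drop = (baseline - recent) / baseline
    return drop if drop > max_drop else None

scores = [80, 81, 79, 80, 82, 78, 80, 68, 68]
alert = score_drop_alert(scores)
if alert is not None:
    print(f"QA score down {alert:.0%} vs. the prior week: check in with the agent")
```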

Here's a real-world example: In April 2025, a global biopharmaceutical company collaborated with Authenticx to improve human-to-AI agreement scores. By creating a tailored scorecard with specific tagging classifiers and analyzing 300 calls over three weeks, the company boosted agreement scores from 63% to 89%. This 26-percentage-point gain enhanced both regulatory compliance and customer satisfaction metrics [9].

Before rolling out AI monitoring fully, ensure proper calibration as outlined in Step 1. Once calibrated, redirect human QA efforts from random sampling to focus on high-stakes or low-scoring interactions flagged by AI [2]. This approach not only ensures comprehensive coverage but also reduces costs significantly – AI reviews cost just $0.01–$0.05 per ticket compared to $4.00 for manual reviews [2]. By continuously evaluating performance through data-driven methods, you reinforce the supportive coaching framework established earlier.
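To make the per-ticket figures concrete, the arithmetic below assumes a hypothetical team handling 10,000 tickets a month, manual QA sampling at the top of the 2–5% range, and the $4.00 and $0.01–$0.05 per-ticket costs cited above. Manual review then covers 500 tickets for $2,000, while AI scores all 10,000 for $100 to $500.

```python
def qa_cost_comparison(tickets_per_month, manual_sample_rate=0.05,
                       manual_cost_per_ticket=4.00, ai_cost_per_ticket=0.05):
    """Compare monthly spend and coverage of sampled manual QA vs. AI scoring of every ticket."""
    manual_reviewed = round(tickets_per_month * manual_sample_rate)
    return {
        "manual_reviewed": manual_reviewed,
        "manual_cost": manual_reviewed * manual_cost_per_ticket,
        "ai_reviewed": tickets_per_month,
        "ai_cost": tickets_per_month * ai_cost_per_ticket,
    }

for ai_price in (0.01, 0.05):
    c = qa_cost_comparison(10_000, ai_cost_per_ticket=ai_price)
    print(f"Manual: {c['manual_reviewed']} tickets for ${c['manual_cost']:,.2f} | "
          f"AI: {c['ai_reviewed']} tickets for ${c['ai_cost']:,.2f}")
```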

Common Mistakes to Avoid in QA Coaching

When it comes to QA coaching, even the best strategies can fall flat if certain missteps aren’t avoided. These mistakes can drain time, damage trust, and ultimately harm agent morale.

Focusing Too Much on Negative Feedback

If coaching sessions only highlight errors, they can quickly feel like punishment instead of a chance to grow. This approach often leads to a defensive mindset, where agents focus more on hitting metrics than on truly improving their skills. The satisfaction data points the same way: 91% of agents who receive personalized coaching report job satisfaction, compared to just 57% of those who receive generic feedback [1][6].

To create a more effective coaching experience, balance feedback by recognizing what agents are doing well. Highlight specific examples of excellent interactions alongside areas for improvement. And when using AI-generated scores, don’t treat them as the final word. Instead, combine the data with meaningful conversations to uncover root causes and reinforce positive behaviors. As Rebekah Carter from CX Today explains:

"AI coaching tools should scale visibility, not authority" [1]

Overlooking System-Level Issues in QA Data

Another common pitfall is blaming agents for problems that might actually stem from flawed systems. For instance, if QA data reveals low scores for specific ticket types – like refund requests or technical issues – it’s tempting to coach the agent. But the real problem could be outdated templates, unclear processes, or conflicting documentation [10]. If multiple agents struggle with the same type of interaction, that’s a strong signal of a system-wide issue rather than an individual shortcoming.

AI-powered QA tools can help by analyzing 100% of interactions, offering a broader view than traditional methods that only sample 2–5% [1][2]. For example, if billing inquiries suddenly show a drop in accuracy scores, don’t rush to retrain your agents. First, check if a recent policy or template change is causing confusion. As Rebekah Carter points out:

"The real upside of AI QA insights… [is they] let teams stop arguing about individual calls and start fixing what keeps breaking" [1]

Failing to address systemic issues can lead to what’s known as "apology loops", where agents repeatedly apologize for problems beyond their control. This not only drains their empathy but also makes coaching feel unfair. Before jumping into a coaching session over low scores, take a step back to assess whether internal processes or documentation might be at fault. Fix the system first – then focus on coaching the people.

AI-Driven Tools for Effective QA Coaching

When it comes to improving QA coaching, AI tools are changing the game. They take the guesswork out of the process, replacing random manual reviews with data-driven precision. Instead of analyzing just 2–5% of customer interactions, modern platforms can evaluate every single one – whether it’s through email, chat, or voice. This ensures that coaching efforts focus on the areas that will make the biggest difference [1][2].

Using AI for Automated QA Insights

AI-powered QA systems analyze customer interactions in real time, scoring them on tone, process adherence, accuracy, and completeness in a single pass. Because they apply the same criteria to every interaction, they reduce the reviewer-to-reviewer inconsistency and subjective bias that often come with manual reviews.

For example, Supportbench offers features like automated ticket summaries and sentiment analysis. These tools give coaches instant insights without the need to sift through lengthy case histories. If an agent’s tone dips during refund requests or sentiment shifts mid-conversation, the system flags these moments immediately. This means you can zero in on specific issues rather than spending time combing through transcripts.

Full coverage also exposes patterns that sampling misses. Because AI scores every interaction rather than a small sample, it might detect, for instance, that tickets handled on Tuesday afternoons consistently score 15% lower in tone. Insights like this are nearly impossible to catch with limited manual sampling [2].

By automating these evaluations, AI frees up time and resources, paving the way for more focused, actionable coaching.

Streamlining Feedback with AI Copilots

AI doesn’t just identify problems – it helps solve them. AI copilots turn raw data into meaningful coaching opportunities, cutting preparation time from hours to minutes. Take Supportbench’s AI Agent-Copilot, for example. It pulls together historical cases, internal guidelines, and external knowledge bases to provide tailored suggestions. Instead of manually hunting for a specific refund interaction, the copilot gives you a concise summary, highlighting what worked well and what didn’t.

These tools also offer predictive capabilities. They can estimate CSAT (Customer Satisfaction) and CES (Customer Effort Score) before survey results even come in [5]. If the system predicts a low CSAT score based on tone or completeness, you can step in with proactive coaching to address the issue before it escalates.

Quality stops being a quarterly report and becomes a daily dashboard. You spot problems in hours, not months.

The real power of AI lies in its ability to handle repetitive tasks, like checking if an agent used the customer’s name or included a reference number. This lets human coaches focus on deeper, more nuanced areas – like building empathy, navigating judgment calls, and strengthening relationships [1][2]. By taking care of the heavy lifting, AI transforms coaching into a collaborative effort focused on growth, not just a dull review of spreadsheets.
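Checks like these are clear-cut enough that plain pattern matching can handle the simple cases. The sketch below is a deterministic stand-in, not how AI QA tools implement them: the reference-number format, the first-name rule, and the function name are all assumptions.

```python
import re

# Hypothetical reference-number format such as "CASE-10423"; adjust to your helpdesk.
REFERENCE_NUMBER = re.compile(r"\b[A-Z]{2,4}-\d{4,}\b")

def routine_checks(agent_messages, customer_name):
    """Run cheap yes/no checks over everything the agent wrote on one ticket."""
    text = " ".join(agent_messages)
    first_name = customer_name.split()[0]
    name_pattern = rf"\b{re.escape(first_name)}\b"
    return {
        "used_customer_name": re.search(name_pattern, text, re.IGNORECASE) is not None,
        "gave_reference_number": REFERENCE_NUMBER.search(text) is not None,
    }

print(routine_checks(
    ["Hi Maria, thanks for reaching out.", "Your case number is CASE-10423."],
    "Maria Lopez",
))
```

Offloading checks like these leaves human coaches free for the judgment calls a rule cannot capture.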

Conclusion: Building a Culture of Growth with QA Coaching

QA coaching thrives when it’s seen as a source of support, not as a form of surveillance. The trick is to use AI data as a conversation starter rather than a final verdict. When agents understand that the insights are there to help them improve, the tone of coaching shifts from defensive to collaborative. This shift creates the groundwork for structured, productive coaching sessions.

As AI continues to play a larger role in QA, how you implement it makes all the difference. The aim isn’t to tighten control but to expand visibility, allowing managers to coach more effectively and efficiently.

"AI can surface insight at a scale no manager ever could. But growth still happens in conversations, not dashboards." – Rebekah Carter, CX Today [1]

Start by establishing clear boundaries to ensure AI is used as a tool for growth, not as a means of punishment. Bring agents into the process by involving them in shaping coaching themes and feedback language. This collaborative approach transforms coaching into a shared effort, reducing any temptation to manipulate metrics. By doing so, you create a system that evolves alongside your operational needs.

Building on earlier discussions about actionable insights, use AI to address broader challenges. Focus on one or two themes at a time, using patterns identified by AI to tackle systemic issues like outdated workflows, broken processes, or unclear templates. This method turns QA data into a resource for continuous improvement, boosting both individual performance and overall team morale.

FAQs

How do I make AI QA feel fair to agents?

Creating a sense of fairness in AI-driven quality assurance (QA) starts with transparent and consistent practices. Begin by calibrating QA scoring using standardized criteria, paired with regular alignment sessions to ensure everyone is on the same page.

When implementing AI, it’s important to focus on reducing bias. Instead of fixating on scores, leverage AI for actionable insights that help improve performance. Communication also plays a key role – clearly explain the process, provide necessary context, and ensure agents understand the "why" behind the evaluations.

Finally, foster a coaching-first environment. The goal should always be growth and improvement rather than punishment. By combining these approaches, you can create a QA process that feels supportive and motivates agents to do their best without making them feel judged.

What QA metrics should we coach on first?

Start with the metrics that give the clearest, most actionable insights:

- Tone: Evaluating professionalism and empathy in interactions.

- Accuracy: Ensuring information provided is correct and reliable.

- Completeness: Confirming all necessary details are addressed.

- Process Adherence: Checking compliance with established workflows.

- Resolution Quality: Measuring how effectively issues are resolved.

AI-powered QA tools can automatically evaluate these areas, giving managers the data they need to identify areas for improvement. To ensure evaluations are fair, it’s important to standardize scoring practices. This can be achieved through tools like standardized scorecards, calibration sessions to align managers, and AI-driven mechanisms to detect potential biases. Starting with these metrics lays the groundwork for constructive coaching and ongoing progress.

How can agents dispute an AI QA score?

Agents have the option to challenge an AI QA score through a clear and straightforward process. This usually starts with submitting a review request, including any relevant context or supporting evidence. Discrepancies are then addressed during calibration sessions with managers. Open communication and a focus on ongoing improvement play a key role in resolving disputes effectively. This approach not only ensures fairness in QA evaluations but also helps agents feel heard and supported.