AI chatbots can save businesses money, but only if they truly solve customer issues. In B2B support, where accuracy and trust are critical, focusing on autonomous resolution – not just deflection – is key. Here’s what you need to know:

- Deflection rate measures how many inquiries are resolved without human agents. Basic chatbots handle 10–30%, while advanced systems can achieve up to 92%.

- Human-handled inquiries cost $4.13–$6.00 each, compared to $0.50–$0.70 for AI interactions.

- Poor deflection (where customer problems remain unresolved) can erode trust and hurt satisfaction.

- The right AI system can cut costs, improve efficiency, and boost satisfaction, with agentic AI projected to handle up to 80% of common support issues by 2029.

To succeed, prioritize support tools that resolve issues fully, maintain a strong knowledge base, and ensure effective escalation when needed. The goal is to balance cost savings with customer satisfaction.

What Deflection Rates Can You Actually Expect?

AI Chatbot Deflection Rates by Automation Maturity Level

Deflection Rates by Industry and Product Complexity

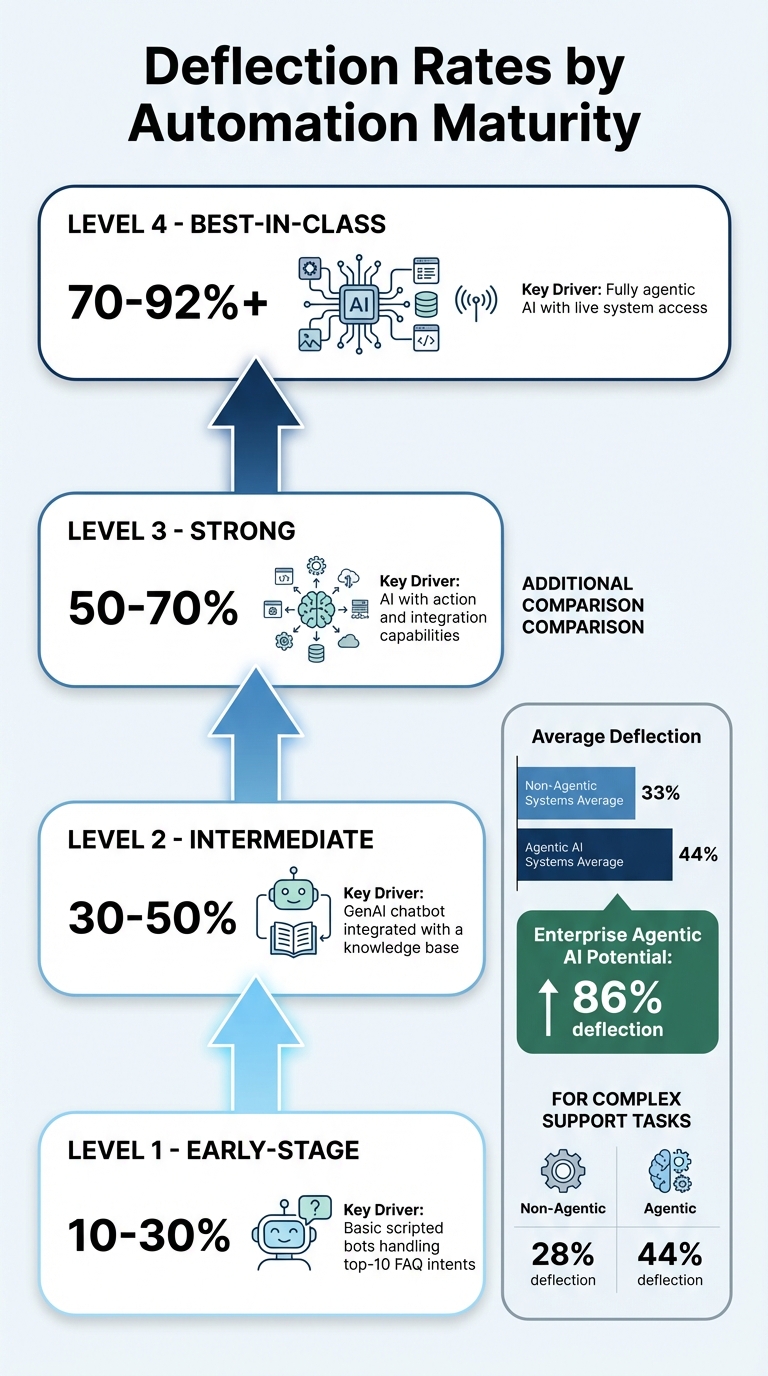

The deflection rates you can achieve depend heavily on the complexity of your product and support setup. For instance, basic FAQ-style chatbots often deflect 10–30% of inquiries. On the other hand, more advanced agentic AI systems – capable of tasks like processing refunds or accessing live data – can achieve deflection rates as high as 70–92% in top-tier deployments [5][10].

Here’s a breakdown of deflection rates by automation maturity:

| Automation Stage | Deflection Rate | Key Drivers |
|---|---|---|
| Early-stage | 10–30% | Basic scripted bots handling top-10 FAQ intents [5] |
| Intermediate | 30–50% | GenAI chatbot integrated with a knowledge base [5] |
| Strong | 50–70% | AI with action and integration capabilities [5] |
| Best-in-class | 70–92%+ | Fully agentic AI with live system access [5] |

Non-agentic systems typically average a 33% deflection rate, while agentic AI systems average 44%, with potential to hit 86% in enterprise settings [5][10]. The difference becomes even more pronounced with complex support tasks: non-agentic systems manage only 28% deflection, whereas agentic systems reach 44% [10]. These numbers highlight how choosing the right system can significantly impact both cost savings and customer satisfaction.

In technical B2B support, deflection metrics often take a backseat. Matthew Plotkin, GTM Leader at Inkeep, explains:

"Deflection is a weak main KPI for technical support. The real metric is time to first useful response" [7].

For products requiring in-depth troubleshooting, AI is more effective in automating data collection – like logs, version numbers, and configuration details – than in resolving issues entirely.

While these benchmarks provide a starting point, real-world AI deployments are constantly evolving, pushing deflection rates higher over time.

How Deflection Rates Improve Over Time

Deflection rates don’t peak at launch – they improve as AI systems learn from real-world interactions. For example, between October 2024 and March 2025, Forma used Forethought Solve to raise its deflection rate from 30% to 39% by refining its ability to handle routine inquiries for over 13,800 users [11].

Grammarly offers another example. After the company implemented agentic AI, its deflection rate started at 60% and rose to 87% within 10 days. Integrating the AI with internal systems added another 5–10% and enabled the system to take meaningful actions [10].

This improvement comes from continuous learning. AI systems analyze escalated tickets, update training data, and refine their responses, effectively turning support agents into AI trainers and managers, often supported by AI agent copilots that surface real-time insights [3][2][9].

What Affects Your Deflection Rate

Several factors determine whether you hit the lower or upper end of these benchmarks:

- Knowledge base quality: AI trained on thorough documentation can achieve a 96% success rate [6].

- Product complexity: Simple tasks like password resets are easy to deflect, while multi-step troubleshooting often requires human intervention.

- Customer expectations: Speed matters – 90% of customers expect an immediate response [11]. However, rushing to deflect without resolving the issue can create “false deflection,” eroding trust [3].

The type of AI deployed also plays a critical role. Companies using basic, non-agentic AI report flat or worsening costs per resolution in 62% of cases because unresolved tickets still require human input [10]. For mid-volume companies handling 5,000–10,000 tickets monthly, costs average $18 per resolution with non-agentic AI but drop to $15 with agentic systems [10].

Regular optimization is essential to maintain and improve deflection rates. Use analytics to identify gaps, such as search queries returning no results or frequently escalated topics. Then, prioritize creating content to address these gaps [1][3]. Additionally, update AI training data before major product launches or seasonal spikes to handle surges in specific inquiries [2].
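
The gap analysis described above can be sketched in a few lines. This is a minimal illustration, assuming you can export search logs and escalation topics from your analytics tool; the field names (`query`, `results`) and the sample data are hypothetical, not taken from any specific product.

```python
from collections import Counter

# Hypothetical analytics export: each search has a query and a result count.
searches = [
    {"query": "sso setup", "results": 0},
    {"query": "reset password", "results": 4},
    {"query": "SSO setup", "results": 0},
    {"query": "webhook retries", "results": 0},
]

# Hypothetical topics attached to escalated conversations.
escalated_topics = ["billing", "sso setup", "billing", "api limits", "billing"]


def content_gaps(searches, escalated_topics, top_n=3):
    """Rank topics by zero-result searches plus escalations."""
    gaps = Counter()
    for s in searches:
        if s["results"] == 0:
            # Normalize case and whitespace so variants count as one topic.
            gaps[s["query"].strip().lower()] += 1
    for topic in escalated_topics:
        gaps[topic.strip().lower()] += 1
    return gaps.most_common(top_n)


print(content_gaps(searches, escalated_topics))
```

Topics at the top of the list are candidates for new or updated articles. Weighting both signals equally is a simplification; you may want escalations to count for more than failed searches.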

How to Improve Your Deflection Rates

Build a Better Knowledge Base

A solid knowledge base is the backbone of effective AI deflection. Without well-crafted content, AI tools struggle to resolve customer issues. The focus should shift from simply deflecting tickets to creating content that genuinely solves problems.

Start by identifying gaps in your knowledge base through AI analytics. Escalations can act as a diagnostic tool, revealing areas where content needs to be created or updated [3][7]. Matthew Plotkin, GTM Leader at Inkeep, emphasizes this approach:

"The best support orgs treat solved work as reusable knowledge… capture knowledge while solving and improve it over time so solutions are easy to find later" [7].

For technical support, ensure your documentation includes detailed context such as environment versions, configurations, and logs. This helps Tier 2/3 agents resolve escalated issues more efficiently [7]. Enhance your articles with visuals like screenshots, step-by-step guides, and short videos. These additions empower users to diagnose root causes instead of just addressing symptoms [12][8].

AI systems use semantic understanding to process your entire knowledge base, recognizing synonyms, paraphrasing, and intent regardless of how content is structured. However, Gartner reports that only 14% of support issues are resolved through self-service [13], highlighting the importance of creating high-quality, resolution-focused content.

A well-built knowledge base also lays the groundwork for deploying AI chatbots that can go beyond answering questions to taking actionable steps.

Use AI Chatbots for Intelligent Deflection

Modern AI chatbots should do more than just answer queries – they should handle transactions like checking order statuses, processing refunds, and updating payment details through CRM/API integrations. This capability can significantly improve deflection rates, with mature implementations often achieving rates between 60% and 80% [2][4].

Emma App, a fintech platform, illustrates this approach. By using the Crisp AI Chatbot to manage weekend and overnight inquiries, they achieved three times faster resolution times and increased conversation volume by 127% – from 3,500 to 7,200 monthly conversations – all without expanding their support team [2]. Geoffrey Safar, Head of Operations at Emma, explains:

"It’s not about replacing support. It’s about keeping customers cared for – even when no one’s online" [2].

AI-powered ticket routing based on confidence levels is another critical feature. When AI is uncertain, it should ask clarifying questions or refine its search instead of providing generic or inaccurate responses [8][4]. This is essential given that general-purpose language models can "hallucinate" in 58–82% of legal queries, while even domain-specific tools have error rates of 17–34% [4].
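
One way to picture confidence-based routing is as a small decision function. The sketch below is an assumption-laden illustration: the thresholds, the `Action` names, and the cap on clarifying questions are all hypothetical and would need tuning against your own audited conversations.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    CLARIFY = "ask_clarifying_question"
    ESCALATE = "escalate_to_human"


# Hypothetical thresholds, not values from any vendor.
ANSWER_THRESHOLD = 0.80
CLARIFY_THRESHOLD = 0.50


def route(confidence: float, clarifications_asked: int = 0,
          max_clarifications: int = 2) -> Action:
    """Pick the next step from a model confidence score in [0, 1]."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= ANSWER_THRESHOLD:
        return Action.ANSWER
    # Mid confidence: ask for more detail, but only a limited number of times.
    if confidence >= CLARIFY_THRESHOLD and clarifications_asked < max_clarifications:
        return Action.CLARIFY
    # Low confidence, or the clarifying questions have run out.
    return Action.ESCALATE


print(route(0.9), route(0.6), route(0.6, clarifications_asked=2), route(0.2))
```

Capping clarifying questions keeps the bot from looping on a customer who cannot supply more detail; after the cap, the conversation goes to a human instead of receiving a generic answer.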

Before going live, test your AI in simulation mode using historical ticket data. Vertical Insure used this method to predict resolution rates and pinpoint potential issues without affecting customer satisfaction. The result? They achieved 60–70% automation with zero customer-facing errors [4].
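
A simulation run can be as simple as replaying past tickets through the bot offline and tallying the outcomes. The sketch below is a generic illustration, not the method Vertical Insure used: `toy_bot`, `exact_match`, and the ticket fields are hypothetical stand-ins for your real bot call and your own grading step.

```python
from typing import Callable, Optional


def simulate(tickets, bot: Callable[[str], Optional[str]],
             judge: Callable[[str, str], bool]) -> dict:
    """Replay past tickets offline and tally how the bot would have done."""
    tally = {"resolved": 0, "wrong": 0, "escalated": 0}
    for t in tickets:
        answer = bot(t["question"])
        if answer is None:                      # bot declined, would hand off
            tally["escalated"] += 1
        elif judge(answer, t["human_answer"]):  # matches what the agent said
            tally["resolved"] += 1
        else:                                   # confident but incorrect
            tally["wrong"] += 1
    total = len(tickets)
    tally["predicted_resolution_rate"] = tally["resolved"] / total if total else 0.0
    return tally


def toy_bot(question):
    """Stand-in for a real bot: answers one canned question, else declines."""
    canned = {"How do I reset my password?": "Use the reset link on the login page."}
    return canned.get(question)


def exact_match(bot_answer, human_answer):
    """Stand-in grader; real grading usually needs human or rubric review."""
    return bot_answer.strip().lower() == human_answer.strip().lower()


tickets = [
    {"question": "How do I reset my password?",
     "human_answer": "Use the reset link on the login page."},
    {"question": "Why is my webhook failing?",
     "human_answer": "Rotate the signing secret."},
]
report = simulate(tickets, toy_bot, exact_match)
print(report)
```

The `wrong` count is the number to watch before launch, since those are the answers customers would have received with no human in the loop.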

When AI uncertainty remains, having a smart escalation process ensures unresolved issues are handled effectively.

Set Up Smart Escalation and Routing

A seamless escalation process is vital for maintaining customer satisfaction. When AI escalates a ticket, human agents should receive all relevant details, including the conversation history, customer environment, and troubleshooting steps already attempted [4][7]. This prevents customers from having to repeat themselves, which can be a major source of frustration.
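
The handoff described above maps naturally onto a small data structure. The schema below is a hypothetical sketch, assuming three kinds of context (conversation, environment, steps attempted); the field names are illustrative and not tied to any helpdesk API.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """Context handed to a human agent on escalation (hypothetical schema)."""
    ticket_id: str
    conversation: list = field(default_factory=list)     # messages so far
    environment: dict = field(default_factory=dict)      # versions, config
    steps_attempted: list = field(default_factory=list)  # troubleshooting tried

    def missing_context(self) -> list:
        """Return the names of sections that are still empty."""
        sections = {
            "conversation": self.conversation,
            "environment": self.environment,
            "steps_attempted": self.steps_attempted,
        }
        return [name for name, value in sections.items() if not value]


handoff = Handoff(
    ticket_id="T-1001",
    conversation=["Customer: exports fail with a timeout",
                  "AI: which version are you on?"],
    environment={"app_version": "4.2.1"},
)
print(handoff.missing_context())
```

A check like `missing_context()` can run just before the transfer, so the AI asks for anything still missing rather than leaving the agent to ask the customer again.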

Incorporate sentiment-based routing to detect customer frustration and prioritize escalations. For example, set rules to flag phrases like "still not working" for immediate human intervention. This is crucial, as 75% of customers report that prompt AI responses often fail to meet their needs [13].
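
A phrase-based trigger is the simplest form of this rule. The sketch below assumes plain keyword patterns; the pattern list is hypothetical, and production systems typically combine rules like these with a sentiment model rather than relying on them alone.

```python
import re

# Hypothetical trigger phrases; extend the list from your own transcripts.
FRUSTRATION_PATTERNS = [
    r"\bstill not working\b",
    r"\b(?:talk|speak) to (?:a |an )?(?:human|agent|person)\b",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in FRUSTRATION_PATTERNS]


def needs_human(message: str) -> bool:
    """Return True when a message matches any frustration pattern."""
    return any(p.search(message) for p in _COMPILED)


for msg in ("It is STILL not working after the update",
            "Can I talk to a person?",
            "Thanks, that solved it"):
    print(msg, "->", needs_human(msg))
```

Matching is case-insensitive and anchored on word boundaries, which avoids firing on partial words but will miss paraphrases such as "this keeps failing".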

Kickfin, a platform for cashless tip-outs, provides a great example. By using Forethought Solve to handle repetitive queries, they deflected over 2,000 tickets and achieved a 72% self-serve rate. This allowed their small support team to focus on more complex issues [11].

Regularly review the quality of escalations to ensure your triage system is working. Check if handoffs are effective or if customers return with the same issue within 24–48 hours. As Stevia Putri, Marketing Generalist at eesel AI, puts it:

"The real goal isn’t just deflection; it’s resolution. You need to know if your AI is actually solving problems, and how that compares to your traditional help center articles" [3].

How to Measure Deflection Success

Key Metrics to Track

Measuring deflection success involves keeping tabs on metrics that balance operational efficiency with customer satisfaction. These measurements help ensure that your deflection strategies are working without sacrificing the quality of the customer experience.

One of the most important metrics is resolution rate, which tracks the percentage of inquiries resolved without involving a human agent. This metric distinguishes between actual problem-solving and cases where customers simply abandon their inquiries out of frustration [3]. Similarly, the escalation rate is critical – it shows how often AI transfers conversations to human agents, providing insights into the system’s effectiveness [3].

For B2B technical support, Time to First Useful Response (TFUR) is a must-watch metric. This measures how quickly a customer receives a response that includes actionable information, not just a generic acknowledgment. In technical support scenarios, this metric can make or break the customer experience [7].
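
TFUR is straightforward to compute once responses are labeled. The sketch below assumes each response carries a timestamp and a usefulness flag; how a response earns that flag (an agent tag, a customer vote, a review rubric) is left open, and the tuple layout is a hypothetical choice.

```python
from datetime import datetime


def tfur_minutes(opened_at, responses):
    """Minutes from ticket open to the first response marked useful.

    `responses` is a list of (timestamp, is_useful) tuples.
    Returns None when no useful response exists yet.
    """
    useful = [ts for ts, is_useful in responses if is_useful]
    if not useful:
        return None
    return (min(useful) - opened_at).total_seconds() / 60


opened = datetime(2025, 3, 3, 9, 0)
responses = [
    (datetime(2025, 3, 3, 9, 1), False),   # generic acknowledgment
    (datetime(2025, 3, 3, 9, 45), True),   # first actionable reply
    (datetime(2025, 3, 3, 10, 30), True),
]
print(tfur_minutes(opened, responses))
```

In this sample the plain first-response time would be one minute, while TFUR is 45 minutes, which is the gap the metric is meant to expose.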

Another key metric is cost per resolution, which offers a clearer financial picture than cost per ticket. For example, in early 2025, 62% of companies using non-agentic AI systems reported flat or worsening costs per resolution because "deflected" tickets still required human intervention. In contrast, agentic AI systems reduced the cost per resolution to around $15, compared to $18 with non-agentic tools [10].
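
To see why the two cost metrics diverge, it helps to run the arithmetic. The sketch below uses hypothetical volumes and unit costs ($0.60 per AI interaction and $5.00 per human contact, chosen from within the ranges quoted in the introduction); none of the figures describe a real deployment.

```python
def support_costs(tickets, human_contacts, resolved_issues,
                  ai_unit_cost=0.60, human_unit_cost=5.00):
    """Compare cost per ticket with cost per resolution.

    Assumptions (illustrative only): every ticket passes through the AI
    first, and `human_contacts` includes "deflected" tickets that came back.
    """
    total = tickets * ai_unit_cost + human_contacts * human_unit_cost
    return {
        "total": total,
        "per_ticket": total / tickets,
        "per_resolution": total / resolved_issues,
    }


# Hypothetical month: 1,000 tickets, 700 human contacts, 900 issues resolved.
costs = support_costs(tickets=1_000, human_contacts=700, resolved_issues=900)
print({name: round(value, 2) for name, value in costs.items()})
```

Because unresolved issues shrink the denominator, cost per resolution rises whenever "deflected" tickets are abandoned or return to a human, even if cost per ticket looks stable.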

When it comes to Tier 2 and Tier 3 support, tracking context gathering efficiency is vital. This measures the AI’s ability to automatically collect relevant details – like logs and configurations – before a human engineer steps in to debug the issue [7]. It’s also important to separate metrics for Tier 1 (self-service coverage) from Tier 2/3 support (average handle time and TFUR) to avoid masking rising costs in complex technical support scenarios [7].

Next, let’s examine common pitfalls that can distort these metrics.

Common Mistakes in Measuring Deflection

One frequent mistake is treating deflection as a standalone success metric. High deflection rates can be misleading if they reflect unresolved issues or frustrated customers who gave up trying to contact support. For example, hiding the "contact us" button to artificially boost deflection numbers can backfire, leading to higher customer churn and long-term damage to the business [3].

A related issue is false deflection, where customers abandon interactions without a resolution. This often happens when support options are hidden, leaving customers feeling stranded [3]. The real goal should be resolution, not just a high deflection percentage.

Another common error is neglecting to track repeat contact rates. This metric reveals whether customers who used AI or self-service tools end up reopening tickets for the same issue within 24–48 hours. Relying solely on metrics like session timeouts or conversation closures can also be misleading; these only show that the interaction ended, not that the problem was solved [4].
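
Repeat contact rate can be computed from a plain contact log. The sketch below assumes each contact records a customer, a topic, a timestamp, and whether the AI closed it; these field names are hypothetical, and matching on an exact topic label is a simplification of how real systems link related tickets.

```python
from datetime import datetime, timedelta


def repeat_contact_rate(contacts, window_hours=48):
    """Share of AI-closed contacts followed by another contact from the
    same customer on the same topic within the window."""
    window = timedelta(hours=window_hours)
    ai_closed = [c for c in contacts if c["closed_by_ai"]]
    if not ai_closed:
        return 0.0
    repeats = 0
    for first in ai_closed:
        came_back = any(
            other["customer"] == first["customer"]
            and other["topic"] == first["topic"]
            and timedelta(0) < other["at"] - first["at"] <= window
            for other in contacts
        )
        repeats += came_back
    return repeats / len(ai_closed)


contacts = [
    {"customer": "acme", "topic": "sso",
     "at": datetime(2025, 1, 1, 9, 0), "closed_by_ai": True},
    {"customer": "acme", "topic": "sso",
     "at": datetime(2025, 1, 2, 10, 0), "closed_by_ai": False},
    {"customer": "globex", "topic": "billing",
     "at": datetime(2025, 1, 1, 9, 0), "closed_by_ai": True},
    {"customer": "globex", "topic": "billing",
     "at": datetime(2025, 1, 5, 9, 0), "closed_by_ai": False},
]
print(repeat_contact_rate(contacts))
```

Running the same data with `window_hours=24` and `window_hours=48` shows how sensitive the metric is to the window you pick, so it is worth fixing one window and reporting it consistently.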

With these challenges in mind, let’s explore how feedback can drive continuous improvement.

Use Feedback to Improve Performance

Customer feedback is a powerful tool for distinguishing between true resolution and false deflection. Simple prompts like "Was this helpful?" or "Did this solve your problem?" at the end of AI interactions can provide valuable insights into whether the customer’s issue was actually resolved [3].

Regular conversation audits are also essential. These audits help ensure that the AI is providing accurate and complete responses rather than prematurely closing interactions [4]. Metrics like high fallback rates – where the AI fails to understand queries – or frequent escalations can highlight gaps in the knowledge base or areas where the AI needs further training [2].

Feedback loops also play a critical role in refining your knowledge base. For example, when a ticket is eventually resolved by a human, reviewing whether the solution exists in your knowledge base can reveal opportunities to improve AI training [7]. Some organizations even use AI to identify new issue resolutions and automatically draft documentation, making similar future inquiries self-service by default [7].

Finally, track customer satisfaction (CSAT) alongside repeat contact rates for a complete picture of performance. For instance, agentic AI systems have been shown to improve CSAT by 64% while also achieving higher deflection rates (44% versus 33%) [10]. However, it’s important to pair CSAT scores with metrics like issue resolution and repeat contact rates to ensure that satisfaction aligns with actual problem-solving.

Conclusion

Deflection rates only matter if they actually solve customer problems. The real measure of success isn’t just keeping tickets away from support teams – it’s ensuring issues are resolved effectively. High deflection stats lose their meaning if customers give up in frustration or return with the same problem within 24–48 hours.

For B2B leaders, the focus should be on autonomous resolution, not just deflection. Metrics like repeat contact rates within 24–48 hours and Time to First Useful Response are better indicators of success. These numbers should lead to actionable steps. For more complex Tier 2/3 issues, human expertise is still essential. However, AI can speed up the process by collecting essential context – like logs, software versions, or configurations – before agents step in, saving valuable time [7].

Pair deflection metrics with quality indicators like CSAT (Customer Satisfaction Score) and resolution verification to ensure you’re solving real problems. Keep the "Contact Us" button easy to find – hiding it might boost deflection numbers artificially but risks frustrating customers and increasing churn [3]. Instead, use tools like sentiment-based escalation triggers, and ensure AI responses are both verifiable and tied to reliable sources to avoid inaccuracies or "hallucinations" [5].

Balancing operational goals with financial realities is key. Every resolved ticket should become part of a growing knowledge base. When human agents solve new issues, AI can help by drafting documentation updates automatically, turning today’s problems into tomorrow’s self-service answers [7]. Test your AI in simulation mode using past tickets to identify weaknesses and predict how it will perform in real-world scenarios [3].

AI technology has become far more affordable, with costs dropping 280× between November 2022 and October 2024 [5]. Looking ahead to 2029, agentic AI is projected to handle 80% of common support issues [5]. The decision isn’t whether to adopt AI for customer support – it’s about implementing it in a way that drives efficiency while maintaining genuine customer satisfaction. When AI tools are aligned with customer needs, deflection transforms into a powerful way to cut costs and build trust.

FAQs

What’s the difference between deflection and true resolution?

Deflection is the percentage of customer inquiries that AI handles without human assistance. True resolution means the issue is fully and accurately solved, whether by AI or by a human agent. Deflection aims to minimize human involvement; true resolution confirms that the problem is actually gone.

How do I calculate deflection without counting “false deflection”?

To measure deflection without counting false deflection, count only cases that are genuinely resolved through AI or self-service. Exclude conversations that are marked as resolved but later need human involvement. Confirm each resolution with follow-up metrics, such as repeat contacts, or with customer feedback. That way your deflection rate reflects real efficiency gains rather than abandoned conversations.
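
As a rough sketch of that calculation, the function below reports a raw rate next to a verified one. The record fields (`ai_only`, `confirmed`, `recontacted`) are hypothetical, and treating a missing survey answer as acceptable by default is an assumption you can tighten with `require_confirmation`.

```python
def deflection_rates(conversations, require_confirmation=False):
    """Return raw and verified deflection rates for a list of conversations.

    Each conversation is a dict with hypothetical keys:
      ai_only      - True if no human agent was involved
      confirmed    - True/False from a "Did this solve your problem?" prompt,
                     or None when the customer did not answer
      recontacted  - True if the customer came back about the same issue
    """
    total = len(conversations)
    if total == 0:
        return {"raw": 0.0, "verified": 0.0}
    raw = sum(1 for c in conversations if c["ai_only"])
    verified = 0
    for c in conversations:
        if not c["ai_only"] or c["recontacted"]:
            continue                      # human involved or issue came back
        if c["confirmed"] is False:
            continue                      # customer said it did not help
        if c["confirmed"] is None and require_confirmation:
            continue                      # strict mode: silence is not a yes
        verified += 1
    return {"raw": raw / total, "verified": verified / total}


sample = [
    {"ai_only": True, "confirmed": True, "recontacted": False},
    {"ai_only": True, "confirmed": None, "recontacted": True},
    {"ai_only": True, "confirmed": False, "recontacted": False},
    {"ai_only": False, "confirmed": True, "recontacted": False},
]
print(deflection_rates(sample))
```

The distance between the raw and verified figures is a direct estimate of false deflection in the sample.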

What’s a realistic first-quarter deflection target for my B2B support team?

A practical first-quarter deflection target for a B2B support team usually falls between 20% and 60%. Reaching the higher end depends on effective AI integration and streamlined workflows. Some claim that AI platforms can reach rates close to 60%, but actual outcomes depend heavily on how well the system is configured and rolled out.