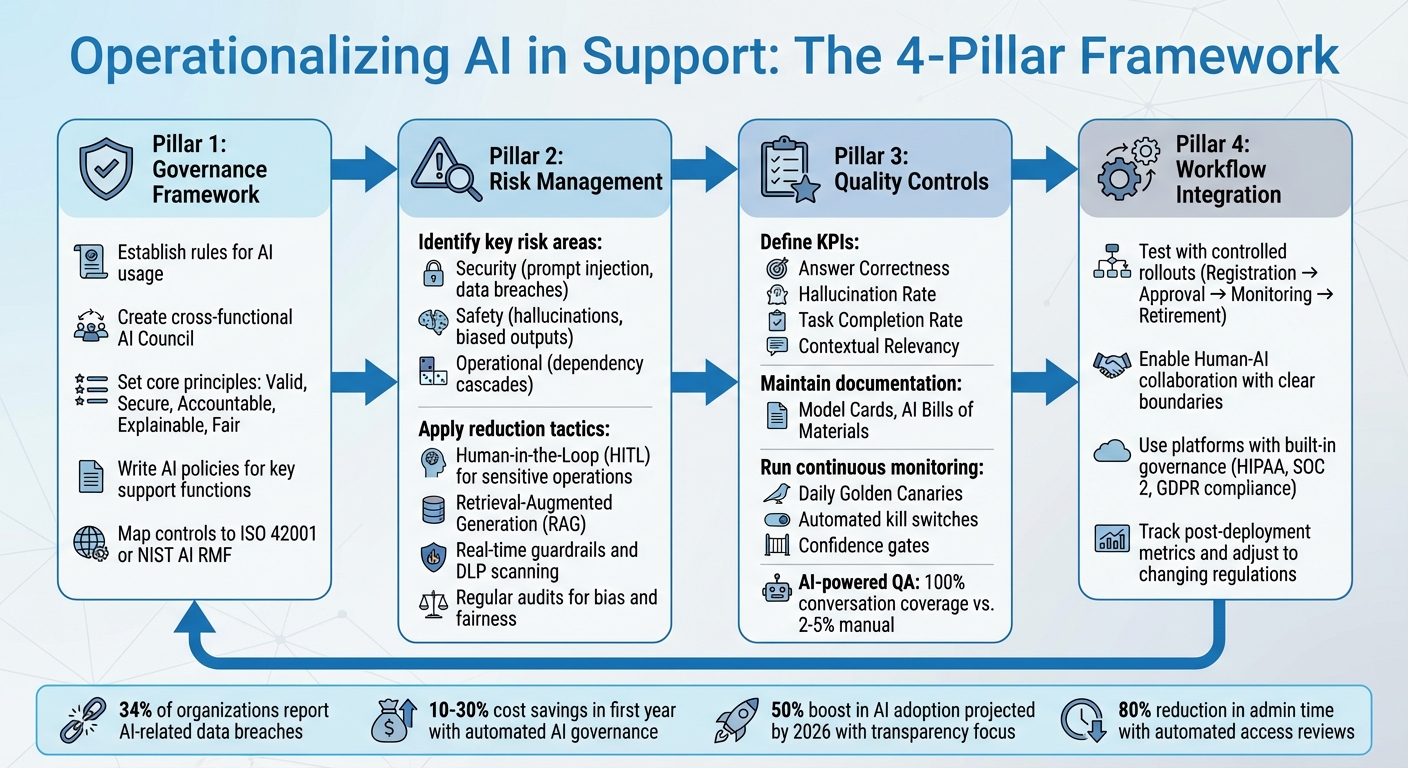

Operationalizing AI in customer support requires clear governance, robust risk management, and effective quality controls. Without these, you risk compliance violations, financial penalties, and damage to client relationships. Here’s the process in simple terms:

- Governance Framework: Establish rules for AI usage, ensuring systems are accountable, explainable, and aligned with business goals. Create a cross-functional team to oversee AI policies and compliance.

- Risk Management: Identify risks like security breaches, biased outputs, or inaccurate chatbot responses. Use safeguards like human oversight, data anonymization, and regular audits to minimize these issues.

- Quality Controls: Monitor AI outputs with key performance indicators (KPIs) like accuracy, relevance, and response time. Maintain detailed documentation and conduct regular audits to ensure consistency.

- Integration into Workflows: Embed governance and risk controls into daily operations, using automated tools and human-AI collaboration to balance efficiency with safety.

AI Governance Framework: 4-Stage Implementation Process for Customer Support



Building an AI Governance Framework for Customer Support

A governance framework is the backbone of managing AI in customer support. Without it, you’re essentially running AI tools without clear rules or accountability. This framework should seamlessly integrate into your daily operations, ensuring AI is managed responsibly and effectively.

The first step? Establish clear governance principles to guide every AI-related decision.

Set Core Governance Principles

Define the essential rules that will shape how AI operates in your support system. These principles should align with what the NIST AI RMF describes as "trustworthy AI" – systems that are valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed. In practice, this means keeping humans in control of critical decisions, reducing bias in automated responses, being upfront about customer data usage, and setting clear lines of accountability for any issues.

Your principles should also support key business goals, such as improving customer retention and delivering high-quality service, rather than just ticking off regulatory boxes. For example, if your team works with healthcare clients, your rules should strictly prohibit uploading Protected Health Information (PHI) into unapproved AI tools. To stay ahead of evolving regulations, map your internal controls to established standards like ISO 42001 or NIST AI RMF.

Create a Cross-Functional Governance Team

AI governance isn’t just an IT issue – it requires input from multiple areas of expertise. Form a cross-functional team, often called an AI Council or AI Center of Excellence, that includes leaders from support, legal, security, IT, and data management. Each member brings a unique perspective: support leaders focus on customer impact, legal experts ensure compliance with regulations like GDPR or the EU AI Act, security teams manage access controls, and data specialists monitor for bias.

For example, in 2023, ServiceNow launched a Digital Technology AI Council led by Walid Sleiman, Senior Director of Internal AI Governance. This council united developers, architects, and compliance professionals to create an AI System Development Lifecycle (AI-SDLC) with 44 specific control objectives embedded into their development tools. Their "Compliant by Design" approach automatically checked all AI projects against standards like NIST AI RMF and ISO 42001.

"Bringing everyone onboard (the village), getting the right sponsorship from our leaders, and aligning early on clear roles & responsibilities… was essential to getting this governance structure off to a successful start." – Walid Sleiman, Senior Director, Internal AI Governance, ServiceNow

The goal of this team isn’t to stifle innovation but to enable safe and scalable AI usage by setting clear rules from the start. Make sure to appoint an executive sponsor to give the team authority and recruit AI "champions" across departments to address ethical concerns specific to their areas.

This team will also play a critical role in developing targeted AI policies.

Write AI Policies for Key Support Functions

B2B customer support requires tailored AI policies for specific tasks like case management, updating AI-powered knowledge bases, and assisting agents. For example, you might allow AI to draft internal case summaries but require a human to review and approve any AI-generated content before sharing it with customers.

Classify tasks into categories such as acceptable (e.g., creating response templates, analyzing ticket trends) or high-risk (e.g., uploading customer PII, using AI outputs for contract decisions without review). Policies should clearly outline which data types need anonymization and explicitly ban regulated data like PII or PHI from being entered into unvetted tools. With an estimated 90% of an organization’s data being unstructured, and much of it powering GenAI applications like chatbots, strong data input safeguards are critical.
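
The classification above can be written down as a small lookup that tools or reviewers consult before an AI request runs. The following is a minimal sketch, not a definitive implementation: the task names, the `check_request` function, and the three decision strings are hypothetical and only illustrate the acceptable versus high-risk split and the ban on regulated data in unvetted tools.

```python
from enum import Enum


class Tier(Enum):
    ACCEPTABLE = "acceptable"
    HIGH_RISK = "high_risk"


# Hypothetical task names; replace them with the tasks your own policy covers.
TASK_TIERS = {
    "create_response_template": Tier.ACCEPTABLE,
    "analyze_ticket_trends": Tier.ACCEPTABLE,
    "draft_internal_case_summary": Tier.ACCEPTABLE,
    "upload_customer_pii": Tier.HIGH_RISK,
    "contract_decision": Tier.HIGH_RISK,
}

# Data types the policy bans from unvetted tools.
REGULATED_DATA = {"pii", "phi"}


def check_request(task, data_types, tool_vetted):
    """Return 'allow', 'needs_human_review', or 'block' for one AI request."""
    # Regulated data never goes into a tool that has not been vetted.
    if not tool_vetted and REGULATED_DATA & set(data_types):
        return "block"
    if TASK_TIERS.get(task) is Tier.ACCEPTABLE:
        return "allow"
    # High-risk and unclassified tasks both fall back to human review.
    return "needs_human_review"


print(check_request("analyze_ticket_trends", {"ticket_metadata"}, tool_vetted=True))
print(check_request("draft_internal_case_summary", {"phi"}, tool_vetted=False))
```

Sending unclassified tasks to human review is a deliberate default in this sketch: a task the policy has not considered yet is treated as risky until someone classifies it.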

Identifying and Reducing Risks in AI-Powered Support

Once governance is in place, the next step is to pinpoint potential AI failure points in support operations and map out strategies to address them. These initial efforts lay the groundwork for implementing practical risk management measures.

Identify Key Risk Areas

AI risks in customer support typically fall into two categories: security (e.g., prompt injection attacks, data breaches) and safety (e.g., unintended outputs such as false information or offensive content, which can cause reputational damage). One of the most pressing issues is AI hallucinations – when the system generates incorrect or fabricated information. These can take the form of "unjustified misinformation" (completely made-up details) or "justified misinformation" (errors based on flawed knowledge). Other challenges include vulnerabilities that may expose sensitive data or biased ticket routing that can degrade service quality. On top of these, operational risks such as dependency cascades – where a single failure in one system disrupts others – must also be addressed.

For example, in 2025, an international consulting firm agreed to partially refund the Australian government for a report worth about AU$440,000 after the report was found to contain inaccuracies linked to poorly supervised AI use. This case highlights how AI errors can lead to both financial losses and reputational setbacks.

To catch risks early, conduct a thorough use case risk assessment. Evaluate each AI workload against criteria like fairness, reliability, and transparency. Automated tools can help maintain a detailed inventory of AI systems, while techniques like red teaming – using adversarial tools like PyRIT – can expose vulnerabilities such as prompt injection before they escalate into larger problems.

Apply Risk Reduction Tactics

To keep risks in check, implement targeted strategies that align with your governance framework, especially for high-value B2B scenarios. Start by limiting AI deployment to narrow, well-defined tasks rather than relying on it for broad, generalized functions. For instance, restricting AI to straightforward tasks like basic account updates can minimize the impact of potential errors. Techniques like Retrieval-Augmented Generation (RAG) can further ground AI responses in up-to-date company policies and knowledge bases, reducing reliance on the model’s own training data, which may be outdated or incorrect.

For sensitive operations – such as processing refunds or handling high-value accounts – use a Human-in-the-Loop (HITL) approach to ensure oversight. Also, clean and standardize your data sources to maintain consistency, especially with preferred brand terminology.

"Success with generative AI agents depends on a solid understanding of the risks and a clear plan to mitigate and manage them." – ASAPP

Regularly audit for bias and fairness to identify discriminatory patterns in tasks like ticket routing or customer tiering. Deploy real-time guardrails to counter issues like prompt injection, data leaks, or harmful content generation. Automated tools, such as Data Loss Prevention (DLP) systems, can scan prompts for sensitive information before the AI processes them.
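
To make the prompt-scanning idea concrete, here is a minimal sketch of a pre-processing step that redacts sensitive values before a prompt reaches the model. It is a regex-based stand-in, not a real DLP product: the three patterns, the placeholder format, and the `scan_prompt` name are assumptions for illustration, and production DLP tools cover far more data types with far fewer false negatives.

```python
import re

# Illustrative patterns only; real DLP systems use much broader detection.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def scan_prompt(prompt):
    """Redact sensitive values and report which pattern types were found."""
    findings = []
    redacted = prompt
    for label, pattern in PATTERNS.items():
        redacted, count = pattern.subn(f"[{label.upper()}_REDACTED]", redacted)
        if count:
            findings.append(label)
    return redacted, findings


text = "Customer jane.doe@example.com gave SSN 123-45-6789 for ticket 4821."
safe_text, found = scan_prompt(text)
print(safe_text)
print(found)
```

The list of findings can feed the audit log, so reviewers see how often agents try to paste regulated data into prompts without the data itself being stored.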

| Risk Type | Specific Risk | Reduction Strategy |
|---|---|---|
| Bias | Discriminatory ticket routing or tiering | Regular audits; diverse training datasets |
| Security | Prompt injection; data leaks | Guardrails; DLP scanning; role-based access |
| Inaccuracy | Hallucinations; false information | RAG grounding; layered system architecture |
| Operational | Model drift; performance decay | Continuous monitoring; lifecycle telemetry |
| Compliance | Regulatory violations (e.g., GDPR) | AI-SDLC alignment; automated policy checks |

Many modern platforms now include automated safeguards, making risk management more efficient.

Use AI Features with Built-in Risk Controls

Some platforms, like Supportbench, integrate safeguards directly into their AI features. For instance, its predictive CSAT tool monitors interaction patterns – such as frequent follow-ups or escalating language – to flag potential dissatisfaction, enabling teams to step in before issues escalate. Similarly, dynamic SLAs leverage AI to detect negative sentiment combined with critical keywords, automatically prioritizing tickets so high-value clients receive faster responses.

These embedded controls reduce operational risks by automating quality checks that would otherwise demand constant human intervention. Organizations that adopt automated AI governance have reported cost savings of 10–30% in SaaS and AI expenses within the first year. Automated access reviews for AI tools can also cut administrative time by up to 80%.

"By focusing on grounding responses in reliable data, employing safety mechanisms, and fostering continuous improvement, we can ensure that AI responses are safely grounded and free from harmful hallucinations." – Heather Reed, Product Manager, ASAPP

The ultimate goal is to identify risks early, manage them effectively, and escalate issues for human review when necessary.

Setting Up Quality Control Measures for AI Outputs

Once risks are addressed, the next step is to implement thorough quality control measures to ensure AI systems produce reliable and accurate outputs. This involves setting clear benchmarks, maintaining detailed records, and conducting regular audits to streamline support operations and keep performance consistent over time.

Define Key Performance Indicators (KPIs)

Start by identifying the most relevant metrics to evaluate the quality of your AI outputs. For instance:

- Answer Correctness: Measures factual accuracy.

- Hallucination Rate: Tracks how often the system generates incorrect or fabricated information.

- Task Completion Rate and Tool Correctness: Useful for AI systems managing complex workflows, ensuring API calls and automated actions execute properly.

- Contextual Relevancy: For Retrieval-Augmented Generation (RAG) systems, this metric ensures the correct knowledge base articles are retrieved for each query.

Other critical metrics include Draft Acceptance, which shows how often AI-generated responses are adopted without edits, and Edit Distance, which measures the extent of modifications needed. Pay attention to Abstention/Escalation Rates, where the AI appropriately declines to answer or escalates queries, and Latency (response times at p50/p95/p99 levels) to maintain speed. Additionally, monitor for Data Drift (shifts in customer queries), Performance Drift (accuracy or cost changes), and Safety Drift (increased biased or toxic outputs).
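
Several of these metrics are simple to compute once you log each AI draft next to the reply the agent actually sent. The sketch below assumes such a log exists as a list of `(ai_draft, sent_reply)` pairs and a list of latencies in milliseconds; the function names are hypothetical, edit distance is plain character-level Levenshtein distance, and percentiles use the nearest-rank method.

```python
import math


def levenshtein(a, b):
    """Character-level edit distance between two strings."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def draft_acceptance_rate(pairs):
    """Share of AI drafts that agents sent without any edits."""
    if not pairs:
        return 0.0
    return sum(1 for draft, sent in pairs if draft == sent) / len(pairs)


def mean_edit_distance(pairs):
    """Average number of character edits agents made to AI drafts."""
    if not pairs:
        return 0.0
    return sum(levenshtein(draft, sent) for draft, sent in pairs) / len(pairs)


def percentile(values, p):
    """Nearest-rank percentile, e.g. p=95 for p95 latency."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


latencies_ms = [120, 80, 200, 95, 150]
print(percentile(latencies_ms, 50), percentile(latencies_ms, 95))
```

Reporting p95 and p99 alongside the median matters because a fast median can hide a slow tail that a minority of customers still experience.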

"When you’re evaluating everything, you’re evaluating nothing at all. Too much data != good." – Jeffrey Ip, Cofounder, Confident AI

To avoid drowning in data, stick to the "5 Metric Rule": choose 1-2 metrics tailored to your use case (e.g., AI-driven sentiment analysis for brand voice consistency) and 2-3 general metrics aligned with your system’s architecture (e.g., faithfulness for RAG systems). This targeted approach ensures you focus on what matters most.

Maintain Documentation and Transparency

Thorough documentation is key to ensuring traceability and accountability. For every AI model, prompt, and knowledge base update, maintain detailed records. Use Model Cards to outline intended use, limitations, accuracy benchmarks, and safety considerations. Create AI Bills of Materials that list all models, datasets, libraries, and dependencies, enhancing supply chain security.

When releasing new versions, document everything: data versions, code commit hashes, hyperparameters, and human approvals. This level of detail not only simplifies rollbacks but also supports forensic audits if quality issues arise.

Track data and model lineage to document every step from raw data to the final AI output. This is especially important for meeting regulatory requirements like GDPR and CCPA. Treat operational artifacts – such as prompts, routing rules, and safety filters – the way you treat code: version each change, never edit a released version in place, and track every version in a central registry.
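
One way to capture these release details is an immutable record per deployment. The sketch below is an assumption about how such a record could look, not a prescribed schema: the `ReleaseRecord` fields, the example version strings, and the `missing_fields` check are hypothetical. The prompt is stored as a SHA-256 fingerprint so any later change to it is detectable.

```python
import hashlib
from dataclasses import FrozenInstanceError, dataclass, replace


def sha256_of(text):
    """Fingerprint a prompt or rule file so later edits are detectable."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ReleaseRecord:
    model_version: str
    data_version: str
    code_commit: str
    hyperparameters: tuple  # (name, value) pairs, kept as a tuple so they stay immutable
    prompt_sha256: str
    approved_by: tuple = ()  # names or IDs of human approvers


def missing_fields(record):
    """List what still blocks this release; an empty list means it is complete."""
    required = ("model_version", "data_version", "code_commit", "prompt_sha256")
    missing = [name for name in required if not getattr(record, name)]
    if not record.approved_by:
        missing.append("approved_by")
    return missing


prompt = "You are a support assistant. Cite a knowledge base article in every answer."
draft = ReleaseRecord(
    model_version="support-bot-1.4.0",  # hypothetical version labels
    data_version="kb-2025-06-01",
    code_commit="abc1234",
    hyperparameters=(("temperature", 0.2),),
    prompt_sha256=sha256_of(prompt),
)
print(missing_fields(draft))

# Approval creates a new record instead of editing the old one.
approved = replace(draft, approved_by=("support-lead",))
try:
    approved.code_commit = "changed"
except FrozenInstanceError:
    print("release records cannot be edited after creation")
```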

While manual quality assurance typically covers only 2-5% of interactions, AI-powered QA can assess 100% of conversations. Transparent logging ensures every AI decision is traceable and auditable.

Run Continuous Monitoring and Auditing

Continuous monitoring builds on your governance framework to ensure consistent quality. Monitor the entire lifecycle of requests: inputs, processing, outputs, and outcomes. Use daily Golden Canaries – test cases designed to detect regressions – and automated kill switches to quickly address issues. Implement confidence gates to route low-confidence outputs to human agents for review before delivery. Add every AI failure to a bug library as a permanent test case so the same failure cannot recur unnoticed in future versions.
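
The canary and kill-switch mechanics can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation: `answer_fn` stands for whatever function calls your AI system, a canary passes when the answer contains an expected phrase, and the 0.9 pass-rate threshold is an arbitrary example value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Canary:
    prompt: str
    must_contain: str  # text a correct answer is expected to include


def run_canaries(answer_fn, canaries, min_pass_rate=0.9):
    """Run every canary and decide whether the kill switch should trip."""
    if not canaries:
        raise ValueError("at least one canary is required")
    failures = [
        c for c in canaries
        if c.must_contain.lower() not in answer_fn(c.prompt).lower()
    ]
    pass_rate = 1 - len(failures) / len(canaries)
    return {
        "pass_rate": pass_rate,
        "kill_switch": pass_rate < min_pass_rate,
        "failures": failures,
    }


def add_to_bug_library(bug_library, prompt, must_contain):
    """Turn a production failure into a permanent canary, skipping duplicates."""
    case = Canary(prompt, must_contain)
    if case not in bug_library:
        bug_library.append(case)
    return bug_library


def fake_model(prompt):
    # Stand-in for the real AI system, used only for this example.
    if "password" in prompt:
        return "You can reset your password from the login page."
    return "I am not sure."


suite = [
    Canary("How do I reset my password?", "login page"),
    Canary("What is the refund window?", "30 days"),
]
report = run_canaries(fake_model, suite)
print(report["pass_rate"], report["kill_switch"])
```

Substring matching is the simplest possible check. Teams that need more nuance often swap in a scoring function, but the control flow (run the suite, compare against a threshold, trip the switch) stays the same.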

In October 2025, a research team led by Cen Mia Zhao and Jeremy Werner introduced an "Agent-in-the-Loop" (AITL) framework for a US-based customer support operation. This system added real-time annotations – such as agent adoption rationales and knowledge relevance checks – directly into workflows. The result? A 14.8% boost in precision@8, an 11.7% improvement in retrieval recall, and an 8.4% increase in generation helpfulness, while reducing retraining cycles from months to weeks.

Regular audits should combine automated checks with periodic reviews by external auditors or unbiased internal teams to catch blind spots. This blend of continuous monitoring and scheduled human oversight ensures your AI maintains high-quality outputs as operations grow.

Adding Governance to AI Support Workflows

Once quality controls are in place, the next step is weaving governance into the fabric of your daily support operations. This isn’t just about policies sitting in a binder – it’s about creating workflows where governance becomes a natural, automated part of how you work. Let’s dive into how you can make this happen.

Test AI Features with Controlled Rollouts

Adopt a structured implementation process with four stages: Registration (defining the purpose and data sources), Approval (ensuring privacy and fairness), Monitoring (keeping an eye on drift and performance), and Retirement (decommissioning the AI when it is no longer needed). This lifecycle ensures AI features pass critical checkpoints before they go live.

To refine rollouts, use A/B testing to compare new AI features against established benchmarks. Classify AI systems by risk levels – minimal, limited, high, or prohibited – and apply oversight accordingly. For instance, an AI that auto-tags cases might need minimal testing, but one that handles account modifications should undergo rigorous validation.
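
The four-stage lifecycle and the risk tiers can be combined into a small gate that a rollout tool consults before moving a feature forward. The sketch below is one possible encoding, offered as an assumption: the allowed transitions, the checks listed per tier, and the `advance` and `required_checks` names are hypothetical and should be replaced by your own policy.

```python
from enum import Enum


class Stage(Enum):
    REGISTRATION = "registration"
    APPROVAL = "approval"
    MONITORING = "monitoring"
    RETIREMENT = "retirement"


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    PROHIBITED = "prohibited"


# A feature can move forward one stage at a time or be retired at any point.
ALLOWED = {
    Stage.REGISTRATION: {Stage.APPROVAL, Stage.RETIREMENT},
    Stage.APPROVAL: {Stage.MONITORING, Stage.RETIREMENT},
    Stage.MONITORING: {Stage.RETIREMENT},
    Stage.RETIREMENT: set(),
}

# Hypothetical oversight requirements per tier.
CHECKS = {
    RiskTier.MINIMAL: ["smoke test"],
    RiskTier.LIMITED: ["smoke test", "A/B test against benchmark"],
    RiskTier.HIGH: ["smoke test", "A/B test against benchmark",
                    "privacy and fairness review", "human approval"],
    RiskTier.PROHIBITED: [],
}


def required_checks(tier):
    """Checks a feature must pass before it leaves the Approval stage."""
    return list(CHECKS[tier])


def advance(current, target, tier):
    """Return the new stage, or raise if the move breaks the lifecycle."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    if tier is RiskTier.PROHIBITED and target is not Stage.RETIREMENT:
        raise PermissionError("prohibited systems can only be retired")
    return target


print(advance(Stage.REGISTRATION, Stage.APPROVAL, RiskTier.HIGH).value)
print(required_checks(RiskTier.MINIMAL))
```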

Incorporate automated compliance checks into your CI/CD pipeline, so every AI feature must meet safety standards before it’s deployed. Additionally, implement end-to-end observability to catch anomalies like model drift or algorithmic bias during rollouts.

"Put AI in your risk register. No one’s going to argue with that. Get an AI policy. Board should be asking management for a policy."

– Richard Barber, CEO, MindTech Group

Enable Human-AI Collaboration

Clearly define where AI ends and human involvement begins. Assign specific human owners to oversee AI agents and set operational boundaries to ensure AI doesn’t act beyond its intended scope.

Design workflows where AI handles routine tasks but escalates to human agents for complex issues, such as when access is restricted or nuanced B2B scenarios arise. For high-risk actions – like approving refunds or modifying sensitive account data – implement tiered authorization requiring human approval.

Leverage confidence scoring to route uncertain AI outputs to human agents for review before they’re finalized. Build systems with graceful degradation, where AI defaults to an assist-only mode if its confidence dips below a set threshold. Ensure every AI action is traceable by assigning unique identities to AI agents in audit trails.
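
As a rough illustration of how confidence routing, tiered authorization, and audit identities fit together, consider the sketch below. Everything in it is an assumption made for the example: the 0.8 threshold, the action names, the three routing outcomes, and the `ai-agent-07` identifier are hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical high-risk actions that always need a human sign-off.
HIGH_RISK_ACTIONS = frozenset({"approve_refund", "modify_account_data"})


def route(action, confidence, threshold=0.8):
    """Decide how much autonomy the AI gets for one action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < threshold:
        # Graceful degradation: the AI only drafts, a human decides and sends.
        return "assist_only"
    if action in HIGH_RISK_ACTIONS:
        # Tiered authorization: confident or not, a human must approve.
        return "human_approval"
    return "auto"


def audit_entry(agent_id, action, confidence, decision):
    """Build one audit-trail record tied to a unique AI agent identity."""
    return {
        "agent_id": agent_id,
        "action": action,
        "confidence": confidence,
        "decision": decision,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


decision = route("approve_refund", 0.93)
entry = audit_entry("ai-agent-07", "approve_refund", 0.93, decision)
print(entry["agent_id"], entry["decision"])
```

Checking confidence before risk level means a low-confidence refund request drops to assist-only mode rather than waiting in an approval queue with an unreliable recommendation attached.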

"With the right oversight, generative AI agents built with robust safety mechanisms create no more risk than humans."

– Heather Reed, Product Manager, ASAPP

This approach shifts human roles from hands-on triage to high-level oversight, focusing on monitoring AI accuracy and handling edge cases. It creates a scalable support system that maintains quality without requiring a proportional increase in staff.

By blending human oversight with built-in platform safeguards, you bridge the gap between operational performance and compliance.

Use Platforms with Built-in AI Governance

AI-native platforms simplify governance by embedding compliance and risk controls right into the system.

For example, platforms like Supportbench come with built-in compliance for HIPAA, SOC 2, and GDPR, reducing the need for manual governance measures. Standard features like automated confidence scoring, centralized logging, and human-in-the-loop workflows eliminate the need for extensive custom development.

Look for platforms offering centralized logging, where all AI and human interactions are recorded in one place. The ability to benchmark and deploy new models (like GPT-5) in less than 24 hours – while keeping decision trails documented and auditable – gives teams flexibility without compromising governance.

With 34% of organizations already reporting at least one AI-related data breach, choosing solutions with built-in governance isn’t just smart – it’s essential. These platforms reduce the risk of AI missteps and make scaling easier by shifting compliance from complex development to simple configuration.

Monitoring, Improving, and Scaling AI Governance

AI governance must keep pace with business growth, evolving regulations, and advancing technology. Even the most robust systems can lose alignment with operational goals if not regularly monitored and refined. Building on your governance framework, the next step ensures continuous alignment and improvement.

Track Post-Deployment Metrics

Effective AI monitoring goes beyond basic uptime checks. You need a system that provides comprehensive visibility into inputs, processing, outputs, and outcomes.

Key areas to monitor include data quality, performance consistency, and safety issues. Address these by prioritizing risks based on severity.

Daily test cases, often referred to as "golden canaries", can help identify regressions in live environments. Additionally, automated kill switches should be in place to quickly shut down systems if critical issues arise.

Collect Feedback for Continuous Improvement

Metrics are essential, but they only tell part of the story. Stakeholder feedback can uncover the real-world impact of AI systems. Set up clear channels for agents and stakeholders to report ethical concerns or compliance issues.

Create feedback loops that involve IT, legal, compliance teams, and end users to refine AI workflows and strategies. For instance, if agents frequently report unnecessary case escalations, it might indicate a need to adjust confidence thresholds, improve training data, or implement dynamic SLAs.

"Governance is a system for improvement – not just a gate."

– Alation

Adopt a "release train" schedule to manage updates effectively. Weekly releases can address urgent changes, while monthly updates can handle more comprehensive refinements. Include non-technical release notes to ensure all stakeholders understand the changes and their implications. This structured approach minimizes the risks of unreviewed, ad hoc updates.

Adjust to Changing Needs and Regulations

AI governance is not a one-and-done effort. Conduct quarterly cross-departmental reviews to ensure policies stay aligned with evolving business requirements and regulatory changes. Assess individual AI systems on a risk-based cadence: quarterly for high-risk systems, while annual reviews may suffice for lower-risk ones. These reviews should align with your governance principles to maintain compliance and operational efficiency.
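
A risk-based cadence is easy to automate so that reviews do not depend on someone remembering them. The following sketch assumes two risk levels labeled `high` and `lower` and computes the next due date from the last review; the labels and function names are hypothetical.

```python
import calendar
from datetime import date

# Quarterly for high-risk systems, annual for lower-risk ones.
REVIEW_INTERVAL_MONTHS = {"high": 3, "lower": 12}


def add_months(start, months):
    """Add calendar months, clamping the day to the end of shorter months."""
    index = start.month - 1 + months
    year = start.year + index // 12
    month = index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def next_review(last_review, risk_level):
    """Return the date on which the next assessment is due."""
    return add_months(last_review, REVIEW_INTERVAL_MONTHS[risk_level])


print(next_review(date(2025, 1, 31), "high"))
print(next_review(date(2025, 1, 31), "lower"))
```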

NIST plans a formal review of its AI Risk Management Framework no later than 2028, incorporating community feedback. Stay proactive by maintaining detailed version logs for models, prompts, and training data. This allows for rollbacks if new deployments introduce bias or errors.

By 2026, organizations that prioritize AI transparency, trust, and security are projected to see a 50% boost in adoption, business outcomes, and user acceptance. Companies that enhance their data culture alongside AI governance could experience performance gains of up to 54%. These efforts aren’t just about compliance – they’re strategic moves that can directly strengthen your competitive edge.

"AI governance is a journey. It is expected to evolve as AI scales and operations grow."

– Informatica

Conclusion

Turning the frameworks and controls discussed earlier into an operational strategy is the final piece of the puzzle.

To embed AI into customer support successfully, organizations need strong governance, risk management, and quality controls. These elements help transform AI from a trial phase into a dependable, long-term asset. Without these safeguards, companies face serious risks like compliance violations, data breaches, and a loss of customer trust. Consider this: 34% of organizations have already reported at least one AI-related data breach, and 40% of technology leaders admit their AI governance programs fall short.

As highlighted in the governance and risk management sections, running AI effectively means establishing clear accountability and creating systems for quick issue resolution. Treating AI systems like products – with defined ownership, roadmaps, and safety measures – ensures that problems are addressed promptly. Transparency and trust are key. By 2026, organizations that prioritize these values could see AI adoption and acceptance increase by 50%.

"Governance is not a burden – it is the foundation on which ethical, scalable, and future-proof AI enterprises are built."

– Adeptiv AI

Supportbench integrates governance into its design from the start, offering tools like confidence scoring, fallback logic, audit trails, and automated quality assurance monitoring. These features lower financial risks and eliminate the heavy engineering workload that 62% of CX leaders say hinders AI adoption.

The companies that succeed with AI won’t be the ones rushing to deploy it – they’ll be the ones that do it responsibly, with transparency and care. Governance acts as the backbone, allowing organizations to scale AI confidently while continuing to innovate. This balanced approach safeguards your customers, reputation, and competitive advantage as your AI systems grow.

FAQs

How can customer support teams safely implement AI while ensuring governance, risk management, and quality control?

To use AI safely in customer support, it’s crucial to have a strong governance framework in place. This framework should cover compliance, reduce risks, and ensure consistent service quality. It involves managing the entire AI lifecycle – right from planning, through deployment, to ongoing monitoring – and assigning clear roles and responsibilities across teams like legal, compliance, and engineering.

Some key steps include creating policies to tackle bias, data security, and accuracy concerns, conducting regular risk assessments, and ensuring transparency by making AI decisions explainable. Ongoing monitoring and audits are essential to catch potential problems early, keeping AI systems dependable and in line with regulations. By focusing on these principles, customer support teams can confidently integrate AI, building trust and maintaining reliable, high-quality service.

How can organizations safely implement AI in customer support while managing risks?

To use AI safely in customer support, it’s crucial to have a strong risk management system in place. Start by performing regular evaluations to spot potential problems, such as bias, data security risks, or inaccuracies in the system. Clear governance policies play a key role – assign specific roles, responsibilities, and accountability to teams like legal, data science, and engineering.

Using transparent and explainable AI helps make decisions easier to understand and review, which can lower the chances of unfair outcomes. Ongoing monitoring is equally important to assess performance, identify bias, and ensure compliance with changing regulations. Combine this with solid data management, robust cybersecurity practices, and teamwork across departments to build a safe and dependable setup for AI-driven customer support.

What are the key quality control measures to ensure accurate and safe AI outputs in customer support?

To maintain reliable and effective AI in customer support, quality control measures are a must. Start by reviewing all customer interactions to spot potential problems and identify areas where your AI could improve. This kind of regular performance monitoring helps catch errors early and keeps your system dependable over time.

It’s also important to implement auditability and safety controls. These allow you to track how AI makes decisions, promoting transparency. Using versioning for datasets and models ensures consistency and makes it easier to roll back changes if something goes wrong. Together, these steps help minimize risks like bias or mistakes, ensuring your AI can handle the complexities of customer support with confidence.