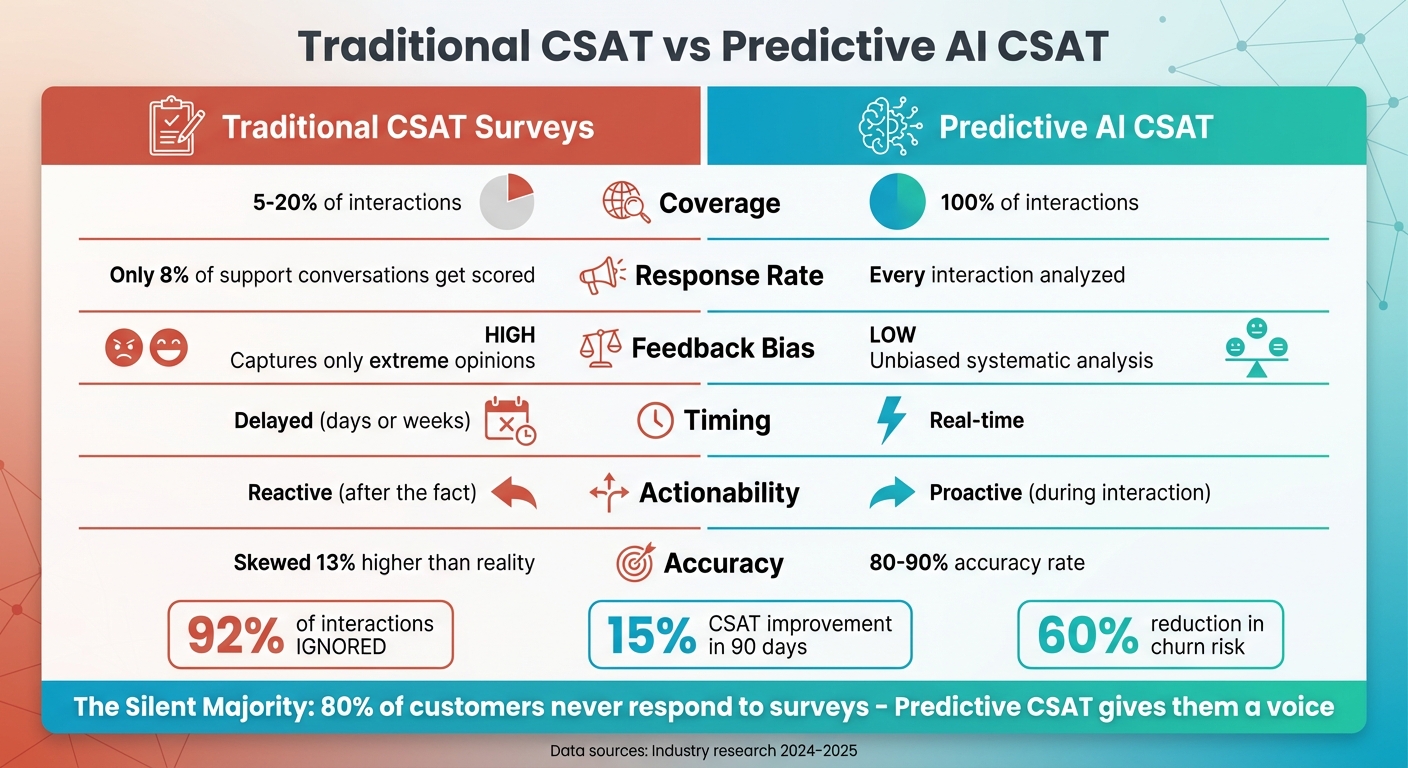

Customer satisfaction surveys miss the mark. They typically capture feedback from 20% of interactions or fewer, often from customers with extreme opinions. That leaves 80% or more of conversations unmeasured, creating blind spots in understanding customer sentiment.

Predictive CSAT changes the game. By applying sentiment analysis to 100% of customer interactions – emails, chats, calls, and more – AI models estimate satisfaction levels in real time. Well-implemented models reach 80–90% accuracy against actual survey results, offering instant insights that help teams act before issues escalate.

Key Points:

- Traditional Surveys: Low response rates (5-20%) and biased results from extreme feedback.

- Predictive CSAT: Real-time satisfaction scores for every interaction, covering all customers.

- Benefits: Early risk detection, reduced churn, and improved coaching for support teams.

This AI-driven approach provides a complete view of customer sentiment, replacing outdated survey methods with actionable insights.

Traditional CSAT vs Predictive AI CSAT Comparison



Problems with Traditional CSAT Surveys

Traditional CSAT methods face several challenges: low response rates, survey fatigue, delayed feedback, and a lack of real-time insight. Response rates typically fall between 5–20%[1][4][7]. In a study of 53 B2B and B2C customers, for example, only 8% of support conversations received a CSAT score: surveys were sent after just 39% of interactions, and only 21% of those surveys were answered[6]. Brian Donohue, VP of Product at Intercom, highlights the issue:

"The metric most customer service teams live by – the one that determines bonuses, drives strategy, and gets presented to the board – ignores 92% of what’s actually happening." [6]

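The 8% figure is the product of the two rates, and the 92% in the quote is its complement. A quick check in Python (`survey_coverage` is an illustrative helper, not something from the study):

```python
def survey_coverage(send_rate: float, response_rate: float) -> float:
    """Share of all conversations that end up with a CSAT score."""
    return send_rate * response_rate

# Rates from the study cited above: surveys sent after 39% of
# conversations, 21% of those surveys answered.
coverage = survey_coverage(send_rate=0.39, response_rate=0.21)
unmeasured = 1 - coverage

print(f"scored: {coverage:.1%}, unmeasured: {unmeasured:.1%}")
# prints: scored: 8.2%, unmeasured: 91.8%
```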
Low Response Rates and Biased Results

One of the biggest issues with traditional CSAT surveys is their tendency to capture responses primarily from customers with extreme opinions – either very positive or very negative – leading to skewed results[1][5]. Surveyed conversations often show satisfaction scores that are 13% higher than non-surveyed ones, demonstrating a clear positive bias[6]. Franka, Director of Customer Support at Intercom, raises a critical question:

"If you are a support leader out there I ask you – what do you think your ‘real’ CSAT would be if you got 100% of responses back? You and I both know it’s not in the 90s as you are presenting every month." [6]

While open-ended questions could provide richer insights, they typically receive fewer responses because customers find them time-consuming. On the other hand, fixed-choice formats, like 1–5 scales, simplify analysis but fail to capture the complexity of nuanced B2B interactions.

Adding to these challenges, customers often face repeated survey requests, further reducing the quality of feedback.

Survey Fatigue in Long-Running B2B Cases

Customers are regularly inundated with feedback requests, from dining experiences to ride-sharing services and beyond. In long-term B2B relationships, this constant flow of surveys leads to "survey fatigue." Over time, customers either ignore these requests entirely or provide random ratings just to get through the process, introducing noise into the data. Tod Famous, Chief Product Officer at Crescendo, explains:

"Surveys were built for convenience, not accuracy. The fixed-choice structure simplifies analysis but distorts reality." [7]

This cycle of repetitive requests not only lowers response rates but also discourages meaningful engagement, making it harder to gather actionable insights.

Even when customers do respond, the delayed nature of traditional surveys poses another hurdle.

Delayed Feedback That Limits Action

Traditional surveys often deliver feedback days or even weeks after an interaction[2]. By the time the results are in, the opportunity for timely intervention has already passed. This retrospective approach forces teams to respond to past issues rather than addressing them in real time. Jared Ellis, Senior Director of Global Product Support at Culture Amp, shares his experience:

"I haven’t had a single CSAT response that I’ve been able to coach one of my team members on for about two or three months… We were worrying more about the metric than we were about the feedback." [6]

For the roughly 80% of customers who never respond, there is no feedback to wait for at all. Their concerns stay invisible, leaving teams unable to take proactive steps to resolve emerging problems.

| Feature | Traditional CSAT | Predictive AI CSAT |
|---|---|---|
| Coverage | 5–20% of interactions[4] | 100% of interactions[4] |
| Feedback Bias | High (captures extremes only)[1] | Low (unbiased analysis)[4] |
| Timing | Delayed (days or weeks)[2] | Real-time[2] |
| Actionability | Reactive (after the fact)[2] | Proactive (during interaction)[1][2] |

The limited scope and delayed nature of traditional CSAT surveys leave a significant gap in understanding customer sentiment. This blind spot can hinder timely support and prevent teams from addressing issues as they arise.

What is Predictive CSAT and How Does It Work?

Predictive CSAT leverages AI and machine learning to evaluate customer satisfaction by analyzing interactions – like emails, chats, voice transcripts, and operational data – without needing traditional surveys[1]. Instead of relying on feedback from the small percentage of customers who respond to surveys, predictive models use conversational data, behavior patterns, and historical trends to estimate satisfaction for every customer after each interaction.

The system examines various factors, including sentiment, tone, and keywords (like "frustrated" or "thank you"), as well as operational details such as response times and case durations. Historical survey data is also used to link these patterns to likely satisfaction levels[1][2]. Eric Klimuk, Founder and CTO at Supportbench, highlights the benefit:

"Predictive scores give you visibility into the likely experience of the ~80% of customers who don’t respond to surveys."[1]

When properly implemented, these predictive models can achieve accuracy rates of 80–90% when compared to actual survey results[2]. This allows businesses to assess satisfaction across their entire customer base, not just the small group that provides direct feedback.
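Here, accuracy means agreement between the predicted label and the survey answer on the conversations that did receive a survey. A minimal sketch, assuming scores have already been bucketed into hypothetical `"sat"`/`"dissat"` labels (the labels below are made up):

```python
from typing import Sequence

def agreement_rate(predicted: Sequence[str], actual: Sequence[str]) -> float:
    """Fraction of surveyed conversations where the prediction matches the survey."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    matches = sum(p == a for p, a in zip(predicted, actual))
    return matches / len(actual)

# Hypothetical labels for ten surveyed conversations
predicted = ["sat", "sat", "dissat", "sat", "sat", "dissat", "sat", "sat", "dissat", "sat"]
actual    = ["sat", "sat", "dissat", "sat", "dissat", "dissat", "sat", "sat", "sat", "sat"]

print(agreement_rate(predicted, actual))  # 0.8
```

Raw agreement can flatter a model when most surveys are positive, since always predicting "satisfied" already scores well; it is worth checking accuracy on the dissatisfied cases separately.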

How AI Analyzes Customer Interactions

AI dives deep into both the content of customer interactions and supporting operational metrics to identify satisfaction signals. It processes emails, chat logs, and voice transcripts to assess sentiment, emotional tone, keywords, and language subtleties[1]. Behavioral indicators – like interruptions, changes in sentiment during the conversation, response delays, or a customer asking for a human agent – are also tracked[2].

Beyond the conversational data, operational metadata is factored in, such as how long a case takes to resolve compared to its complexity, how many replies an agent sends, time to first response, and whether the case involved escalations or transfers[1]. Advanced large language models (LLMs) further evaluate qualitative elements like whether the customer felt understood or if their issue was resolved[3]. All these insights are combined into a single predictive score that reflects overall satisfaction.
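To make "combining signals into a single score" concrete, here is a toy scorer. Everything in it is an assumption for illustration: the keyword lists stand in for a real sentiment model, and the weights are hand-set, whereas a production model would learn them from historical survey data.

```python
import math
from dataclasses import dataclass

# Hypothetical keyword lists; a real system would use a trained sentiment model
NEGATIVE = {"frustrated", "still", "again", "cancel", "unacceptable"}
POSITIVE = {"thanks", "thank", "great", "perfect", "resolved"}

@dataclass
class Interaction:
    text: str                    # customer-side transcript
    first_response_hours: float  # time to first response
    agent_replies: int           # number of agent replies
    transfers: int               # handoffs between agents or teams
    escalated: bool

def keyword_sentiment(text: str) -> int:
    """Positive minus negative keyword hits: a crude stand-in for sentiment analysis."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)

def predicted_csat(x: Interaction) -> float:
    """Combine language and operational signals into one score in (0, 1).

    The weights are hand-set for illustration only; in practice they would be
    learned from conversations that have historical survey answers.
    """
    z = (
        0.5
        + 0.8 * keyword_sentiment(x.text)
        - 0.15 * x.first_response_hours
        - 0.1 * x.agent_replies
        - 0.6 * x.transfers
        - 1.0 * x.escalated
    )
    return 1 / (1 + math.exp(-z))  # logistic function maps z into (0, 1)

happy = Interaction("Great, thanks! That resolved it.", 1.0, 2, 0, False)
unhappy = Interaction("Still broken again. I'm frustrated.", 20.0, 9, 2, True)
print(round(predicted_csat(happy), 2), round(predicted_csat(unhappy), 2))
```

The logistic function is one common way to squash a weighted sum into a 0–1 score; it is not necessarily what any particular vendor uses.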

Real-Time vs. Retrospective Feedback

One standout feature of predictive CSAT is its ability to provide immediate feedback. Unlike surveys that might arrive days or weeks after an interaction, predictive models generate satisfaction scores in real time or shortly after an interaction ends[2]. This immediacy enables supervisors to take action – like initiating live coaching or recovery efforts – while the customer is still engaged, preventing issues from escalating.

Cresta emphasizes this proactive approach:

"Predictive CSAT analyzes virtually all customer interactions in real-time, generating satisfaction predictions during conversations while agents can still act on the insights."[2]

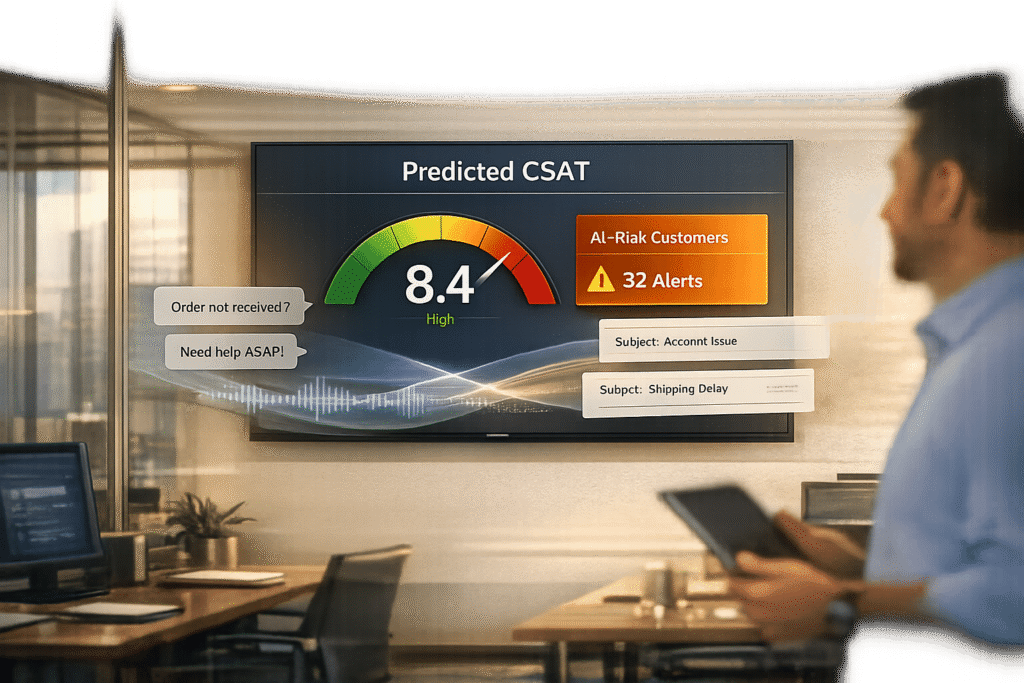

For B2B support teams handling high-value accounts, this capability acts as an early warning system. Low scores can trigger automated alerts to Customer Success Managers (CSMs), allowing them to intervene before the situation worsens or leads to customer churn[1]. This shifts satisfaction measurement from merely reflecting past performance to becoming a vital tool for real-time customer care.

Core AI Techniques for Predictive CSAT

Predictive CSAT uses three main AI techniques to gauge customer satisfaction, focusing on different aspects of support interactions. These methods analyze everything from the emotional tone of conversations to operational patterns that might indicate frustration. By understanding how these techniques work, B2B support teams can apply them effectively and interpret their results with confidence.

Sentiment Analysis and Emotional Trajectory

AI-driven sentiment analysis evaluates the language customers use in support interactions to gauge satisfaction levels in real time. Advanced models go a step further by tracking the emotional trajectory to pinpoint when satisfaction drops. This includes analyzing word choices, interruptions, and response times[2].
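Tracking the emotional trajectory boils down to scoring each customer message in order and watching for a sharp fall. A sketch, assuming per-message sentiment scores in [-1, 1] already come from some sentiment model; `first_sharp_drop`, the window size, and the drop threshold are all illustrative choices:

```python
from typing import Optional, Sequence

def first_sharp_drop(scores: Sequence[float], window: int = 2,
                     drop: float = 0.5) -> Optional[int]:
    """Return the index of the first message whose sentiment falls at least
    `drop` below the mean of the preceding `window` messages, or None."""
    for i in range(window, len(scores)):
        baseline = sum(scores[i - window:i]) / window
        if baseline - scores[i] >= drop:
            return i
    return None

# Hypothetical per-message sentiment for one chat, in [-1, 1]
trajectory = [0.4, 0.3, 0.2, -0.5, -0.6]
print(first_sharp_drop(trajectory))  # 3
```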

For example, KOHO’s User Success Department adopted predictive CSAT and real-time sentiment analysis between 2024 and 2025. Monika Aufdermauer, VP of User Success, called the system an "early warning system" that enabled proactive intervention before issues escalated. Within 90 days, KOHO’s CSAT score rose from 4.1 to 4.6, a 0.5-point gain, or roughly 12% over the starting score[8].

One key advantage of sentiment analysis is its broad coverage. While traditional CSAT surveys capture feedback from just 5–20% of customers, sentiment analysis evaluates 100% of interactions[1][4]. This reveals insights from the "silent majority" – the 80% of customers who don’t respond to surveys but whose satisfaction is still critical, especially in B2B settings where each account represents significant revenue[1][4]. Beyond sentiment, AI can also predict escalations in real time, flagging high-risk cases early.

Escalation and Intent Prediction

Escalation prediction focuses on identifying interactions at risk of turning into major issues before customers voice dissatisfaction. By analyzing ticket text, metadata, and historical trends, AI flags signs of potential escalations, cancellations, or churn[9][1]. For instance, a noticeable drop in satisfaction during a live chat can trigger immediate alerts for senior agents to step in[2].
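Learning escalation risk from historical tickets can be sketched with a small multinomial Naive Bayes text classifier trained on past tickets labeled by whether they escalated. Naive Bayes is a stand-in picked for brevity, not the method used by any tool cited here, and the training tickets are invented. It also looks only at ticket text, ignoring the metadata a real model would include:

```python
import math
from collections import Counter

def tokenize(text: str) -> list[str]:
    words = (w.strip(".,!?").lower() for w in text.split())
    return [w for w in words if w]

class NaiveBayesEscalation:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts: list[str], escalated: list[bool]) -> "NaiveBayesEscalation":
        # Both classes must appear in the training data, or the log prior is undefined.
        self.word_counts = {True: Counter(), False: Counter()}
        self.doc_counts = Counter(escalated)  # tickets per class, for the priors
        for text, label in zip(texts, escalated):
            self.word_counts[label].update(tokenize(text))
        self.vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        return self

    def _log_joint(self, tokens: list[str], label: bool) -> float:
        """log P(label) + sum of log P(token | label) over known tokens."""
        counts = self.word_counts[label]
        denom = sum(counts.values()) + len(self.vocab)
        log_prior = math.log(self.doc_counts[label] / sum(self.doc_counts.values()))
        return log_prior + sum(
            math.log((counts[t] + 1) / denom) for t in tokens if t in self.vocab
        )

    def escalation_probability(self, text: str) -> float:
        tokens = tokenize(text)
        yes = self._log_joint(tokens, True)
        no = self._log_joint(tokens, False)
        m = max(yes, no)  # subtract the max before exp() for numerical stability
        p_yes, p_no = math.exp(yes - m), math.exp(no - m)
        return p_yes / (p_yes + p_no)

# Invented history: (ticket text, did it escalate?)
history = [
    ("Outage again, this is the third time, escalate to your manager", True),
    ("We are losing revenue, need an urgent fix or we cancel", True),
    ("Still not working after two weeks, very frustrated", True),
    ("How do I export a report to CSV?", False),
    ("Thanks, the password reset worked", False),
    ("Quick question about invoice dates", False),
]
model = NaiveBayesEscalation().fit([t for t, _ in history], [y for _, y in history])
print(round(model.escalation_probability("Still down again, we may cancel"), 2))
```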

Models trained on historical data have reached 88% accuracy in predicting likely escalations[9]. Applied effectively, this technology can reduce escalation rates by 45% and cut response times by up to 86%, from 10–22 hours to just 1–3 hours[9].

For B2B support teams, this proactive method shifts satisfaction measurement from being a backward-looking metric to a real-time tool for action. If a high-value account is flagged with a "Predicted Dissatisfied" score, automated alerts notify Customer Success Managers immediately. This allows for timely intervention before the situation deteriorates[1]. AI also recommends "Next Best Actions", such as specific outreach templates or resource suggestions, to resolve problems faster[9].
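The alert-and-route logic described above is essentially a rule table sitting on top of the model's scores. A sketch with made-up thresholds, field names, and action names; none of this is a real product's configuration:

```python
from dataclasses import dataclass

@dataclass
class ScoredCase:
    case_id: str
    predicted_csat: float   # 0..1, from a predictive model
    annual_value: float     # account revenue (hypothetical field)
    escalation_risk: float  # 0..1, from an escalation model

def next_best_action(case: ScoredCase, high_value: float = 100_000) -> str:
    """Map a scored case to a hypothetical follow-up action."""
    dissatisfied = case.predicted_csat < 0.4  # illustrative "Predicted Dissatisfied" cutoff
    if dissatisfied and case.annual_value >= high_value:
        return "alert_csm"  # notify the account's CSM right away
    if case.escalation_risk >= 0.7:
        return "route_to_senior_agent"
    if dissatisfied:
        return "manager_review"
    return "no_action"

cases = [
    ScoredCase("C-1", 0.25, 250_000, 0.3),
    ScoredCase("C-2", 0.80, 40_000, 0.9),
    ScoredCase("C-3", 0.30, 10_000, 0.2),
    ScoredCase("C-4", 0.90, 500_000, 0.1),
]
for c in cases:
    print(c.case_id, next_best_action(c))
```

Keeping the rules explicit like this makes it easy for managers to review and adjust them without retraining the model.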

Behavioral and Resolution Data Modeling

AI doesn’t just focus on what customers say – it also examines how interactions unfold. Behavioral data modeling evaluates interaction metadata like case duration, transfer frequency, and escalation patterns to infer satisfaction levels[1][9].

Certain patterns stand out as red flags. For example, slow response times, interruptions, and repetitive exchanges often indicate customer frustration. Similarly, high transfer counts, SLA breaches, and frequent ticket reopens suggest unresolved issues[1][9].

Resolution effectiveness is another critical factor. AI tracks whether standard procedures were followed and if the problem was genuinely solved – not just closed. Machine learning models compare these behavioral patterns with historical data where survey scores exist, assigning predicted CSAT scores to the majority of interactions that typically lack direct feedback[1]. When compared to actual survey responses, these models achieve 80–90% accuracy[2].
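The "learn from surveyed cases, score the rest" step can be sketched as logistic regression fitted by batch gradient descent on conversations that have a survey answer, then applied to those that don't. The features, data, learning rate, and epoch count are invented; in practice you would use an established ML library and far more data:

```python
import math

def sigmoid(z: float) -> float:
    return 1 / (1 + math.exp(-z))

def train_logistic(X: list[list[float]], y: list[int], lr: float = 0.1,
                   epochs: int = 2000) -> list[float]:
    """Batch gradient descent on log loss; the bias is the last weight."""
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            xb = xi + [1.0]  # append a constant input for the bias
            err = sigmoid(sum(a * b for a, b in zip(w, xb))) - yi
            for j, v in enumerate(xb):
                grad[j] += err * v
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad)]
    return w

def predict(w: list[float], x: list[float]) -> float:
    return sigmoid(sum(a * b for a, b in zip(w, x + [1.0])))

# Hypothetical features per conversation: [transfers, reopens, sla_breached]
# Label: 1 if the survey answer was satisfied, else 0
surveyed_X = [[0, 0, 0], [0, 0, 0], [1, 0, 0], [2, 1, 1], [3, 2, 1], [0, 1, 0]]
surveyed_y = [1, 1, 1, 0, 0, 1]
weights = train_logistic(surveyed_X, surveyed_y)

# Conversations with no survey answer get a predicted score instead
unsurveyed = {"T-101": [0, 0, 0], "T-102": [3, 1, 1]}
for ticket, feats in unsurveyed.items():
    print(ticket, round(predict(weights, feats), 2))
```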

| Data Category | Specific Metrics Analyzed | What It Reveals |
|---|---|---|
| Interaction Metadata | Case duration, number of replies, transfers, escalations | High transfer counts or lengthy discussions often signal low satisfaction |
| Operational Context | SLA status, queue aging, reopen counts | Breached SLAs and frequent reopens indicate unresolved frustration |
| Behavioral Cues | Response timing, interruptions, conversation flow | Slow responses or repetitive exchanges suggest high customer effort |
| Resolution Data | Resolution quality, adherence to procedures | Failure to fully solve the issue results in negative scores |

Implementing Predictive CSAT with Supportbench

Supportbench takes predictive CSAT methodologies and integrates them directly into everyday support operations. By embedding Predictive CSAT into its platform, Supportbench removes the need for separate tools and eliminates the delays caused by traditional surveys. This approach allows teams to address customer concerns in real time, shifting satisfaction measurement from a reactive process to one that evaluates every customer interaction.

Built-In AI Capabilities

Supportbench’s AI technology evaluates interaction text, metadata, and historical data to predict customer satisfaction as it happens. These predictive scores are displayed right in the helpdesk interface, giving agents immediate insights within their queues and case details.

The platform goes beyond simple scoring by providing AI-generated case summaries that explain the reasoning behind each prediction. These summaries highlight specific pain points and process issues, helping managers quickly understand customer dissatisfaction without needing to sift through entire transcripts. Eric Klimuk, Founder and CTO of Supportbench, explains:

"This isn’t about mind-reading; it’s about data-driven inference based on learned correlations between interaction characteristics and likely outcomes."

Supportbench also includes Predictive Customer Effort Score (CES) and First Contact Resolution (FCR) detection capabilities. These tools measure support quality by identifying high-effort interactions, offering a more detailed picture than traditional quality assurance methods. Together, these features support proactive workflows that improve the overall customer experience.

Comparison: Traditional CSAT vs. Predictive CSAT

| Feature | Traditional CSAT Surveys | Supportbench Predictive CSAT |
|---|---|---|
| Coverage | Limited (≈20% response rate) | Universal (nearly 100% of interactions) |
| Data Source | Direct customer feedback (often biased) | Interaction text, metadata, and agent actions |
| Timing | Reactive (post-interaction) | Proactive (real-time or at case closure) |
| Bias | High (only extreme sentiments captured) | Low (systematic analysis of all data) |
| Actionability | Delayed; requires customers to speak up | Immediate; triggers alerts based on hidden signals |
| Context | Requires customer comments to understand "why" | Uses AI summaries to provide immediate context |

Source: [1]

Traditional CSAT surveys often struggle to exceed a 20% response rate, meaning the majority of customer interactions go unmeasured. Supportbench addresses this gap by analyzing every interaction objectively, removing the reliance on voluntary customer feedback.

Using Predictions in Daily Workflows

Supportbench transforms predictive insights into actionable steps through automation and seamless integration with other platforms. For instance, cases flagged with "Predicted Dissatisfied" or "Predicted High Effort" scores can automatically trigger manager reviews or create tasks in CRM and Customer Success platforms like Gainsight or Catalyst. This allows teams to intervene before customers decide to leave.

Eric Klimuk emphasizes:

"Predictive scores enable immediate intervention."

Support leaders use these insights for targeted coaching, identifying agents who frequently receive low scores. AI-generated summaries make it easier to deliver clear, actionable feedback to agents. Teams can also analyze recurring issues tied to "Predicted High Effort" scores, leading to process improvements such as better documentation or product updates.

For Customer Success Managers, predictive scores provide a complete view of account health by analyzing all support interactions – not just the ones that receive survey responses. This comprehensive perspective helps CSMs address potential risks during regular check-ins, bridging the gap between support quality and customer retention. This reinforces the importance of proactive, AI-driven customer care in maintaining strong relationships.

Best Practices for Training and Using Predictive Models

Building effective predictive CSAT models starts with using historical survey data to train them. This approach addresses the challenge of low survey response rates by filling in the gaps with data-driven insights.

Training Models with Historical Survey Data

The foundation of accurate predictive CSAT models lies in historical survey data. By learning from past customer interactions – where survey responses were provided – AI can identify patterns in language, AI-driven sentiment, resolution steps, and eventual satisfaction ratings. This training helps the model predict satisfaction levels based on similar scenarios.

To get started, define classifiers to evaluate key aspects like comprehension, sentiment, resolution, and overall satisfaction. Use large language model (LLM) prompts to capture qualitative factors such as tone and understanding. Aim for specific accuracy benchmarks: 85%+ for behavioral tasks, 75–85% for sentiment detection, and 65–75% for more complex satisfaction assessments [3]. These targets ensure the model supports real-time decision-making, aligning with the need for proactive customer service.
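Checking classifiers against those targets is simple bookkeeping once you have a human-reviewed sample. The sketch treats the lower bound of each quoted band as the minimum acceptable accuracy (an interpretation, not a rule from [3]); the classifier names and measured accuracies are hypothetical:

```python
# Minimum accuracy per task type: lower bound of each band quoted above [3]
TARGETS = {"behavioral": 0.85, "sentiment": 0.75, "satisfaction": 0.65}

def below_target(measured: dict[str, tuple[str, float]]) -> list[str]:
    """Names of classifiers whose accuracy against human review misses the
    target for their task type. `measured` maps name -> (task type, accuracy)."""
    return [name for name, (task, acc) in measured.items() if acc < TARGETS[task]]

# Hypothetical accuracies from spot-checking each classifier against human reviewers
measured = {
    "issue_resolved": ("behavioral", 0.91),
    "customer_frustrated": ("sentiment", 0.72),
    "customer_delighted": ("satisfaction", 0.68),
}
print(below_target(measured))  # ['customer_frustrated']
```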

MaestroQA suggests starting with an unweighted average of all approved classifiers to create a baseline [3]. Once this baseline is validated, apply weighted scores to prioritize the most accurate classifiers or those tied to critical business outcomes. For calibration, use a clean dataset of at least 200 interactions, pairing predictive scores with actual survey responses to measure correlation. A strong initial model should reach a correlation of 0.70 (70%) or higher, while more refined models can aim for 0.75 or above [3].
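The baseline-then-weighted approach and the correlation check might look like this. Assumptions: each classifier emits 0 or 1 per conversation, surveys use a 1–5 scale, the weights are placeholders, and "70%+ correlation" is read as a Pearson r of at least 0.70. Only six conversations are shown, far short of the recommended 200:

```python
import math
from typing import Optional

def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient r between two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def composite(outputs: dict[str, int], weights: Optional[dict[str, float]] = None) -> float:
    """Unweighted mean of 0/1 classifier outputs, or a weighted mean if weights are given."""
    if weights is None:
        weights = {name: 1.0 for name in outputs}
    total = sum(weights[name] for name in outputs)
    return sum(weights[name] * value for name, value in outputs.items()) / total

# Hypothetical classifier outputs per conversation, paired with the 1-5 survey score
conversations = [
    ({"understood": 1, "positive_tone": 1, "resolved": 1}, 5),
    ({"understood": 1, "positive_tone": 0, "resolved": 1}, 4),
    ({"understood": 1, "positive_tone": 1, "resolved": 0}, 3),
    ({"understood": 0, "positive_tone": 0, "resolved": 1}, 3),
    ({"understood": 0, "positive_tone": 0, "resolved": 0}, 1),
    ({"understood": 1, "positive_tone": 0, "resolved": 0}, 2),
]
surveys = [score for _, score in conversations]
baseline = [composite(out) for out, _ in conversations]

# Placeholder weights favoring "resolved"; tune only after the baseline is validated
weights = {"understood": 1.0, "positive_tone": 0.5, "resolved": 2.0}
weighted = [composite(out, weights) for out, _ in conversations]

MIN_R = 0.70  # reading "70%+ correlation" as r >= 0.70
for label, preds in (("baseline", baseline), ("weighted", weighted)):
    r = pearson(preds, surveys)
    print(f"{label}: r = {r:.2f}, passes = {r >= MIN_R}")
```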

"The reality is that AI models are only as good as the data they receive, and require customisation." – Weavely AI [11]

To ensure your model reflects your business’s unique needs, include data from both successful and unsuccessful customer journeys. For example, interactions with churned or disengaged customers can teach the model to differentiate between healthy and at-risk accounts [11]. Once the model is trained, continuous monitoring and fine-tuning are necessary to maintain its performance.

Continuous Validation and Bias Reduction

After training, ongoing validation is crucial to maintain accuracy and fairness. Validation means tracking the correlation between the model’s scores and actual survey responses over time. When discrepancies arise, such as false positives (a high predicted score but low actual satisfaction), investigate them thoroughly. These cases often reveal that the AI mistook technical resolution for customer satisfaction: the issue was resolved, but the customer was frustrated by the process [3].
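Pulling out the disagreements for review is a filter over the calibration set. A sketch assuming predicted scores in [0, 1] and 1–5 survey ratings; the cutoffs that define "high" and "low" are illustrative:

```python
from typing import NamedTuple

class Calibrated(NamedTuple):
    ticket_id: str
    predicted: float  # model score in [0, 1]
    survey: int       # customer rating, 1-5

def false_positives(rows: list[Calibrated], high: float = 0.7,
                    low_rating: int = 2) -> list[str]:
    """Tickets the model scored as satisfied but the customer rated poorly."""
    return [r.ticket_id for r in rows if r.predicted >= high and r.survey <= low_rating]

rows = [
    Calibrated("T-1", 0.92, 5),
    Calibrated("T-2", 0.81, 1),  # e.g. technically resolved, customer still unhappy
    Calibrated("T-3", 0.35, 2),
    Calibrated("T-4", 0.75, 2),
]
print(false_positives(rows))  # ['T-2', 'T-4']
```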

Address these gaps by refining prompts and adjusting classifier weights. This iterative process improves accuracy and ensures the model aligns more closely with genuine customer sentiment. Test changes against human judgment, especially for edge cases, and set accuracy thresholds based on task complexity. For subjective questions like "Was the customer delighted?" expect accuracy to fall within the 65–75% range [3].

Predictive CSAT tackles response bias found in traditional surveys by analyzing 100% of interactions, including those from customers who don’t respond to surveys. This approach highlights the sentiment of the "silent majority", offering a more complete view of customer satisfaction [4].

To avoid overwhelming your team, calibrate alert thresholds carefully. Striking the right balance ensures critical risks are flagged without triggering unnecessary notifications [10]. Work with support managers to fine-tune these thresholds, allowing AI to enhance – not replace – human judgment. By combining predictive insights with manager expertise, you can make well-informed decisions and take effective action.
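One way to calibrate is to pick the lowest alert cutoff that still catches a target share of historically dissatisfied cases, then see how many alerts that cutoff would fire. A sketch with invented data; the recall targets are assumptions to agree on with support managers:

```python
def choose_threshold(scores: list[float], dissatisfied: list[bool],
                     min_recall: float = 0.8) -> tuple[float, int]:
    """Pick the lowest alert cutoff that still catches `min_recall` of the
    historically dissatisfied cases.

    An alert fires when a predicted score is <= the cutoff, so a lower cutoff
    means fewer alerts. Returns (cutoff, number_of_alerts_it_would_fire).
    """
    positives = sum(dissatisfied)
    if positives == 0:
        raise ValueError("need at least one dissatisfied example")
    for cutoff in sorted(set(scores)):
        fired = [d for s, d in zip(scores, dissatisfied) if s <= cutoff]
        if sum(fired) / positives >= min_recall:
            return cutoff, len(fired)
    return max(scores), len(scores)  # only reached if min_recall > 1.0

# Hypothetical history: predicted CSAT scores and whether the survey was negative
scores = [0.10, 0.20, 0.25, 0.40, 0.45, 0.60, 0.70, 0.85, 0.90, 0.95]
dissatisfied = [True, True, False, True, False, False, True, False, False, False]
print(choose_threshold(scores, dissatisfied))        # (0.7, 7)
print(choose_threshold(scores, dissatisfied, 0.75))  # (0.4, 4)
```

In the sample data, raising the recall target from 75% to 80% nearly doubles the alert volume (4 to 7), which is exactly the trade-off to review with managers.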

Measuring the Business Impact of Predictive CSAT

Predictive CSAT isn’t just a tool for better understanding customer satisfaction – it’s a way to drive measurable business outcomes. By addressing gaps in traditional methods, it helps protect revenue and streamline operations.

Tracking Accuracy and Satisfaction Trends

The first step is ensuring your predictive CSAT model works as intended. A strong model should show a correlation of roughly 0.70–0.75 or higher between predicted scores and actual survey responses [3]. This confirms the system is reliable enough to act on.

Unlike traditional surveys that capture feedback from only a small sample, predictive CSAT evaluates 100% of interactions [3][4]. This means you’re not just hearing from the vocal minority but also uncovering trends among the "silent majority" who typically don’t respond to surveys. With this complete view, you can identify satisfaction drops in specific products, teams, or customer segments before they lead to bigger problems.

"Relying solely on biased survey data gives an incomplete picture of agent and team performance. Predictive scores allow you to measure agent performance and organizational strength based on the likely outcome of all their interactions." – Eric Klimuk, Founder and CTO, Supportbench [1]

Accuracy benchmarks also matter. Behavioral classifiers (e.g., "Did the agent solve the issue?") should hit 85%+ accuracy, sentiment detection should be 75-85%, and complex satisfaction predictions typically range from 65-75% [3]. Meeting these benchmarks ensures your system provides actionable insights instead of noise.

With these validated metrics, you’re equipped to take proactive steps – like reducing escalations and fine-tuning coaching strategies.

Reducing Escalations and Improving Coaching

One of the standout benefits of predictive CSAT is its ability to flag at-risk interactions in real-time. This allows managers to step in before customers escalate or churn. Companies using these systems have seen churn drop by 35% and negative CSAT incidents decrease from 18% to 5% [13]. Additionally, AI-driven interventions have boosted resolution efficiency by 25% and cut resolution times by 40% [13].

To make this work, set up automated alerts for "Predicted Dissatisfied" scores, particularly for high-value accounts. If the system detects frustration during an interaction, route the case to experienced agents or retention specialists immediately [2]. By addressing issues in the moment, you prevent escalations rather than reacting after the fact.

For coaching, predictive scores provide a clearer picture of agent performance. Instead of randomly sampling tickets, you can analyze 100% of an agent’s interactions [4]. For example, if an agent consistently struggles with billing inquiries, you can focus training on that specific area. This targeted approach eliminates guesswork and provides actionable feedback that improves performance.
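Finding patterns like "struggles with billing" is an aggregation over every scored interaction an agent handled. A sketch with hypothetical agents, categories, scores, and cutoffs; `min_cases` keeps a single bad ticket from labeling a weak spot:

```python
from collections import defaultdict
from statistics import mean

def weak_spots(records: list[tuple[str, str, float]], threshold: float = 0.5,
               min_cases: int = 3) -> dict[str, list[str]]:
    """Map agent -> categories where their mean predicted CSAT falls below threshold."""
    buckets: dict[tuple[str, str], list[float]] = defaultdict(list)
    for agent, category, score in records:
        buckets[(agent, category)].append(score)
    result: dict[str, list[str]] = defaultdict(list)
    for (agent, category), scores in sorted(buckets.items()):
        if len(scores) >= min_cases and mean(scores) < threshold:
            result[agent].append(category)
    return dict(result)

# Hypothetical (agent, category, predicted CSAT) records
records = [
    ("ana", "billing", 0.30), ("ana", "billing", 0.40), ("ana", "billing", 0.35),
    ("ana", "login", 0.90), ("ana", "login", 0.85), ("ana", "login", 0.80),
    ("ben", "billing", 0.80), ("ben", "billing", 0.75), ("ben", "billing", 0.90),
    ("ben", "api", 0.20),  # too few cases to judge
]
print(weak_spots(records))  # {'ana': ['billing']}
```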

Both real-time interventions and data-driven coaching lead to better operational efficiency and a noticeable return on investment.

Proving ROI with Measurable Metrics

The business case for predictive CSAT is clear: it protects revenue and reduces costs. Organizations using predictive analytics have seen churn risks drop by 60% [13], safeguarding their recurring revenue. One team even raised its CSAT score from 4.1 to 4.6 in under 90 days [8].

"Predicted CSAT and real-time sentiment analysis is our early warning system, allowing us to identify at-risk customers and intervene before they even reach out with an issue. Why wait for someone to be upset? With TheLoops predictive CSAT, we went from 4.1 to 4.6 in less than 90 days." – Monika Aufdermauer, VP of User Success, People+Culture [8]

Cost savings come from automating quality management. AI-powered systems eliminate the need for manual ticket sampling, reducing QA overhead while covering all interactions [2][3]. Companies leveraging AI trained on their own data are 3.5 times more likely to cut costs [12]. Some report a 40% drop in operational costs and a 50% boost in efficiency [12].

To prove ROI, track these key metrics:

- Correlation between predicted and actual CSAT scores

- Percentage of at-risk customers flagged before escalation

- Reduction in churn among flagged accounts

- Improvements in agent performance over time

- Decrease in manual QA hours
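Two of these metrics reduce to simple ratios over account-level records. A sketch with hypothetical fields and data; note that comparing churn between flagged accounts that were and were not acted on is only suggestive, since the two groups may differ in other ways:

```python
from dataclasses import dataclass

@dataclass
class AccountOutcome:
    flagged: bool     # model flagged the account as at-risk before any escalation
    escalated: bool   # the account escalated during the period
    intervened: bool  # someone acted on the flag
    churned: bool

def rate(accounts: list[AccountOutcome], attr: str) -> float:
    """Share of accounts where the boolean field `attr` is True."""
    return sum(getattr(a, attr) for a in accounts) / len(accounts)

# Hypothetical outcomes for six accounts over one quarter
accounts = [
    AccountOutcome(flagged=True, escalated=True, intervened=True, churned=False),
    AccountOutcome(flagged=True, escalated=True, intervened=False, churned=True),
    AccountOutcome(flagged=False, escalated=True, intervened=False, churned=True),
    AccountOutcome(flagged=True, escalated=False, intervened=True, churned=False),
    AccountOutcome(flagged=True, escalated=False, intervened=False, churned=False),
    AccountOutcome(flagged=True, escalated=False, intervened=True, churned=False),
]

escalated = [a for a in accounts if a.escalated]
flagged = [a for a in accounts if a.flagged]
acted_on = [a for a in flagged if a.intervened]
ignored = [a for a in flagged if not a.intervened]

print(f"escalations flagged in advance:  {rate(escalated, 'flagged'):.0%}")
print(f"churn, flagged and acted on:     {rate(acted_on, 'churned'):.0%}")
print(f"churn, flagged but not acted on: {rate(ignored, 'churned'):.0%}")
```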

Conclusion

Traditional CSAT surveys are no longer cutting it for modern B2B support teams. They leave you in the dark about most customer interactions, capturing only a fraction of the bigger picture[1][4]. Predictive CSAT changes the game by analyzing every single conversation in real time, giving you a complete and up-to-date view of customer satisfaction.

The numbers speak for themselves: one team using AI-driven predictive CSAT raised its score from 4.1 to 4.6 in under 90 days[8]. By spotting at-risk accounts early, offering tailored coaching based on full interaction histories, and analyzing sentiments to uncover the root causes of dissatisfaction, these tools help support teams resolve issues before they spiral out of control.

This approach transforms how customer satisfaction is measured and managed. Instead of reacting days later, you can intervene while the conversation is still happening.

For B2B teams handling complex accounts and lengthy cases, predictive CSAT provides an early-warning system that safeguards high-value relationships. Whether it’s reducing survey fatigue, simplifying quality assurance, or finally understanding the "silent majority" of customers, this AI-powered method gives you the speed and visibility needed to stay ahead.

It’s time to leave outdated surveys behind and embrace a solution that delivers real-time insights into customer sentiment.

FAQs

What data do I need to start predictive CSAT?

To implement predictive CSAT, you’ll need to gather data that goes beyond traditional survey responses. Here’s the type of data to focus on:

- Customer interactions: Analyze emails, chats, and calls for sentiment, tone, and the level of effort customers experience during their interactions.

- Conversation details: Pay attention to text content, voice tone, and subtle behavioral cues that can reveal customer emotions.

- Experience signals: Look at metrics such as resolution quality and overall engagement to gauge satisfaction.

- Behavioral data: Study patterns like product usage, engagement trends, and escalation rates to uncover deeper insights into customer satisfaction.

This broader range of data helps paint a more complete picture of how customers feel, even without direct survey feedback.

How do we validate predictive CSAT is accurate for us?

To check how well predictive CSAT works, compare its predictions with actual customer feedback over time. If traditional survey results are available, see how closely they match the AI-generated scores. Keep an eye on important metrics like retention, churn rates, and escalations to see if they align with the predictive scores. Make it a habit to review and adjust the model regularly based on the results, ensuring it stays accurate and useful for your customers.

How should teams use low predicted CSAT alerts day-to-day?

Teams can leverage low predicted CSAT alerts to tackle potential customer dissatisfaction before it spirals into bigger problems. These alerts pinpoint interactions that might lead to unhappy customers, giving agents the chance to step in early and resolve concerns. By keeping a close eye on these warnings, teams can allocate resources wisely, follow up with customers who might need extra attention, and take specific actions to boost satisfaction. This proactive approach helps reduce churn, improve overall experiences, and avoid unnecessary escalations.